A Paradigm Shift: Exploring the Future of LLM App Development With ZBrain

In the dynamic and constantly evolving field of artificial intelligence, one technology has truly redefined the landscape: large language models (LLMs). These sophisticated AI models can comprehend and generate human-like language, and they are significantly changing how businesses operate across diverse industries. The possibilities seem boundless, as LLM applications offer the potential to enhance customer interactions, streamline processes, and even create entirely new products and services.

However, despite their immense promise, fully capitalizing on LLMs is no small feat. It demands considerable time, resources, and expertise in AI development—a barrier that often leaves many businesses struggling to harness the potential they offer. This is where ZBrain comes into play—a cutting-edge AI platform designed to democratize access to the power of LLMs.

The Rise and Prominence of Large Language Models

The rise of Large Language Models (LLMs) has been a remarkable journey in the field of artificial intelligence. Early LLMs such as BERT and the first GPT emerged in 2018, with GPT-2 following in 2019, but it is only in recent years, nearly five years later, that interest in LLMs has surged. This surge can be attributed largely to the substantial media attention LLMs garnered after the release of ChatGPT at the end of November 2022.

Since the advent of ChatGPT, we have witnessed a plethora of applications harnessing the power of LLMs. These applications range from the famous ChatGPT to more personalized chatbots like Michelle Huang’s conversation with her childhood self. LLMs have also found their way into writing assistants, enabling tasks such as editing and summarization (e.g., Notion AI), specialized copywriting (e.g., Jasper and Copy.ai), and contracting (e.g., Lexion). Moreover, LLMs have become valuable allies in the world of programming, assisting with writing and debugging code (e.g., GitHub Copilot), testing (e.g., Codium AI), and even identifying security threats (e.g., Socket AI).

As the number of LLM-powered applications grows, individuals and developers are sharing their experiences and insights. However, the journey to building production-ready LLM-powered applications comes with its own set of hurdles.

The Challenges of LLM Applications Development

Large language model-powered applications demonstrate diverse capabilities, such as comprehending the context, nuances, and relationships within text, which enable them to perform a wide array of language-related tasks, including text generation, translation, sentiment analysis, and more. However, training an LLM from scratch as the foundation for an application is a complex undertaking that demands extensive computational power, access to massive datasets, and specialized expertise in machine learning. Training involves processing billions of text samples so that the model can capture the nuances of human language: its syntax, semantics, and contextual meanings. This computationally intensive task requires substantial hardware resources, including powerful GPUs and TPUs, and can incur significant costs for organizations.

For many organizations, developing and fine-tuning LLM applications can be daunting. The high resource requirements and technical complexities may prove prohibitive, particularly for smaller businesses or those without extensive machine learning expertise. This limitation could prevent them from fully leveraging LLMs’ benefits, such as enhancing customer experiences, automating tasks, and gaining valuable insights from vast amounts of text data.

ZBrain: Streamlining LLM Applications Development

ZBrain emerges as a transformative solution to the challenges of LLM-powered application development. It is a generative AI-powered platform that streamlines the process of creating custom LLM applications. ZBrain offers businesses a user-friendly interface, making it accessible to users with varying technical expertise. With ZBrain, organizations can tap into the power of LLMs and build sophisticated applications quickly and efficiently.

Features of ZBrain

- Harnessing the Full Potential of LLMs

ZBrain empowers businesses to leverage the capabilities of LLMs in various domains. By providing seamless integration with diverse data sources, ZBrain enables the use of proprietary enterprise data, ensuring that AI applications are highly personalized and relevant to specific business needs. Whether it’s customer support, data analysis, content generation, or knowledge management, ZBrain unlocks the full potential of LLM apps in diverse contexts.

- Private Data Integration for Enhanced Security

Data privacy and security are foremost concerns for corporations. ZBrain addresses these concerns by offering private data integration. Businesses can deploy ZBrain in a secure cloud environment, ensuring that sensitive data remains protected and within the confines of the organization’s infrastructure. This level of data security instills confidence in organizations to harness the power of LLM apps without compromising privacy.

- Seamless Deployment and Scalability

Deploying LLM applications can be challenging, especially when integrating them into existing workflows. ZBrain simplifies this process with seamless deployment options. Businesses can choose to host the applications on a private self-hosted cloud or leverage ZBrain’s robust cloud infrastructure. The platform’s flexibility allows for easy integration, ensuring minimal disruptions to ongoing operations.

- Empowering Business Functions

ZBrain’s versatility makes it a valuable asset across various business functions. From enhancing customer support with intelligent chatbots to automating data analysis and generating personalized content, ZBrain streamlines diverse operations and enhances productivity. Moreover, ZBrain’s natural language processing capabilities enable intuitive and human-like interactions, providing exceptional user experiences.

- Making AI Accessible to All

ZBrain is committed to democratizing generative AI technology. It offers multiple pricing plans, including a free tier for exploration, making it accessible to businesses of all sizes. Organizations can scale up as their needs grow with additional add-ons and enterprise-level support. ZBrain empowers businesses to embark on an AI journey without the barriers of cost or complexity.

How Does ZBrain Utilize LLMs for Advanced Generative AI Capabilities?

ZBrain leverages Large Language Models (LLMs) at the core of its platform to empower businesses with advanced generative AI capabilities. Integrating LLMs within ZBrain allows users to create sophisticated LLM applications and engage with their data in a natural language format. Here’s how ZBrain utilizes LLMs:

- Foundation Models: ZBrain integrates with premier foundation models from industry leaders like OpenAI, Anthropic, Google, and others. These foundation models serve as the basis for various LLM functionalities, offering a wide range of language understanding and generation capabilities.

- Fine-tuning With Proprietary Data: While pre-trained foundation models provide a solid starting point, ZBrain takes it a step further by enabling fine-tuning with an organization’s proprietary enterprise data. Fine-tuning allows businesses to customize the LLMs according to their specific business needs, making the LLM applications more accurate and relevant to their domain.

- LLM Chain: The LLMChain consists of a prompt template and an LLM or chat model. It formats the prompt template using input key values and memory key values (if available), passes the formatted string to the LLM, and returns the LLM’s output. This allows users to interact with the LLMs within their applications easily.

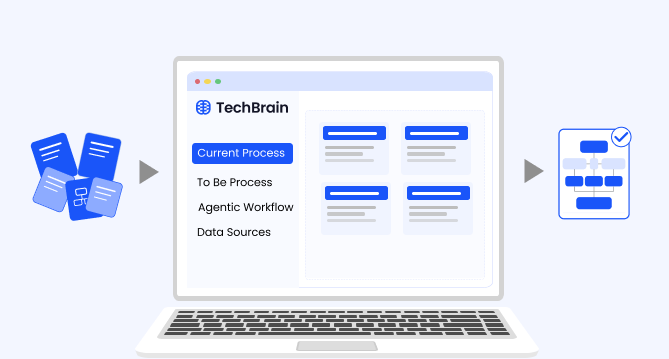

- Seamless Integration With ZBrain Flow: ZBrain Flow is a robust feature of ZBrain that enables users to create complex business logic with minimal code. It presents a drag-and-drop interface that permits users to connect LLMs, prompt templates, extraction tools, and other components effortlessly. This seamless integration enables the creation of custom ChatGPT-like apps catered to specific business use cases.

- Conversational Memory: ZBrain incorporates the concept of “Memories” to retain previous interactions with the LLMs. The ConversationBufferMemory, for instance, is a component that allows the AI to store and manage the conversation history between a human and the AI. This helps ZBrain apps to generate context-aware responses, making the conversations more natural and continuous.
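To make the LLM Chain and Conversational Memory ideas above more concrete, here is a minimal Python sketch of how a prompt template, a model call, and a conversation buffer might fit together. It is an illustration under stated assumptions, not ZBrain's actual implementation or API: the names `ConversationBuffer`, `SimpleChain`, and `fake_llm` are hypothetical, and `fake_llm` is a stand-in for a real foundation model call.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class ConversationBuffer:
    """Hypothetical memory component: keeps every (human, AI) turn in order."""
    turns: List[Tuple[str, str]] = field(default_factory=list)

    def save(self, human: str, ai: str) -> None:
        self.turns.append((human, ai))

    def as_text(self) -> str:
        # Render the history as plain text that can be placed in a prompt.
        return "\n".join(f"Human: {h}\nAI: {a}" for h, a in self.turns)


@dataclass
class SimpleChain:
    """Hypothetical chain: a prompt template plus a callable model."""
    template: str
    llm: Callable[[str], str]
    memory: Optional[ConversationBuffer] = None
    input_key: str = "question"   # which input is stored as the human turn
    memory_key: str = "history"   # template slot filled from memory

    def run(self, **inputs: str) -> str:
        values = dict(inputs)
        if self.memory is not None:
            values[self.memory_key] = self.memory.as_text()
        prompt = self.template.format(**values)  # fill the template
        output = self.llm(prompt)                # pass it to the model
        if self.memory is not None:
            self.memory.save(inputs[self.input_key], output)
        return output


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call; only reports the prompt length."""
    return f"[stub reply, prompt had {len(prompt)} characters]"


TEMPLATE = "Conversation so far:\n{history}\nHuman: {question}\nAI:"

chain = SimpleChain(template=TEMPLATE, llm=fake_llm, memory=ConversationBuffer())
first = chain.run(question="What is ZBrain?")
second = chain.run(question="How does it use memory?")
print(chain.memory.as_text())
```

On the second call, the first exchange is already in the buffer, so the model receives it as part of the prompt. That is the mechanism that lets a chat application produce context-aware replies, and swapping `fake_llm` for a real model client would leave the rest of the sketch unchanged.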

Endnote

ZBrain stands as a powerful enabler, allowing businesses to unleash the full potential of large language models with ease and efficiency. By providing a user-friendly interface, private data integration, and seamless deployment, ZBrain opens the doors to transformative LLM app development across industries. With ZBrain, organizations can tap into the power of LLM applications, optimize business processes, and deliver exceptional user experiences. As the generative AI landscape continues to evolve, ZBrain emerges as a catalyst for innovation and success in the modern business world. Embrace the power of ZBrain and unlock endless possibilities for growth and efficiency in your organization.

Author’s Bio

An early adopter of emerging technologies, Akash leads innovation in AI, driving transformative solutions that enhance business operations. With his entrepreneurial spirit, technical acumen and passion for AI, Akash continues to explore new horizons, empowering businesses with solutions that enable seamless automation, intelligent decision-making, and next-generation digital experiences.

Insights

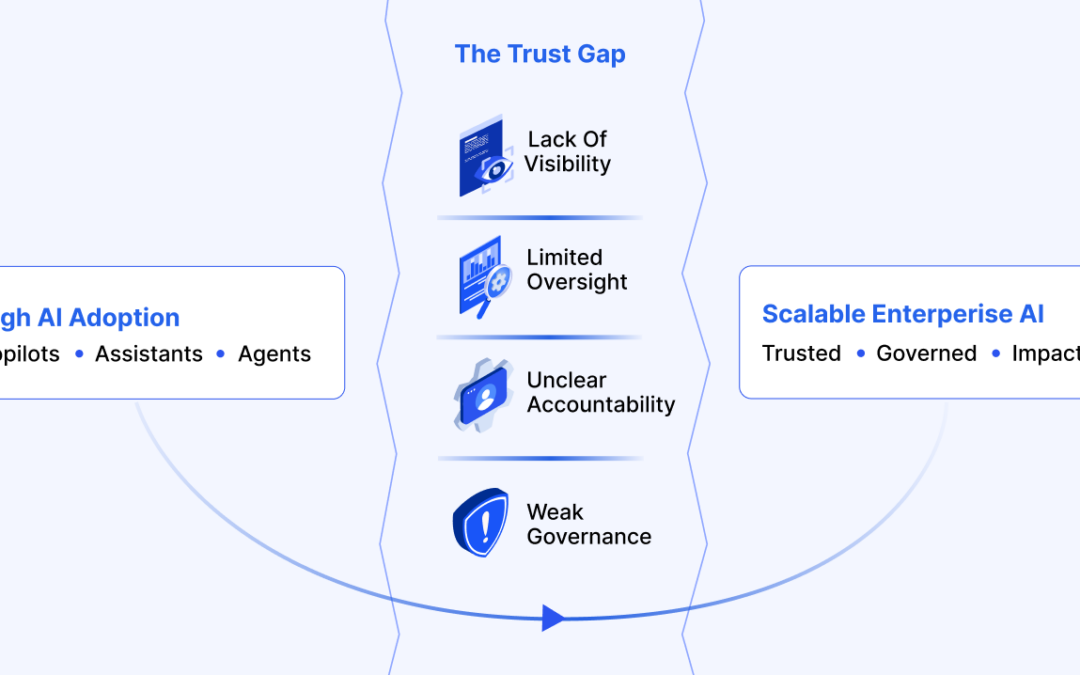

The AI Trust Gap: Why Governance Architecture Determines Enterprise Value

The trust gap surrounding enterprise AI is fundamentally an architectural challenge, and its solution is increasingly well understood.

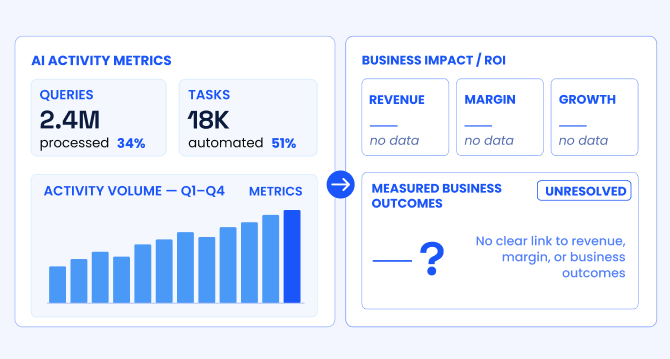

The AI ROI illusion: Why enterprises struggle to measure AI impact

Organizations with stronger measurement discipline are better positioned to link AI deployments to measurable business outcomes, prioritize high-impact use cases across the enterprise, allocate capital more effectively, and continuously refine models using real-world performance feedback.

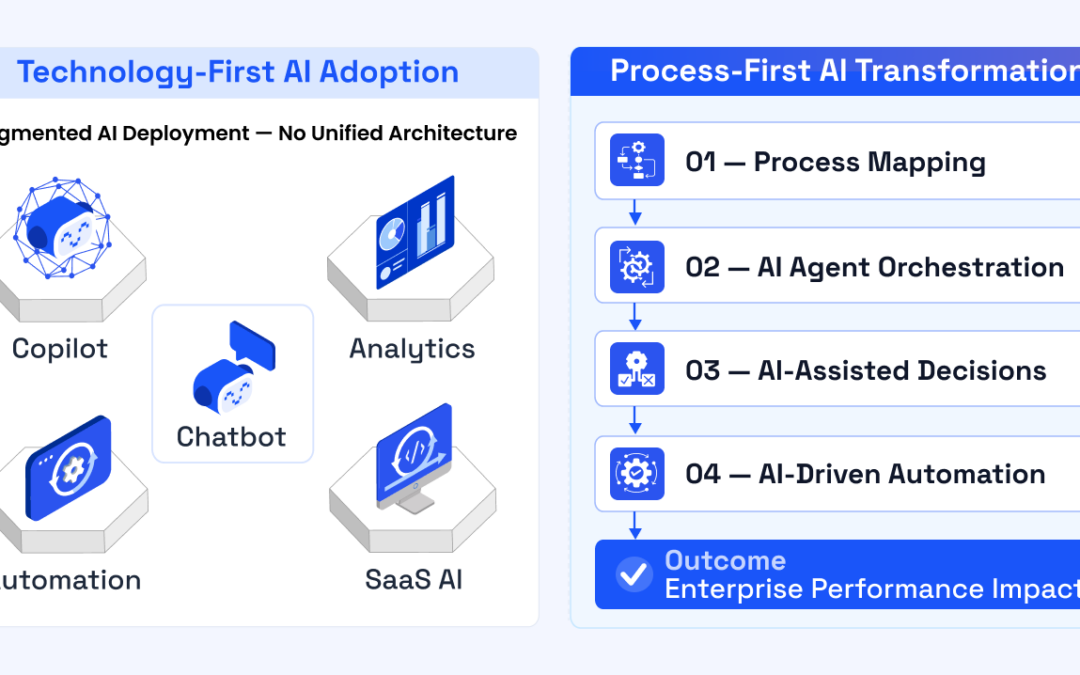

The agentic enterprise: Why AI success requires an operating model redesign

Organizations that redesign their operating models around agentic AI are beginning to outperform those that apply AI only incrementally.

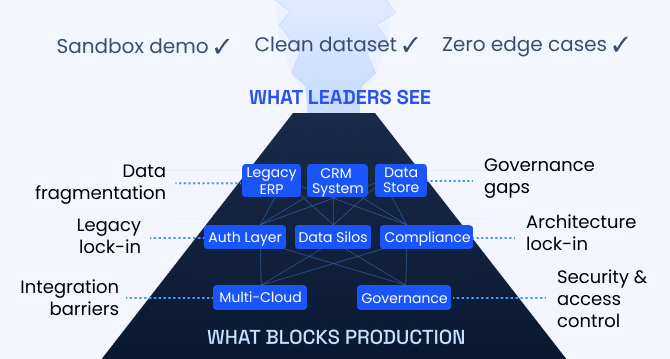

Enterprise AI pilot-to-production gap: Root causes & how to address them

The underlying cause is structural. In many enterprises, AI pilots are developed on infrastructure that was not designed to support production deployment.

Solution architecture best practices: A guide for enterprise teams

The architecture design process culminates in a set of documented artifacts that communicate the solution to development, operations, and business teams.

Common solution architecture design challenges and solutions

Solution architecture must evolve from fragmented documentation practices to a structured, collaborative, and continuously validated design capability.

Why structured architecture design is the foundation of scalable enterprise systems

Structured architecture design guides enterprises from requirements to build-ready blueprints. Learn key principles, scalability gains, and TechBrain’s approach.

Intranet search engine guide: How it works, use cases, challenges, strategies and future trends

Effective intranet search is a cornerstone of the modern digital workplace, enabling employees to find trusted information quickly and work with greater confidence.

Enterprise knowledge management guide

Enterprise knowledge management enables organizations to capture, organize, and activate knowledge across systems, teams, and workflows—ensuring the right information reaches the right people at the right time.