Understanding the A2A protocol: Scope, core components, design principles, benefits, security, and best practices


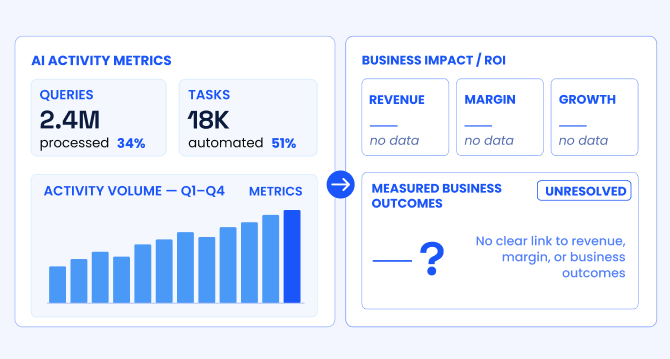

The era of artificial intelligence is no longer on the horizon; it is transforming how organizations automate, collaborate and innovate. As organizations embed specialized AI agents across operations, analytics and decision-making, a new challenge emerges: enabling these autonomous systems to communicate and orchestrate complex workflows seamlessly and securely.

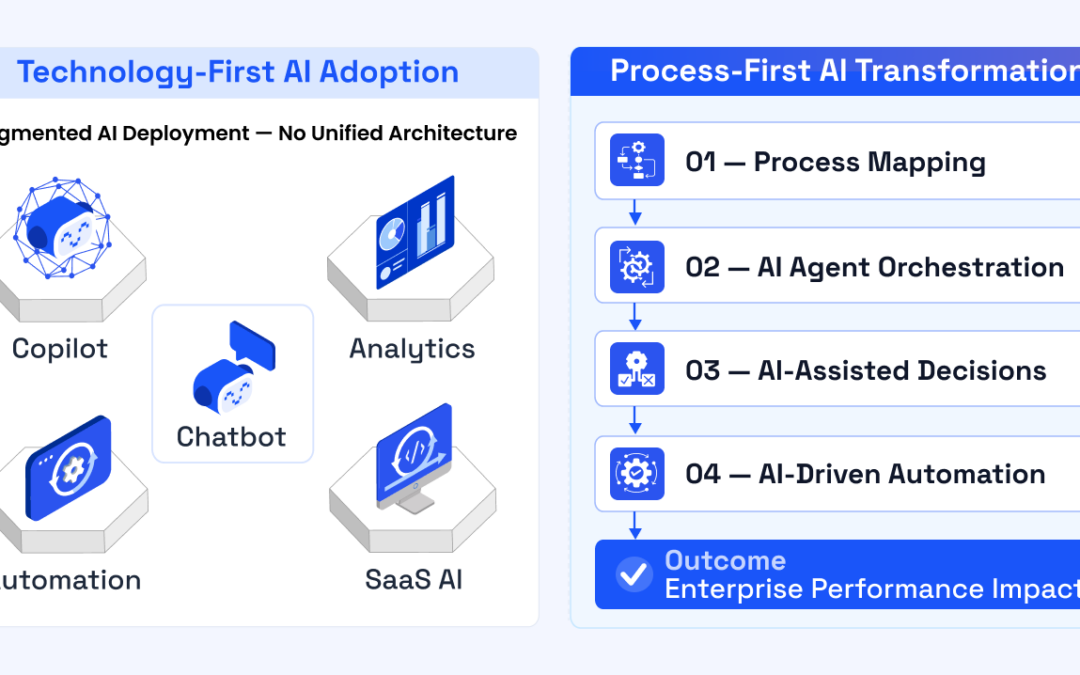

Consider this AI adoption landscape:

- More than 80% of organizations are now integrating AI as a core component of their operations.

- Yet even as global AI spending is projected to exceed $200 billion by 2025, 74% of organizations still struggle to realize and scale tangible value from these investments.

- Despite this momentum, interoperability remains elusive: 76% of business leaders cite implementation complexity as their top challenge, while 35% of organizations deploy AI across multiple departments.

The key barrier is not model capability but the complexity of connecting a growing array of specialized AI agents—each developed on different platforms and for different functions.

Against this backdrop, Google’s Agent-to-Agent (A2A) protocol emerges as a decisive step forward. Much like Anthropic’s Model Context Protocol (MCP), which standardized AI-to-tool connections by providing structured, real-time context to large language models (LLMs), A2A unifies how AI agents communicate with each other regardless of the underlying framework or vendor. As a cross-platform specification, it enables scalable multi-agent collaboration, allowing AI agents to communicate, collaborate and delegate tasks across heterogeneous systems. As organizations move from siloed pilots to orchestrated agent ecosystems, A2A is becoming the foundation for intelligent, modular and future-proof enterprise automation.

The following sections explore why Google’s A2A protocol is set to reshape enterprise AI and how organizations can leverage it for scalable, secure agent collaboration.

- Historical background: From siloed agents to the need for open standards

- Current challenges: The fragmentation of agentic AI

- Defining Agent-to-Agent (A2A) protocol for the next wave of enterprise AI

- Understanding Google’s Agent2Agent (A2A) protocol: Core components and how they work

- A2A communication flow: From discovery to delivery

- Key design principles of Google’s Agent2Agent (A2A) protocol

- Security and privacy in Google’s Agent2Agent (A2A) protocol

- Best practices for adopting the A2A protocol

- Core benefits of adopting A2A in the enterprise

Historical background: From siloed agents to the need for open standards

The vision of intelligent agents collaborating to solve complex problems dates to the early days of distributed artificial intelligence. In the 1990s, protocols such as the Knowledge Query and Manipulation Language (KQML) and the Foundation for Intelligent Physical Agents Agent Communication Language (FIPA ACL) laid the groundwork for agent communication, drawing on concepts from speech act theory, modal logic and the belief-desire-intention (BDI) model. These early agent communication languages aimed to standardize message structure, intent and negotiation between autonomous systems but faced significant barriers:

Limited adoption and interoperability: Early protocols, such as KQML and FIPA ACL, were largely confined to academic and research settings, with minimal enterprise-scale adoption. Integration remained labor-intensive and brittle.

Security gaps: Most historical agent systems operated in closed environments, lacking robust, scalable security models. As distributed agents moved to open networks, threats such as message spoofing and privilege escalation became increasingly significant.

Scaling challenges: Manual credential management, fragmented APIs and lack of automation made scaling agent networks difficult, especially as organizations expanded into multicloud and hybrid environments.

Enterprise integration approaches evolved in parallel, moving from SOAP and WS-Security (robust but heavyweight, and typically deployed alongside enterprise service buses) to cloud-native standards such as OAuth 2.0 and JSON Web Tokens (JWTs), and later to zero-trust architectures. Each addressed specific aspects of agent identity, message security and access management, but none provided a universal, agent-first standard for communication across diverse frameworks and vendors.

Current challenges: The fragmentation of agentic AI

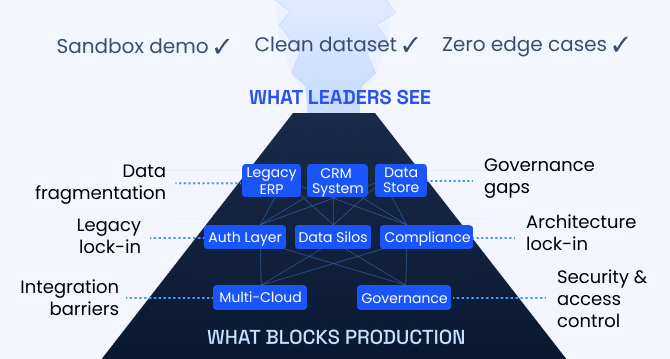

Today’s enterprise AI landscape is characterized by the proliferation of specialized agents – autonomous systems that excel in particular tasks, ranging from analytics to customer support. But as organizations deploy more agents, several fundamental challenges have emerged:

Fragmentation and custom integration: Agents are often built on different frameworks (LangGraph, CrewAI, Semantic Kernel or proprietary platforms), each with its own APIs, authentication and communication formats. Integrating them requires custom, point-to-point code for each connection – an approach that quickly becomes unsustainable as the number of agents and use cases grows.

The NxM problem: When each of N agents must integrate separately with each of M tools or data sources, the number of required integrations grows to N × M. This leads to compounding technical complexity, brittle architectures and high operational costs.

Security and trust boundaries: Without standardized protocols for agent discovery, authentication and authorization, AI agents may mistakenly communicate with unauthorized counterparts, expose sensitive data or perform actions beyond their intended permissions – especially as agents operate across organizational or even geographic boundaries.

Lack of composable workflows: Without a standard communication protocol, agents cannot easily delegate tasks, negotiate or form dynamic teams. As a result, organizations struggle to automate complex, cross-functional workflows that require input from multiple domain experts, human or AI.

Scaling and lifecycle management: Manual processes for updating and replacing security credentials (such as API keys, tokens, passwords or certificates), fragmented monitoring and inconsistent lifecycle management for agents (for example, onboarding, updating or retiring capabilities) hinder agility and operational resilience.
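To make the N×M growth from the challenges above concrete, here is a small arithmetic sketch comparing point-to-point wiring with a shared protocol. It rests on a simplifying assumption: with a common standard, each agent and each tool implements the protocol once, so the count grows as N + M. The function names are illustrative only.

```python
def point_to_point_integrations(n_agents: int, m_tools: int) -> int:
    """Every agent needs its own custom connector to every tool or data source."""
    return n_agents * m_tools


def shared_protocol_adapters(n_agents: int, m_tools: int) -> int:
    """Assumption: with a shared protocol, each participant implements it once."""
    return n_agents + m_tools


for n, m in [(5, 10), (20, 50), (100, 200)]:
    print(
        f"N={n:>3}, M={m:>3}: "
        f"point-to-point={point_to_point_integrations(n, m):>6}, "
        f"shared protocol={shared_protocol_adapters(n, m):>4}"
    )
```

With 20 agents and 50 tools, for example, point-to-point wiring needs 1,000 connectors, while the shared-protocol model needs 70 adapters.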

Defining Agent-to-Agent (A2A) protocol for the next wave of enterprise AI

To overcome integration and interoperability hurdles, a fundamentally new approach is required – one that enables autonomous agents to collaborate securely, efficiently and at scale.

The Agent-to-Agent (A2A) protocol is designed to solve these problems. It is an open standard that establishes a secure, scalable and composable way for AI agents to discover each other, share their capabilities, delegate tasks and exchange results – regardless of how or where they were built.

At its core, A2A standardizes:

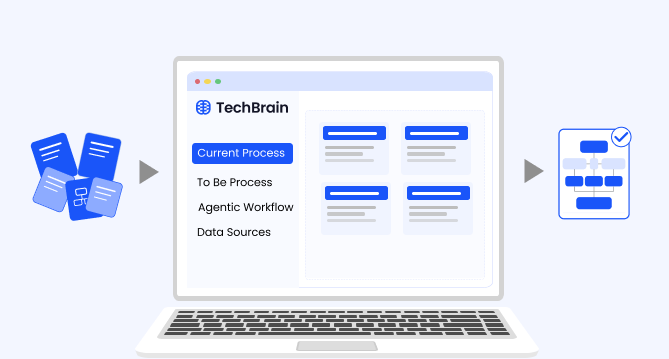

Capability discovery: Agents can announce their capabilities and how to connect, allowing teams to add new functions without complicated integration projects. Using structured “Agent Cards” (machine-readable JSON), agents publish their functions and interfaces at a known URL, enabling automated discovery, onboarding and integration.

Secure task management: A2A provides a consistent way for agents to assign, track and complete work, supporting everything from quick requests to complex, multistep processes.

Multimodal collaboration: The protocol supports the exchange of diverse content types, such as text, structured data, files, and audio and video streams, enabling rich multi-agent interactions.

Security and authentication: Trust and privacy are enforced from the start, with robust authentication and traceable communication between all participating agents.

Unlike earlier generations of agent protocols, A2A is built for the realities of today’s enterprise AI:

- It is cloud-agnostic, modality-agnostic and framework-agnostic, and it integrates with both legacy and modern stacks.

- It is designed with zero-trust principles, minimizing the risk of common attack vectors often seen in earlier agent-based systems.

- Its standards-based, modular approach means agents can be developed and deployed independently, then seamlessly composed into robust digital teams.

Understanding Google’s Agent2Agent (A2A) protocol: Core components and how they work

Google’s Agent-to-Agent (A2A) protocol provides a foundation for interoperability – whether agents are built in-house, by third parties or run on different platforms. It is built around a set of core concepts that define how agents interact. Understanding these concepts is critical for developing or integrating with A2A-compliant systems. The following sections break down its core components and show how they work together in real-world scenarios.

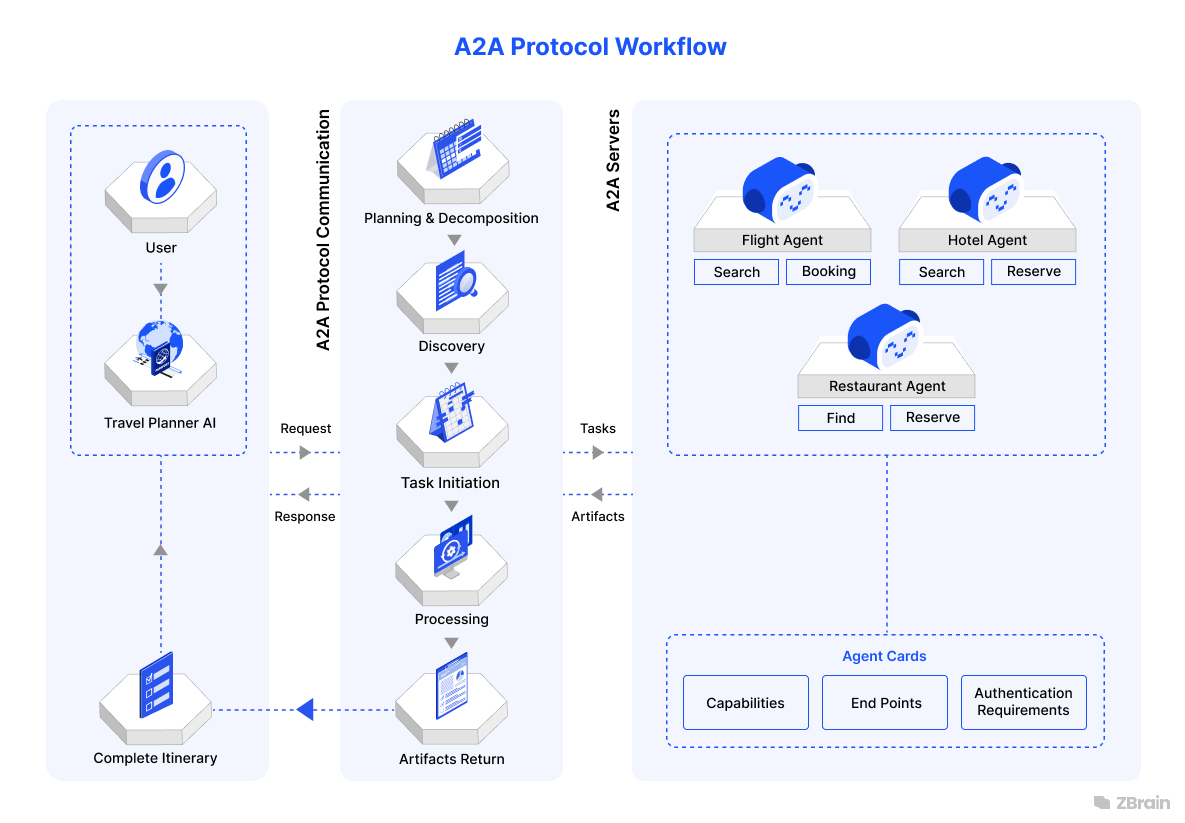

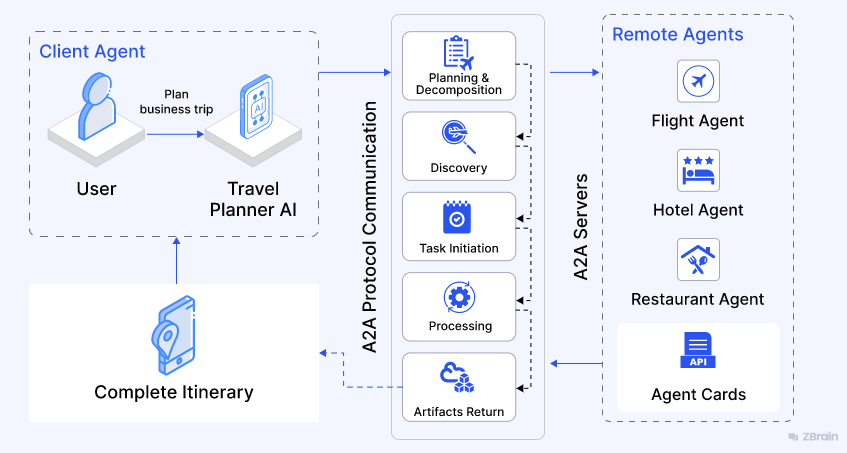

A2A protocol overview: The core actors

At its heart, the A2A protocol defines three core actors:

User: The end user (human or automated service) who initiates a request or goal that requires agent assistance.

A2A client (client agent): The agent, service or application that initiates tasks by reaching out to other agents for information or action. The client initiates communication using the A2A protocol and acts as the orchestrator or project manager in a multi-agent workflow. For example, an enterprise travel planner AI agent coordinates a business trip, initiating requests for flight, hotel or restaurant bookings.

A2A server (remote agent): An AI agent, or agentic system, that provides an HTTP endpoint compliant with the A2A protocol. It accepts incoming requests from client agents, processes assigned tasks and sends back results or status updates. From the client’s perspective, the remote agent is a black box – its internal logic, memory and tools remain hidden, but its capabilities are clearly defined and accessible through the A2A interface. For example, an airline agent books flights, a hotel agent reserves rooms or a restaurant agent checks dining availability.

These agents communicate using familiar, secure web standards – primarily HTTP, JSON-RPC and, when needed, server-sent events (SSE) – making it easy to fit A2A into modern cloud or enterprise environments.

Fundamental communication elements

- Agent card

A JSON metadata document, typically published at a well-known URL (for example, /.well-known/agent-card.json), that describes an A2A server. By publishing its capabilities in this machine-readable card, an agent enables client agents to discover the most suitable peer for a given task and initiate communication using the A2A protocol. Like a digital résumé or capability sheet, each agent’s card lists its functions, endpoint and authentication method.

Example:

The hotel agent’s card advertises skills such as “check room availability” and “make reservation”, accepts inputs such as dates and room type, and uses OAuth 2.0 for security.

- A2A server (remote agent)

The A2A server is the agent exposing its capabilities over the network. It implements the A2A protocol at a published endpoint, receives structured JSON-RPC requests, interprets tasks based on its advertised functions (via the agent card) and returns structured responses. Depending on the configuration, it may also send progress updates or stream partial results.

Example:

A flight-booking agent registers its capabilities (for example, findFlights) in its agent card. When a request arrives via A2A, it queries external flight APIs, processes options and returns itineraries that match the user’s preferences.

- A2A client (client agent)

The A2A client is the agent initiating remote collaboration by sending JSON-RPC requests to one or more A2A-compliant server agents. It uses agent cards for capability discovery, selects the appropriate agent or agents, and coordinates responses into a coherent workflow. A client agent may orchestrate multiple downstream agents to complete multistep tasks.

Example:

A travel planner agent receives a user query such as “Book me a trip to Berlin.” It queries multiple A2A agents (one for flights, one for hotels and one for local transportation) and combines their responses into a unified itinerary.

- Task

A task is the central unit of work, tracked by a unique task ID. Each task advances through a defined lifecycle and supports both short-lived request/response interactions and long-running asynchronous tasks.

Example:

- Find direct flights to Tokyo, May 15–20.

- Reserve hotel near Shinjuku, 4-star minimum.

- Message

Tasks are advanced by exchanging messages – structured communications between agents that contain the sender’s role (user or agent) and the content (parts).

Example:

- Message: Which class of service do you prefer – economy, business or first?

- Response: Business class.

- Part

Every message or artifact contains one or more parts – typed as text, file or structured data.

- Text part: For plain or formatted text.

- File part: For transmitting files, either inline as base64-encoded bytes or by reference via a secure URI.

- Data part: For structured data (for example, JSON tables or complex objects).

Example:

A hotel confirmation message might include:

- Text part: Your booking is confirmed.

- File part: a PDF receipt.

- Data part: JSON with hotel address and check-in code.

- Artifact

An artifact is the output produced by an agent during task execution. Artifacts are attached to the task and returned to the client, typically when the task completes (or incrementally, when streaming), and may be multimodal, containing documents, charts, images or structured results.

Example:

The flight agent sends an artifact containing the e-ticket as a PDF and the flight itinerary as structured data.
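The elements above can be sketched as plain Python data classes. This is a simplified, hypothetical model for illustration, not the official A2A SDK types; field names such as kind, message_id and task_id are assumptions loosely modeled on the specification's JSON shapes. The usage at the bottom rebuilds the hotel confirmation example with made-up values.

```python
import uuid
from dataclasses import dataclass, field
from typing import Any, Literal, Optional, Union


@dataclass
class TextPart:
    text: str
    kind: Literal["text"] = "text"


@dataclass
class FilePart:
    # A file travels either inline (base64 bytes) or by reference (URI).
    name: str
    mime_type: str
    uri: Optional[str] = None
    bytes_b64: Optional[str] = None
    kind: Literal["file"] = "file"


@dataclass
class DataPart:
    data: dict[str, Any]
    kind: Literal["data"] = "data"


Part = Union[TextPart, FilePart, DataPart]


@dataclass
class Message:
    role: Literal["user", "agent"]
    parts: list[Part]
    message_id: str = field(default_factory=lambda: str(uuid.uuid4()))


@dataclass
class Artifact:
    name: str
    parts: list[Part]


@dataclass
class Task:
    task_id: str
    state: str = "submitted"
    history: list[Message] = field(default_factory=list)
    artifacts: list[Artifact] = field(default_factory=list)


# Hypothetical hotel confirmation with one part of each type.
confirmation = Message(
    role="agent",
    parts=[
        TextPart("Your booking is confirmed."),
        FilePart(name="receipt.pdf", mime_type="application/pdf",
                 uri="https://hotel.example.com/receipts/123.pdf"),
        DataPart({"address": "Example Street 1, Berlin", "check_in_code": "A1B2"}),
    ],
)
```

Modeling parts as a tagged union (each with a `kind` field) mirrors how a receiver would dispatch on part type without inspecting the payload itself.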

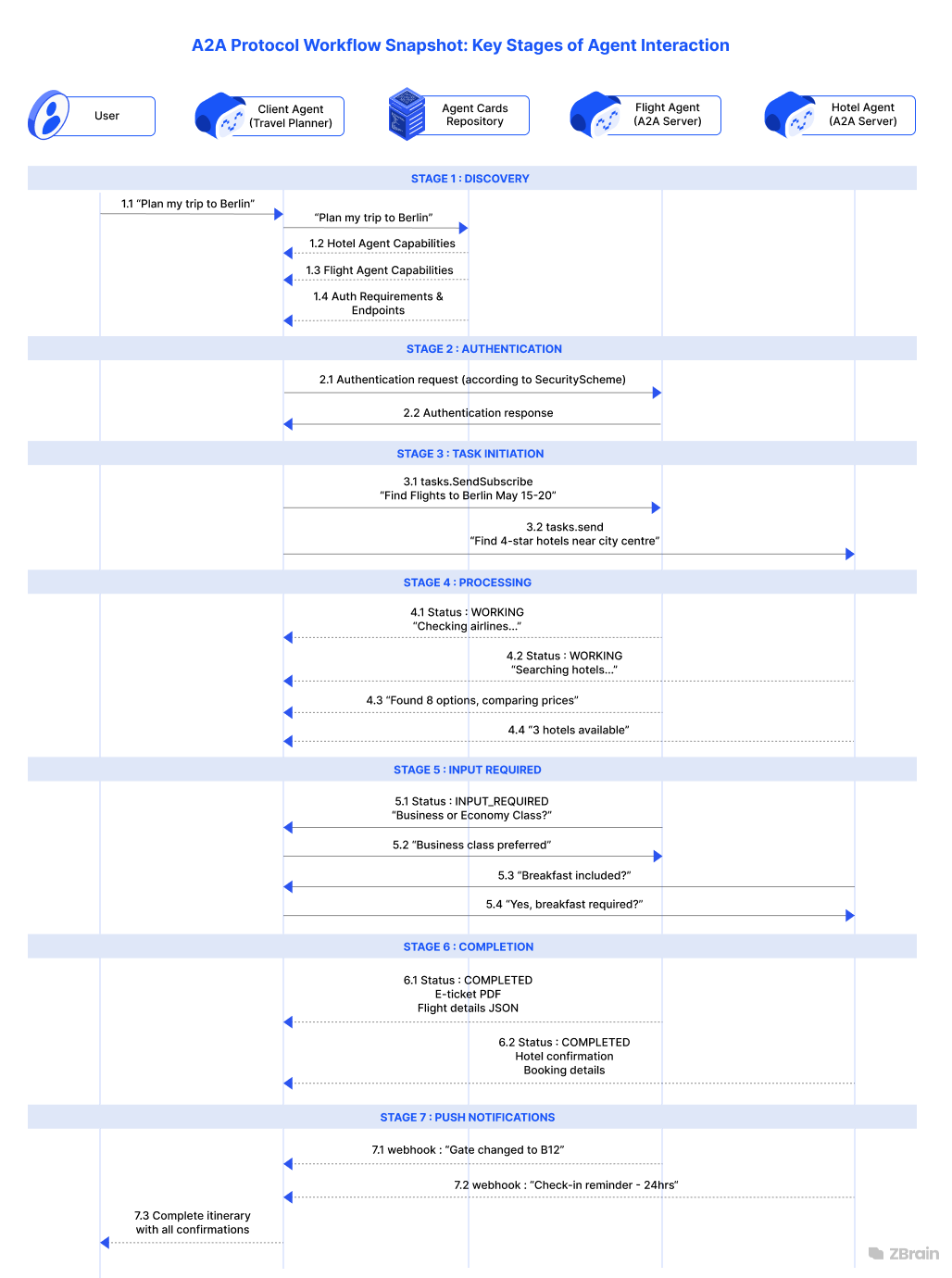

A2A communication flow: From discovery to delivery

1. Discovery

Client agents begin by discovering peers via the Agent Card, the standardized JSON metadata document that each remote agent exposes at a well-known URI (typically /.well-known/agent-card.json; earlier versions of the specification used /.well-known/agent.json).

The Agent Card includes key metadata:

- Identity: name, description and provider

- Service endpoint: URL for interaction

- Authentication: supported schemes (for example, Bearer tokens or OAuth 2.0)

- Capabilities: optional features such as streaming and push notifications

- Skills: specific tasks the agent can perform, with input/output modes and usage examples

This standardized discovery mechanism allows the client agent to assess which remote agents can fulfill a task, how to authenticate, and how to communicate effectively.

Example: A travel assistant agent retrieves Agent Cards for hotel, flight, and restaurant agents, learning which ones support live streaming, instant booking, or require additional verification.
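A minimal sketch of the discovery step, assuming the card has already been fetched from the agent's well-known URL. The card below is hypothetical and trimmed; its field names (name, url, capabilities, skills and so on) follow our reading of the A2A specification, and security-related fields are omitted because their exact shape has changed between specification versions. The helper functions are illustrative, not part of any SDK.

```python
import json
from typing import Optional
from urllib.parse import urljoin

# Hypothetical, trimmed Agent Card for a hotel agent.
HOTEL_CARD = json.loads("""
{
  "name": "Hotel Agent",
  "description": "Checks room availability and makes reservations.",
  "url": "https://hotel.example.com/a2a",
  "version": "1.0.0",
  "capabilities": {"streaming": true, "pushNotifications": false},
  "defaultInputModes": ["text/plain", "application/json"],
  "defaultOutputModes": ["application/json"],
  "skills": [
    {"id": "check_availability", "name": "Check room availability",
     "description": "Find rooms for given dates and room type.",
     "tags": ["hotel", "availability"]},
    {"id": "make_reservation", "name": "Make reservation",
     "description": "Book a specific room.", "tags": ["hotel", "booking"]}
  ]
}
""")


def card_url(base_url: str, path: str = "/.well-known/agent-card.json") -> str:
    """Build the discovery URL (older spec versions used /.well-known/agent.json)."""
    return urljoin(base_url, path)


def find_skill(card: dict, tag: str) -> Optional[dict]:
    """Return the first advertised skill carrying the given tag, if any."""
    for skill in card.get("skills", []):
        if tag in skill.get("tags", []):
            return skill
    return None


def supports_streaming(card: dict) -> bool:
    """True if the card advertises SSE streaming support."""
    return bool(card.get("capabilities", {}).get("streaming", False))
```

A travel assistant could call `find_skill(HOTEL_CARD, "booking")` to pick the reservation skill and `supports_streaming` to decide between message/send and message/stream.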

2. Task initiation

After discovery, the client authenticates using one of the schemes declared in the Agent Card and initiates a task using A2A methods:

- message/send: used for request/response interactions that expect a single reply.

- message/stream: (named tasks/sendSubscribe in earlier versions of the specification) used for long-running tasks that receive live updates via SSE, provided the agent supports streaming (as flagged in the Agent Card).

Example: For a quick flight search, the client uses message/send. For a hotel search involving multiple third-party lookups, the client uses message/stream to receive progress updates.
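The sketch below builds the JSON-RPC 2.0 request body a client might POST to an agent's endpoint for message/send or message/stream. The payload shape (a message with role, parts, messageId, and a kind field on each part) reflects our reading of recent specification versions; the helper name is hypothetical, and the HTTP call and authentication headers are deliberately left out.

```python
import json
import uuid
from typing import Optional


def build_message_request(text: str, method: str = "message/send",
                          task_id: Optional[str] = None) -> dict:
    """Build a JSON-RPC 2.0 request that sends one text message to a remote agent."""
    if method not in ("message/send", "message/stream"):
        raise ValueError(f"unsupported method: {method}")
    message = {
        "role": "user",
        "parts": [{"kind": "text", "text": text}],
        "messageId": str(uuid.uuid4()),
        "kind": "message",
    }
    if task_id is not None:
        # Continue an existing task, e.g. when answering an input-required prompt.
        message["taskId"] = task_id
    return {
        "jsonrpc": "2.0",
        "id": str(uuid.uuid4()),  # lets the client match the response to this request
        "method": method,
        "params": {"message": message},
    }


request = build_message_request("Find direct flights to Tokyo, May 15-20.")
body = json.dumps(request)  # sent as the HTTP body with Content-Type: application/json
```

Switching to streaming only changes the `method` argument; the client then reads the response as an SSE stream instead of a single JSON object.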

3. Processing and interaction

Once invoked, the remote A2A Server agent processes the task:

- Non-streaming mode: returns a completed result in the single JSON-RPC response (for example, a restaurant booking).

- Streaming mode: uses Server-Sent Events to send TaskStatusUpdateEvent and TaskArtifactUpdateEvent messages incrementally over an open HTTP connection, concluding when a final: true status is sent.

This allows clients to display live progress (“checking partner 2 of 3…”) or begin handling partial results before completion.
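A minimal sketch of how a client might consume such a stream, assuming each SSE `data:` line carries a JSON-RPC response whose `result` is a status-update or artifact-update event. The event shape used here (`kind`, `status.state`, `final`) is a simplified assumption; a production client would use an SSE library and handle reconnection.

```python
import json
from typing import Iterable, Iterator


def iter_sse_events(lines: Iterable[str]) -> Iterator[dict]:
    """Yield the result of each SSE event, stopping after a final status update."""
    data_lines: list[str] = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line.startswith("data:"):
            data_lines.append(line[len("data:"):].lstrip())
        elif line == "" and data_lines:
            # A blank line terminates one SSE event.
            payload = json.loads("\n".join(data_lines))
            data_lines = []
            result = payload.get("result", {})
            yield result
            if result.get("kind") == "status-update" and result.get("final"):
                return


# Hypothetical stream: progress, one partial artifact, then the final status.
sample_stream = [
    'data: {"jsonrpc": "2.0", "id": 1, "result": {"kind": "status-update", "status": {"state": "working"}, "final": false}}',
    "",
    'data: {"jsonrpc": "2.0", "id": 1, "result": {"kind": "artifact-update", "artifact": {"name": "options"}}}',
    "",
    'data: {"jsonrpc": "2.0", "id": 1, "result": {"kind": "status-update", "status": {"state": "completed"}, "final": true}}',
    "",
]

for event in iter_sse_events(sample_stream):
    print(event["kind"], event.get("status", {}).get("state", ""))
```

Because the parser is a generator, the client can render each progress update as soon as it arrives instead of waiting for the whole task.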

4. Input and clarification

Some tasks need additional input, often a human clarification, before they can continue. In such workflows, the server sets the task state to input-required, and the client replies with a follow-up message that references the same task ID. This supports interactive, multi-turn dialogue.

Example: A flight agent may ask, “Do you prefer nonstop flights?” and wait for the client’s response before continuing.

5. Completion

Each task moves through a defined lifecycle: it starts as submitted, moves to working, may pause in input-required while waiting for more information, and ends in a terminal state such as completed, failed or canceled. The final response includes artifacts, which are structured outputs such as booking details, documents or JSON results. Clients receive these artifacts and can feed them into the next steps of their workflow.
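The lifecycle can be modeled as a small state machine in which completed, failed and canceled are terminal. The transition table below is a simplified assumption based only on the states named above; the specification defines additional states and its own rules for valid transitions, and the class name is illustrative.

```python
TERMINAL = {"completed", "failed", "canceled"}

# Simplified transition table (an assumption, not the normative specification).
ALLOWED = {
    "submitted": {"working", "failed", "canceled"},
    "working": {"input-required", "completed", "failed", "canceled"},
    "input-required": {"working", "failed", "canceled"},
}


class TaskLifecycle:
    """Tracks one task's state and rejects transitions the table does not allow."""

    def __init__(self, task_id: str) -> None:
        self.task_id = task_id
        self.state = "submitted"
        self.history = ["submitted"]

    def transition(self, new_state: str) -> None:
        if self.state in TERMINAL:
            raise ValueError(f"task {self.task_id} is already {self.state}")
        if new_state not in ALLOWED.get(self.state, set()):
            raise ValueError(f"invalid transition {self.state} -> {new_state}")
        self.state = new_state
        self.history.append(new_state)


task = TaskLifecycle("task-42")
for step in ["working", "input-required", "working", "completed"]:
    task.transition(step)
print(" -> ".join(task.history))
# prints: submitted -> working -> input-required -> working -> completed
```

Rejecting transitions out of terminal states is what lets a client treat completed, failed or canceled as final and stop listening for updates.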

6. Optional push notifications

For tasks that outlast an SSE connection, or in mobile contexts, A2A supports asynchronous push notifications. If the Agent Card advertises support (capabilities.pushNotifications: true), the server sends event updates to a client-provided webhook URL. The client validates each notification (for example, by checking a shared token or a signed JWT) and then calls tasks/get to retrieve the full task status or artifacts.
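A minimal sketch of the client-side webhook check, assuming the client supplied a secret token when registering its webhook and the server echoes it in a request header. The header name, the `id` field in the notification body and the helper name are assumptions for illustration; confirm the exact mechanism in the specification version you implement, and prefer signed JWTs where the server supports them.

```python
import hmac
import json
import uuid
from typing import Optional

# Assumption: header in which the server echoes the client's registered token.
TOKEN_HEADER = "X-A2A-Notification-Token"


def handle_push_notification(headers: dict, body: bytes,
                             expected_token: str) -> Optional[dict]:
    """Validate a webhook call; return a tasks/get request, or None to reject it."""
    received = headers.get(TOKEN_HEADER, "")
    # Constant-time comparison avoids leaking the token through timing differences.
    if not hmac.compare_digest(received.encode(), expected_token.encode()):
        return None  # the webhook handler should answer with HTTP 401
    event = json.loads(body)
    task_id = event["id"]  # assumption: the notification carries the task ID as "id"
    # Fetch the authoritative task state instead of trusting the pushed payload alone.
    return {
        "jsonrpc": "2.0",
        "id": str(uuid.uuid4()),
        "method": "tasks/get",
        "params": {"id": task_id},
    }
```

Treating the notification only as a trigger, and re-reading the task via tasks/get, limits the damage a forged or replayed webhook could do.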

Summary of flow

The A2A protocol defines a clear, structured flow for agent-to-agent interaction:

- Discovery via Agent Card

- Task initiation using the appropriate RPC method

- Execution modes (synchronous or streaming)

- Human-in-the-loop exchanges for clarification

- Lifecycle tracking with structured state events

- Webhook-based updates for long-running or mobile-friendly tasks

Putting it all together: A2A in real life

Example scenario: A business trip planned with A2A

- Planning and decomposition: An enterprise AI assistant (the A2A client) receives a high-level request such as “Plan my trip to Berlin” and breaks it into actionable steps, starting with discovery.

- Discovery: The client fetches agent cards for flight, hotel and restaurant agents to see what each can do.

- Task initiation: The assistant invokes the relevant agents, for example, the flight agent to search for flights, the hotel agent to check room availability and the restaurant agent to make dinner reservations.

- Processing: Each specialist (A2A server) may request more information, process the task and send back real-time status updates.

- Completion: Each agent returns an artifact such as a booking confirmation or a list of restaurants. The assistant compiles these into a final itinerary for the user.

All coordination is accomplished through structured, secure and predictable protocol exchanges – provided that participating agents adhere to the A2A protocol, regardless of who built them or where they are hosted.

By formalizing how agents describe themselves, discover each other, authenticate securely and exchange rich information, Google’s A2A protocol lays the groundwork for a new era of composable, collaborative AI. With openness, security and modularity at its core, A2A enables enterprises to adopt intelligent agents at scale without cumbersome, hard-to-maintain point-to-point integrations.

Streamline your operational workflows with ZBrain AI agents designed to address enterprise challenges.

Key design principles of Google’s Agent2Agent (A2A) protocol

The emergence of autonomous AI agents has created both opportunities and complexities for modern enterprises. Google’s Agent-to-Agent (A2A) protocol addresses this by providing an open architecture for secure, scalable and dynamic agent collaboration. What sets its design apart:

Agent-centric collaboration: Opaque, modular and secure

A2A is fundamentally agent-centric. Agents act as independent collaborators, not just tools. The protocol enables agents to exchange information across modalities such as text, structured data, images or files without sharing internal algorithms, proprietary code or memory. This opaque execution model means:

- Agents expose only their skills and interfaces, never internal logic.

- Proprietary intellectual property remains protected, even in cross-vendor collaboration.

- Organizations can flexibly integrate or swap agents, as long as the interface is compliant.

Result: safe, modular and future-proof multi-agent collaboration for everything from cross-team projects to cross-vendor enterprise automation.

Built on web standards: Familiar and flexible

A2A is built on proven web protocols and standards:

- HTTP(S): reliable, secure communication layer

- JSON-RPC 2.0: language-agnostic, lightweight request/response messaging

- Server-sent events (SSE): firewall-friendly streaming for real-time updates and partial results

Result: developers use familiar patterns, libraries and enterprise infrastructure, reducing barriers to adoption and integration.

Secure by default: Enterprise-grade security

Security is integral to A2A:

- Authentication and authorization: Each agent declares supported schemes (for example, OAuth 2.0, API keys or OpenID Connect) in its agent card, enabling secure identity verification and fine-grained access control.

- TLS encryption: All communication is encrypted using HTTPS to ensure confidentiality, integrity and authenticity.

- Governance integration: A2A supports auditing, access control and trust boundaries through its standard metadata and token-based security model, making it compatible with enterprise policy frameworks.

Result: agents rely on standard, well-understood security mechanisms rather than custom security code, so enterprises can establish trust in agent interactions across complex environments.

Async-first and long-running task support

A2A is designed for the real world, where not every request is instant. It intentionally supports asynchronous workflows to accommodate long-running tasks such as API calls to third-party services, human-in-the-loop approvals or multistep reasoning.

- Asynchronous patterns: Clients can launch tasks and receive real-time updates or push notifications, whether jobs take seconds or hours.

- Task lifecycle management: Every task is uniquely tracked through its lifecycle states.

- Reduced polling overhead: Streaming and push notifications reduce the need for repeated polling, minimizing wasted resources while providing up-to-date feedback.

Result: A2A handles quick queries and complex, long-running workflows, keeping everything coordinated and auditable.

Modality-agnostic and multichannel

A2A supports rich, multimodal communication between agents. Each message or artifact can include multiple typed parts – such as text, structured data, files or images – allowing agents to collaborate across diverse content formats. Through content negotiation, agents can dynamically adapt to supported formats. The protocol is extensible, enabling future support for advanced modalities such as embedded UI components or streamed media.

Result: agents collaborate using the richest possible information, always in formats they both understand.

Security and privacy in Google’s Agent2Agent (A2A) protocol

A2A is architected with security as a foundational principle – ensuring trusted, compliant and controlled collaboration between autonomous agents. As organizations turn to agent-based automation for everything from customer support to supply chain management, robust security and privacy become non-negotiable. Google’s Agent-to-Agent (A2A) protocol addresses these challenges directly, providing a comprehensive, enterprise-ready security framework that covers everything from discovery to task execution.

Enterprise-grade authentication and role-based authorization

A2A builds on proven web standards, integrating flexible authentication mechanisms that enterprises already trust:

- OAuth 2.0 enables delegated access. For example, a travel booking agent can act on behalf of a user to access a flight API, but only within defined permissions.

- API keys are well suited for service-to-service communication within a controlled environment, such as between microservices in a corporate network.

- JSON Web Tokens (JWTs) allow stateless, verifiable information exchange. For example, agents across multiple subsidiaries of a large corporation can verify each other’s requests without sharing passwords or maintaining session state.

- Custom schemes allow organizations to integrate their authentication setups seamlessly with existing identity and access management (IAM) tools.

Role-based authorization further refines access, letting organizations enforce policies such as “only HR agents can approve reimbursements” or “data analytics agents cannot modify customer records.” By aligning agent roles with business logic, A2A helps enforce the principle of least privilege.
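Role-based, least-privilege authorization can be sketched as a deny-by-default lookup from an agent's role to the skills it may invoke. The roles, skill IDs and the idea of reading the role from an already-verified token claim are hypothetical; in our reading, A2A leaves the authorization policy itself to the implementing server.

```python
# Hypothetical policy: which skills each authenticated agent role may invoke.
POLICY: dict[str, frozenset[str]] = {
    "hr-agent": frozenset({"approve_reimbursement", "view_expense_report"}),
    "analytics-agent": frozenset({"read_customer_records", "run_report"}),
}


def is_authorized(role: str, skill_id: str) -> bool:
    """Deny by default: unknown roles and unlisted skills are rejected."""
    return skill_id in POLICY.get(role, frozenset())


def authorize(claims: dict, skill_id: str) -> None:
    """Raise if the caller's role may not invoke the requested skill.

    Assumption: `claims` were extracted from a token that has already been verified.
    """
    role = claims.get("role", "")
    if not is_authorized(role, skill_id):
        raise PermissionError(f"role {role!r} may not invoke {skill_id!r}")
```

Listing only what each role may do, rather than what it may not, keeps new skills locked down until someone deliberately grants access.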

Data privacy protection: Selective sharing by design

Data privacy is core to A2A’s architecture:

- Selective sharing: Agents exchange only the minimum information required for a task. For example, a flight agent receives only a passenger’s itinerary, not their full profile or payment history.

- Encrypted communications: All A2A messages are transmitted over HTTPS/TLS, protecting against unauthorized access and tampering.

- Data minimization: Task objects and message parts include only what is needed for a transaction, reducing exposure of personally identifiable information (PII).

- PII controls: Agents can enforce policies for handling PII, ensuring compliance with data protection regulations.

Example: When a restaurant agent returns a reservation artifact to a planner AI, only booking confirmation details (time and table number) are shared – no credit card or unrelated user data.
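Selective sharing can be enforced on the sending side with an explicit allowlist of fields per remote skill, so anything not listed never leaves the client. The profile fields, values and skill names below are hypothetical.

```python
from typing import Any

# Hypothetical allowlists: the only fields each remote skill is allowed to receive.
SHARE_ALLOWLIST: dict[str, tuple[str, ...]] = {
    "book_flight": ("passenger_name", "origin", "destination", "dates"),
    "reserve_table": ("party_size", "time"),
}


def minimize(record: dict[str, Any], skill_id: str) -> dict[str, Any]:
    """Return only allowlisted fields; unknown skills receive nothing."""
    allowed = SHARE_ALLOWLIST.get(skill_id, ())
    return {key: record[key] for key in allowed if key in record}


# Hypothetical user profile held by the planner agent.
profile = {
    "passenger_name": "A. Traveler",
    "origin": "FRA",
    "destination": "BER",
    "dates": ["2025-05-15", "2025-05-20"],
    "payment_card": "stored-in-vault",
    "loyalty_history": ["..."],
}

flight_payload = minimize(profile, "book_flight")
```

An allowlist fails safe: a newly added profile field stays private until someone explicitly decides a skill needs it.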

Intellectual property protection: Opaque, black-box agents

A2A enables collaboration without requiring agents to reveal internal workings:

- Opaque execution: Agents describe their capabilities but do not disclose how tasks are completed. Proprietary algorithms, business logic and data remain private, even during cross-vendor collaboration.

- Capability-based exposure: Only advertised functions are accessible; internal tools or state remain hidden unless intentionally exposed.

Example: A travel analytics agent may advertise the capability to generate a summary report of trip expenses for a given itinerary. Other agents can submit trip details and receive a cost breakdown, but they never see the agent’s algorithms, data sources or pricing agreements. To the ecosystem, it functions as a black-box reporting service – ideal for modular integration while protecting sensitive financial logic.

Defense in depth: Threat modeling, security testing and auditing

A2A security is not “set and forget.” The protocol and its implementations undergo continuous assessment:

- Threat modeling: Reviews identify attack vectors, such as agent card spoofing or artifact tampering, drawing on frameworks like MAESTRO for agentic systems.

- Penetration testing: Rigorous testing validates authentication, authorization and data handling under real-world conditions.

- Auditability: Every task request, message and response can be logged and tracked, creating a unified audit trail for compliance and risk management.
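One common way to make such an audit trail tamper-evident is hash chaining: each entry stores the hash of the previous one, so editing an earlier record breaks verification. This is a generic technique swapped in for illustration, not something the A2A protocol defines; a production system would also sign entries or ship them to write-once storage. The event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON (sorted keys) so the same event always hashes the same way.
    body = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode()).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, event: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        self.entries.append(
            {"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)}
        )

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"task_id": "task-1", "method": "message/send", "caller": "planner-agent"})
log.append({"task_id": "task-1", "state": "completed"})
```

Hash chaining detects tampering but does not prevent it, which is why it is usually paired with restricted write access to the log store.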

Security best practices for A2A deployments

Key practices to ensure safe A2A deployments include:

- Endpoint security: All A2A endpoints, including agent card files, must be served over HTTPS with strong TLS configurations (ideally TLS 1.3) and regularly updated certificates.

- Granular access controls: Define agent roles and capabilities precisely. For example, restrict sensitive data analysis skills to specific authorized agents.

- Input validation and sanitization: All incoming messages, URIs and file uploads must be strictly validated to prevent injection attacks or schema violations.

- Principle of least privilege: Assign agents only the permissions needed for their advertised functions.

- Rate limiting and denial-of-service protection: Enforce per-client and per-IP connection limits, rate limiting and session timeouts to defend against denial-of-service and brute-force attacks.

- Secure logging and monitoring: Maintain tamper-proof security logs and records. Use alerts and anomaly detection to flag suspicious behavior, such as repeated failed authentications or unusual task patterns.

- Dependency management: Scan all open-source dependencies for vulnerabilities. Maintain a software bill of materials (SBOM) and apply regular updates.

- Incident response: Establish clear procedures for detecting, mitigating and recovering from breaches.
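Per-client rate limiting is often implemented as a token bucket: each client gets a bucket that refills at a steady rate and permits short bursts. The sketch below is a generic, single-process illustration; the rate and burst values are hypothetical, the injectable clock exists only to make it testable, and a real deployment would usually enforce limits at a gateway or with shared storage.

```python
import time
from typing import Callable


class TokenBucket:
    def __init__(self, rate_per_sec: float, burst: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_sec
        self.capacity = float(burst)
        self.clock = clock
        self.tokens = float(burst)  # start full so a new client can burst
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at the burst size.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class RateLimiter:
    """One bucket per client identity (for example, the authenticated agent ID)."""

    def __init__(self, rate_per_sec: float, burst: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate, self.burst, self.clock = rate_per_sec, burst, clock
        self.buckets: dict[str, TokenBucket] = {}

    def allow(self, client_id: str) -> bool:
        bucket = self.buckets.get(client_id)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.burst, self.clock)
            self.buckets[client_id] = bucket
        return bucket.allow()
```

Keying buckets by authenticated identity rather than IP address alone keeps one noisy agent from exhausting capacity for others behind the same gateway.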

A2A protocol security controls and mechanisms

| Control / Mechanism | Description | Example |
| --- | --- | --- |
| Agent Card Protection | Ensures agent capability metadata is securely hosted, authenticated and shielded from enumeration or abuse. | An agent card is hosted at a predictable, secure URL such as https://hotel.example.com/.well-known/agent.json, with the endpoint protected by rate limiting to prevent abuse. |
| Authentication Declaration | Each agent card explicitly lists its supported authentication schemes, clearly informing client agents of the security requirements for access. | An agent card specifies that it supports OAuth 2.0 for user-delegated tasks and API keys for internal service-to-service requests. |
| Transport Security | Mandates the use of HTTPS (TLS) for all A2A protocol traffic, including API calls, server-sent event (SSE) streams and push notifications. | All connections between a travel planner agent and a hotel booking agent are encrypted using HTTPS/TLS, preventing eavesdropping. |
| Digital Signatures & Nonce Use | Defends against tampering, spoofing and replay attacks by cryptographically signing key payloads and using unique, single-use values (nonces). | An agent card is signed to prove its authenticity; a task request includes a unique nonce to prevent replay. |
| Push Notification Security | Protects asynchronous webhook channels by requiring signed payloads and strict endpoint validation to block tampering or server-side request forgery (SSRF) attacks. | A restaurant agent sends a booking confirmation via a webhook, with the payload signed as a JWT. The planner agent verifies the signature before accepting the update. |
| Streaming (SSE) Security | Authenticates and authorizes every SSE session and implements quotas and keep-alive checks to guard against resource exhaustion. | A flight status agent begins streaming updates only after validating the client’s authentication token and terminates idle stream connections promptly. |
| Push Notification Best Practices | Enforces secure and resilient webhook delivery by mandating HTTPS, validating signatures and implementing retry/backoff logic to prevent abuse. | If a webhook notification for a hotel booking fails, the server retries using an exponential backoff schedule and revalidates the JWT signature on each attempt. |
| Connection Management | Applies connection quotas and timeouts, and drops noncritical events for lagging clients to maintain server stability and prevent resource exhaustion. | A hotel agent is configured to limit each client to a maximum of 10 concurrent SSE streams and automatically closes any session idle for more than 30 seconds. |
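The “Digital Signatures & Nonce Use” control can be illustrated with a shared-key HMAC-SHA256 signature over the payload, plus a single-use nonce and a timestamp window. This is a simplified stand-in: real deployments would more likely use asymmetric signatures (for example, JWTs signed with keys the sender publishes), and the envelope field names and five-minute window are assumptions.

```python
import hashlib
import hmac
import json
import secrets
import time
from typing import Optional

MAX_SKEW_SECONDS = 300  # assumption: accept messages up to 5 minutes old


def _canonical(envelope: dict) -> bytes:
    # Sorted keys and fixed separators so signer and verifier hash identical bytes.
    return json.dumps(envelope, sort_keys=True, separators=(",", ":")).encode()


def sign(payload: dict, key: bytes, now: Optional[float] = None) -> dict:
    envelope = {
        "payload": payload,
        "nonce": secrets.token_hex(16),  # single-use value
        "ts": int(time.time() if now is None else now),
    }
    envelope["sig"] = hmac.new(key, _canonical(envelope), hashlib.sha256).hexdigest()
    return envelope


class Verifier:
    def __init__(self, key: bytes) -> None:
        self.key = key
        self.seen_nonces: set[str] = set()  # a real system would expire old nonces

    def verify(self, envelope: dict, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        unsigned = {k: v for k, v in envelope.items() if k != "sig"}
        expected = hmac.new(self.key, _canonical(unsigned), hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected.encode(), str(envelope.get("sig", "")).encode()):
            return False  # tampered, or signed with a different key
        if abs(now - envelope["ts"]) > MAX_SKEW_SECONDS:
            return False  # stale message or excessive clock skew
        if envelope["nonce"] in self.seen_nonces:
            return False  # replay of a message already accepted
        self.seen_nonces.add(envelope["nonce"])
        return True
```

The timestamp window bounds how long nonces must be remembered: anything older than the window is rejected on age alone.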

Google’s Agent-to-Agent (A2A) protocol provides a secure, privacy-first foundation for multi-agent automation in demanding enterprise environments. By adopting best practices – from role-based authorization to digital signatures and continuous auditing – organizations can deploy agentic solutions that deliver business value without compromising trust, privacy or compliance.


Best practices for adopting the A2A protocol

Adopting the Agent-to-Agent (A2A) protocol is more than a technical upgrade – it represents a shift toward scalable, collaborative and future-proof AI systems. The following best practices help organizations get the most from A2A implementations:

- Design agents as modular, domain-specific services
  - Keep it focused: Each agent should specialize in a clearly defined function, such as flight booking or expense reporting. This makes agents reusable and easier to maintain.
  - Use clear agent cards: Publish a well-documented agent card for every agent, detailing its skills, endpoints and authentication requirements. Think of this as the agent's public API contract.
- Embrace open standards and asynchronous communication
  - Leverage familiar technologies: Build on HTTP, JSON-RPC and SSE to ensure compatibility and speed up onboarding.
  - Plan for nonblocking workflows: Use A2A streaming and push notification features to handle long-running or multistep processes without blocking other operations.
- Prioritize security and governance
  - Secure by default: Enforce HTTPS (TLS) for all agent communications. Never send sensitive data unencrypted.
  - Adopt strong authentication: Require industry-standard authentication (OAuth 2.0, OIDC, JWT) as specified in each agent card. Pass credentials in standard HTTP headers, and never embed them or other confidential data directly in message payloads.
  - Granular authorization: Apply least-privilege principles, granting agents access only to the skills and data needed for each task.
  - Comprehensive monitoring: Use distributed tracing and logging to monitor task flow and audit inter-agent activity for compliance and debugging.
  - Continuous threat modeling: Apply frameworks such as MAESTRO to identify, document and mitigate vulnerabilities, particularly in agent discovery, authentication and task execution. Review and update the security posture regularly as agents and workflows evolve.
- Plan for robustness and scalability
  - Error handling: Agents should handle communication failures and unexpected inputs gracefully, returning clear error messages and recovery options.
  - Test and monitor: Test agents both in isolation and as part of full workflows. Monitor performance and resource use to ensure reliability at scale.
  - Scale horizontally: Design for high availability using load balancing, horizontal scaling and caching of frequently accessed resources.
- Foster a collaborative, interoperable ecosystem
  - Promote interoperability: Choose open standards to avoid vendor lock-in. Collaborate with partners and vendors that support A2A for long-term flexibility.
  - Leverage community SDKs and tools: Use and contribute to open-source A2A SDKs, agent development kits and reference implementations. These tools accelerate adoption and help maintain compatibility with the evolving standard.
  - Engage with relevant communities: Track updates, share feedback and align with the latest features and practices. Community engagement helps keep implementations future-ready and aligned with industry direction.
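Here is a minimal Python sketch that makes the agent-card and least-privilege practices concrete.

- The card fields (`name`, `description`, `url`, `version`, `capabilities`, `skills`, `defaultInputModes`, `defaultOutputModes`) follow the general shape of the public A2A AgentCard. Field names differ between specification versions, especially in the security section, so treat them as illustrative.
- The agent URL and skill IDs are hypothetical.
- The `SKILL_SCOPES` mapping and the `authorize` helper are also hypothetical. A2A leaves authorization policy to each implementation.

```python
from __future__ import annotations

import json

# Illustrative agent card for a hypothetical flight-booking agent.
# Field names follow the general shape of the A2A AgentCard; check the
# specification version you target before relying on any of them.
AGENT_CARD = {
    "name": "Flight Booker",
    "description": "Searches and books flights for corporate travel.",
    "url": "https://agents.example.com/flight-booker",  # hypothetical endpoint
    "version": "1.0.0",
    "capabilities": {"streaming": True, "pushNotifications": True},
    "defaultInputModes": ["text/plain"],
    "defaultOutputModes": ["application/json"],
    # OpenAPI-style security scheme: callers present a JWT bearer token.
    "securitySchemes": {
        "bearer": {"type": "http", "scheme": "bearer", "bearerFormat": "JWT"}
    },
    "security": [{"bearer": []}],
    "skills": [
        {"id": "search_flights", "name": "Search flights",
         "description": "Find flights by route and date.", "tags": ["travel"]},
        {"id": "book_flight", "name": "Book flight",
         "description": "Reserve a seat on a selected flight.", "tags": ["travel"]},
    ],
}

# Hypothetical least-privilege policy: the OAuth scope each skill requires.
SKILL_SCOPES = {
    "search_flights": "flights:read",
    "book_flight": "flights:write",
}


def authorize(skill_id: str, granted_scopes: set[str]) -> bool:
    """Allow a call only if the skill is published and its scope was granted.

    Unknown skills, or skills without a mapped scope, are denied by default.
    """
    published = {skill["id"] for skill in AGENT_CARD["skills"]}
    required = SKILL_SCOPES.get(skill_id)
    return skill_id in published and required is not None and required in granted_scopes


# Serialized form of the card, as it would be served to client agents.
card_json = json.dumps(AGENT_CARD, indent=2)
```

A token scoped only to `flights:read` can search but cannot book. In practice the JSON card is served from a well-known path on the agent's host, for example `/.well-known/agent.json` in early versions of the specification.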

By following these practices, organizations can build secure, resilient and interoperable agentic solutions with A2A – setting the stage for seamless automation and smarter, connected workflows.

Core benefits of adopting A2A in the enterprise

Beyond its technical mechanics, the Agent-to-Agent (A2A) protocol establishes a foundation for the next era of enterprise AI. By standardizing how independent AI agents communicate, A2A delivers benefits across architecture, operations and business strategy.

Architectural agility and developer velocity

- Modular, composable architecture: A2A enforces a decoupled-by-design approach. Instead of building monolithic, tightly coupled AI applications, organizations can create portfolios of small, specialized agents, each responsible for a specific task. These agents become reusable building blocks, enabling rapid assembly of new business capabilities as needs evolve.
- Faster, lower-overhead integration: Each agent publishes a public agent card that defines its interface and capabilities without revealing internal logic. This clear separation reduces integration complexity, allowing developers to focus on high-value tasks. With A2A's support for standard web technologies such as HTTP and JSON-RPC, agents can be built in any modern language, making cross-team and cross-platform collaboration seamless.
- Simplified maintenance and evolution: Because agents are loosely coupled, any single agent can be updated, patched or replaced with minimal impact on the system, as long as its public contract remains stable. This minimizes technical debt and allows the architecture to adapt quickly to new technologies.
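A2A requests are JSON-RPC 2.0 messages sent over HTTP, so any language with a JSON library can talk to an agent. The Python sketch below builds a task request.

- It uses the `tasks/send` and `tasks/sendSubscribe` method names and the text-part message shape from early versions of the A2A specification.
- Later revisions rename the methods (for example to `message/send`) and adjust the payload, so verify against the version you target.
- The helper name `build_task_request` and the sample text are hypothetical.

```python
from __future__ import annotations

import json
import uuid


def build_task_request(text: str, task_id: str | None = None,
                       streaming: bool = False) -> dict:
    """Build a JSON-RPC 2.0 request asking a remote agent to work on a task.

    Uses the early A2A method names: tasks/send (single response) and
    tasks/sendSubscribe (stream of updates over SSE).
    """
    return {
        "jsonrpc": "2.0",
        "id": str(uuid.uuid4()),  # JSON-RPC request ID, unique per call
        "method": "tasks/sendSubscribe" if streaming else "tasks/send",
        "params": {
            # A2A task ID: reuse it for follow-up turns on the same task.
            "id": task_id or str(uuid.uuid4()),
            "message": {
                "role": "user",
                "parts": [{"type": "text", "text": text}],
            },
        },
    }


request = build_task_request("Find a flight from SFO to JFK on 12 May")
body = json.dumps(request)  # payload to POST to the URL in the agent card
```

The HTTP POST itself is left to whatever HTTP client a team already uses. That request carries the credentials declared in the agent card in its headers.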

Operational resilience, scale and governance

- Enterprise-scale automation: A2A is engineered for orchestrating complex, asynchronous workflows, whether tasks complete in seconds or require days and multiple handoffs. This enables automation of sophisticated value chains – from order fulfillment and logistics to regulatory compliance – at scale.
- Inherent resilience and fault isolation: The multi-agent architecture isolates failures. If one agent becomes unavailable or returns an error, orchestrators can retry tasks, invoke fallback agents or degrade service gracefully. This increases uptime for critical processes and reduces single points of failure.
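The retry, fallback and graceful-degradation behavior described above can be sketched as a small orchestrator. This is an illustrative Python pattern, not an A2A SDK feature.

- Each agent is modeled as a plain callable that raises on failure.
- The primary agent is retried a fixed number of times, then each fallback agent is tried once.
- If every agent fails, the orchestrator returns a degraded default.
- The names `call_with_fallback` and `AgentError`, the stub agents and the retry count are hypothetical.

```python
from __future__ import annotations

from typing import Callable


class AgentError(Exception):
    """Raised by an agent call that fails or times out (hypothetical)."""


AgentCall = Callable[[str], str]


def call_with_fallback(task: str, primary: AgentCall, fallbacks: list[AgentCall],
                       retries: int = 2, degraded: str = "DEGRADED") -> tuple[str, str]:
    """Try the primary agent (with retries), then each fallback agent once.

    Returns (result, source) so callers can log which path served the task.
    """
    for _ in range(1 + retries):
        try:
            return primary(task), "primary"
        except AgentError:
            continue  # a real orchestrator would wait with backoff here
    for index, fallback in enumerate(fallbacks):
        try:
            return fallback(task), f"fallback-{index}"
        except AgentError:
            continue
    # Every agent failed: degrade gracefully instead of failing the whole workflow.
    return degraded, "degraded"


def unavailable_agent(task: str) -> str:
    raise AgentError("inventory agent unreachable")


def backup_agent(task: str) -> str:
    return f"backup handled: {task}"


result, source = call_with_fallback("check stock for SKU-42", unavailable_agent, [backup_agent])
```

Here the primary agent fails all three attempts, so the backup agent serves the task. The returned `source` tells monitoring which path was taken.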

Strategic advantage and future-proofing

- Enterprise-grade security by design: A2A is designed to be secure by default. It requires encrypted transport (HTTPS/TLS) in production and lets each agent declare standard authentication schemes (such as OAuth 2.0, OIDC and JWT bearer tokens) in its agent card. It also leaves room for granular, least-privilege authorization for every agent and task. Security teams gain visibility and control at every step.
- Open, vendor-neutral standard: As an open protocol, A2A prevents vendor lock-in. Agents can interoperate across any cloud, framework or provider, protecting AI investments and enabling best-of-breed adoption.
- Internal marketplace of AI capabilities: A2A lets teams publish agents as discoverable, reusable services. This reduces redundant development, accelerates innovation and enables enterprise-wide reuse of capabilities.
- Platform for new revenue and business models: A2A enables secure collaboration not only within organizations but also with trusted external partners or customers. This supports hyper-integrated digital services, new customer experiences and business models built on composable workflows.
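At its simplest, an internal marketplace is a searchable collection of published agent cards. The Python sketch below shows that search.

- It assumes the organization keeps already-fetched cards in an in-memory list.
- The catalog, agent names, URLs and tags are hypothetical.
- A production catalog would fetch cards over HTTPS and cache them.

Finding a reusable capability then becomes a filter over each card's `skills` and their `tags`:

```python
from __future__ import annotations

# Hypothetical catalog of agent cards already fetched from each agent's host.
CATALOG = [
    {"name": "Flight Booker", "url": "https://agents.example.com/flights",
     "skills": [{"id": "book_flight", "tags": ["travel", "booking"]}]},
    {"name": "Expense Reporter", "url": "https://agents.example.com/expenses",
     "skills": [{"id": "file_expense", "tags": ["finance", "travel"]}]},
    {"name": "Hotel Agent", "url": "https://agents.example.com/hotels",
     "skills": [{"id": "book_room", "tags": ["travel", "booking"]}]},
]


def find_agents(tag: str, catalog: list[dict] | None = None) -> list[tuple[str, str]]:
    """Return (agent name, skill id) for every published skill carrying `tag`."""
    cards = CATALOG if catalog is None else catalog
    return [
        (card["name"], skill["id"])
        for card in cards
        for skill in card.get("skills", [])
        if tag in skill.get("tags", [])
    ]
```

Because the search only reads the public card, teams can reuse an agent without knowing anything about its internal implementation.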

Business benefits and competitive differentiation

- Accelerated time to market: A2A's modularity allows product and innovation teams to compose new offerings from existing agent building blocks. This agility lets them respond to customer needs and regulatory changes faster than monolithic platforms allow.
- Reduced integration and vendor costs: By providing a universal protocol for agent communication, A2A reduces the need for custom integration projects and reliance on proprietary middleware. This lowers both deployment and long-term maintenance costs.
- Enhanced ecosystem and partnership potential: A2A makes it easier to connect with partners, suppliers and customers. Organizations can selectively expose agent capabilities for ecosystem collaboration, forging new value chains and business models without rewriting core systems.


Endnote

As enterprise AI matures, the ability to orchestrate secure, modular and interoperable agent ecosystems is becoming a business imperative. Google’s Agent-to-Agent (A2A) protocol stands out as a strategic enabler – bringing composability, resilience and open governance to the core of enterprise automation. By embracing agent-centric patterns and open standards, organizations can accelerate innovation, reduce integration risk and scale their AI initiatives effectively.

Whether an organization is just beginning its agentic journey or preparing to future-proof complex, multi-team workflows, A2A provides a clear path forward. As adoption accelerates and the ecosystem expands, early adopters will be positioned not only to keep pace with change but to lead it.

The next era of enterprise AI will be connected, open and scalable – driven by intelligent agents working in tandem. With A2A and complementary standards such as the Model Context Protocol (MCP), now is the time to architect for what comes next.

Streamline your AI operations with ZBrain. Contact us to explore how our platform accelerates integration and supports the next wave of open, interoperable agent standards.


Author’s Bio

An early adopter of emerging technologies, Akash leads innovation in AI, driving transformative solutions that enhance business operations. With his entrepreneurial spirit, technical acumen and passion for AI, Akash continues to explore new horizons, empowering businesses with solutions that enable seamless automation, intelligent decision-making, and next-generation digital experiences.


Frequently Asked Questions

What is the Agent-to-Agent (A2A) protocol, and why does it matter for enterprise AI?

How does A2A support interoperability across vendors, clouds and frameworks?

What are the key stages of the A2A communication flow, from agent discovery to task completion?

The A2A protocol structures secure, collaborative workflows between agents in the following stages:

- Discovery: The client agent retrieves agent cards (metadata files) from remote agents to understand their capabilities, endpoints and security requirements before starting work.
- Task initiation: After authenticating as required by the agent card, the client sends a task request to the remote agent's endpoint. It uses either `tasks/send` for synchronous work or `tasks/sendSubscribe` for long-running, streaming interactions.
- Processing and interaction: The remote agent processes the task. In non-streaming mode, it returns a single result via HTTP. In streaming mode, it sends live updates and artifacts back to the client through SSE.
- Input and clarification: If additional information is needed, the remote agent requests clarification. The client responds with context tied to the unique task ID, enabling multi-turn interaction.
- Completion: The task concludes in a terminal state (completed, failed or canceled). The client receives the final artifacts, such as confirmations, tickets or structured data.
- Optional push notifications: For asynchronous workflows, remote agents can send updates to client webhooks, so the client does not need to keep a connection open to receive them.

This structured flow ensures reliable task handoffs, clear status tracking and modular automation across diverse agent ecosystems.
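The task lifecycle described above can be modeled as a small state machine.

- The state names (`submitted`, `working`, `input-required`, `completed`, `failed`, `canceled`) correspond to task states in the A2A specification.
- Some versions of the specification define additional states, which are omitted here.
- The transition table is a simplified assumption for illustration, not copied from the specification.

```python
from __future__ import annotations

from enum import Enum


class TaskState(str, Enum):
    SUBMITTED = "submitted"
    WORKING = "working"
    INPUT_REQUIRED = "input-required"
    COMPLETED = "completed"
    FAILED = "failed"
    CANCELED = "canceled"


TERMINAL = {TaskState.COMPLETED, TaskState.FAILED, TaskState.CANCELED}

# Simplified, assumed transition table for non-terminal states.
ALLOWED = {
    TaskState.SUBMITTED: {TaskState.WORKING, TaskState.FAILED, TaskState.CANCELED},
    TaskState.WORKING: {TaskState.INPUT_REQUIRED, TaskState.COMPLETED,
                        TaskState.FAILED, TaskState.CANCELED},
    TaskState.INPUT_REQUIRED: {TaskState.WORKING, TaskState.FAILED, TaskState.CANCELED},
}


class Task:
    def __init__(self, task_id: str) -> None:
        self.task_id = task_id  # unique ID that ties multi-turn messages together
        self.state = TaskState.SUBMITTED
        self.history = [self.state]

    def transition(self, new_state: TaskState) -> None:
        """Move to a new state, rejecting illegal moves and moves out of terminal states."""
        if self.state in TERMINAL:
            raise ValueError(f"task {self.task_id} is already {self.state.value}")
        if new_state not in ALLOWED[self.state]:
            raise ValueError(f"illegal transition {self.state.value} -> {new_state.value}")
        self.state = new_state
        self.history.append(new_state)


# A multi-turn flow: the agent pauses for clarification, then finishes.
task = Task("task-123")
for step in (TaskState.WORKING, TaskState.INPUT_REQUIRED,
             TaskState.WORKING, TaskState.COMPLETED):
    task.transition(step)
```

Rejecting any change after a terminal state mirrors the flow above. Once a task is completed, failed or canceled, the client treats its artifacts as final.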

How does A2A enable resilience, scalability and robust operations?

What security and compliance controls are built into A2A?

What is the long-term value and ROI of adopting A2A?

How do we get started with ZBrain for AI development?

To begin your AI journey with ZBrain, simply reach out to us at hello@zbrain.ai or complete the inquiry form available on our website. Our dedicated team will work closely with you to assess your current AI development environment, identify the most impactful opportunities for AI integration, and design a tailored pilot plan that aligns with your organization’s goals.
