Why enterprise AI pilots fail to scale and how to address it

Every major organization has declared AI a top strategic priority. Budgets have been allocated, mandates issued, roadmaps published, and pilots launched. And yet, for the overwhelming majority, the needle has not moved where it matters most: in production, at scale, embedded in the workflows and decision systems that drive actual business outcomes.

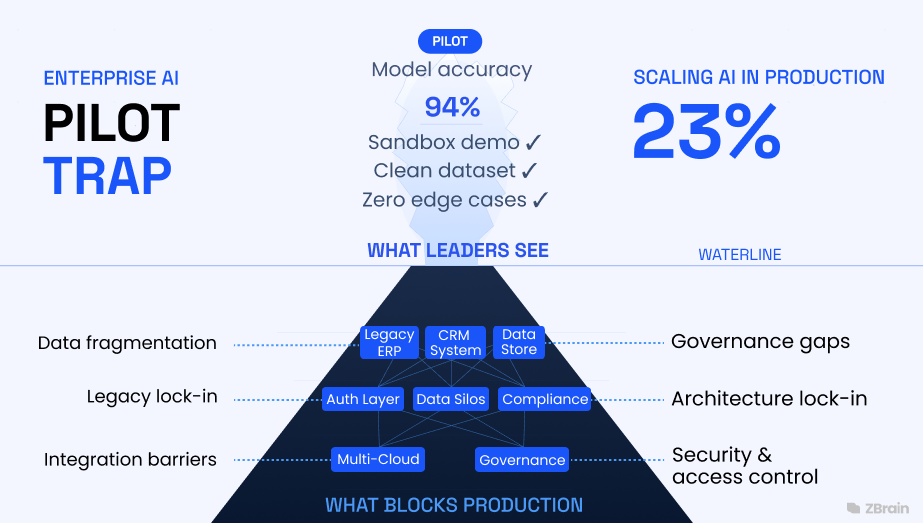

The gap between AI ambition and AI execution continues to widen across many enterprises. While organizations are investing heavily in AI initiatives, relatively few are converting experimentation into measurable business value. According to McKinsey & Company’s State of AI 2025 report, nearly two-thirds of organizations remain in the experimentation or pilot stage, and only a minority report scaling AI across enterprise operations. More specifically, the report notes that approximately 62% of organizations are experimenting with AI agents while only about 23% report scaling them in production environments, illustrating the significant gap between experimentation and operational deployment.

Comparable findings appear in research focused on generative AI adoption. Studies from the Capgemini Research Institute indicate that organizations continue to struggle to transition from isolated pilots to enterprise-wide deployments, with many initiatives remaining confined to experimentation. The result is an enterprise landscape characterized not by scaled AI capabilities but by large portfolios of disconnected experiments. Pilot programs often require substantial investments in engineering resources, data preparation, vendor licensing, and executive oversight. Yet when initiatives fail to progress beyond early experimentation, these investments generate limited long-term operational impact.

When AI initiatives fail to transition from pilot to production, organizations often attribute the failure to familiar constraints. These include shortages of AI talent, resistance to organizational change, insufficient production budgets, and the inherent complexity of specific use cases. While these explanations identify genuine sources of friction, they are more accurately understood as symptoms rather than root causes. They describe the conditions under which AI initiatives stall, but they do not explain why these conditions arise so consistently across organizations.

The underlying cause is structural. In many enterprises, AI pilots are developed on infrastructure that was not designed to support production deployment. Experimental systems are evaluated in sandboxed environments that bear little resemblance to the operational complexity of enterprise systems at scale. Production environments introduce fragmented data architectures, multiple interconnected systems, regulatory constraints, and governance requirements that are rarely addressed during the pilot stage. AI systems that perform well under controlled experimental conditions often encounter integration barriers when deployed in real-world enterprise environments.

A related issue concerns the metrics used to evaluate pilot success. Many organizations assess AI initiatives primarily through technical indicators such as model accuracy, benchmark scores, or hallucination rates. While such measures are useful for model development, they offer limited insight into the business value generated by the system. Executive decision-makers responsible for approving production deployment budgets generally evaluate initiatives based on measurable outcomes such as revenue generation, cost efficiency, or risk reduction. When pilot evaluations fail to connect technical performance with these business outcomes, scaling decisions often stall at the point where operational investment becomes necessary.

This article identifies what separates the 23% of organizations successfully scaling AI from the 77% that are not, and gives technology leaders a clear-eyed diagnosis of the architectural decisions that determine which side of that divide their organizations occupy. The enterprises on the production-ready side share a common infrastructure foundation: a model-agnostic orchestration layer with deep enterprise data integration, built-in governance, and human-in-the-loop feedback. This is the architecture that platforms like ZBrain Builder are specifically designed to provide.

Table of contents

- Foundational principles of effective solution architecture

- Two architecturally distinct approaches to enterprise AI

- A pattern repeating across four technology waves

- The performance gap: What production-ready looks like

- The human dimension: Why the talent narrative is the wrong diagnosis

- ZBrain Builder: Where enterprise AI architecture meets production reality

- The architecture of scalable AI: Eight non-negotiables

- Closing the pilot-to-production gap: Key actions for measurable business impact

Two architecturally distinct approaches to enterprise AI

A closer examination of enterprise AI initiatives reveals a structural divide in how organizations approach deployment. This divide does not primarily reflect differences in AI budgets, industry characteristics, or the availability of technical talent. Instead, it reflects architectural choices made early in the AI adoption journey.

Across industries, two distinct models of enterprise AI deployment are emerging. The first treats AI as a collection of experimental projects, each designed to test the potential of a specific technology or model. The second treats AI as enterprise infrastructure, designed to support repeatable, scalable deployment across multiple business functions.

Organizations following the first approach typically experience a persistent cycle of experimentation. Individual business units launch pilots, often focused on narrow use cases or isolated productivity improvements. Success is frequently defined using technical metrics such as model accuracy or prototype performance. However, because these initiatives are not designed with enterprise integration in mind from the outset, they often encounter significant barriers when organizations attempt to move them into production environments.

In contrast, organizations adopting the second approach design AI initiatives around operational integration and business impact from the beginning. Rather than launching isolated experiments, they use reusable infrastructure that enables AI systems to interact with enterprise data, workflows, and governance frameworks. In these environments, pilots are not standalone experiments but early stages in a structured deployment pipeline.

Research across multiple enterprise AI studies suggests that this architectural distinction is strongly correlated with scaling outcomes. Organizations that prioritize reusable infrastructure, cross-functional ownership, and governance integration are significantly more likely to transition AI initiatives into production systems.

The contrast between these two models can be summarized across several operational dimensions.

| Pilot-trapped enterprise | Production-ready enterprise |
|---|---|
| Evaluates AI projects in isolation | Builds reusable AI infrastructure from day one |
| Measures success by model accuracy scores | Measures success by business KPI impact |
| AI owned and operated by data science team | AI owned by cross-functional business units |
| New pilot launches before the prior one scales | Scales existing agents before starting new ones |
| Proprietary, single-vendor AI architecture | Model-agnostic, integration-first architecture |
| Governance retrofitted after deployment | Built-in governance at the architecture stage |
| Each deployment rebuilt from scratch | Reusable agent library grows with every deployment |
| Pilot success = model accuracy threshold met | Pilot success = production deployment achieved |

The distinction between these organizations is not determined by intelligence, capital, or access to AI talent. In most cases, both groups recognize the strategic importance of AI and possess capable technical teams. The difference lies in the architectural and organizational decisions made early in the AI journey, often before the first pilot is launched. These early choices determine whether AI initiatives become scalable enterprise capabilities or remain isolated experiments. Organizations that establish the right foundations early accelerate the value of their AI investments, while others spend years retrofitting infrastructure for scale.

A pattern repeating across four technology waves

The current challenges surrounding enterprise AI scaling are often treated as a new phenomenon. In practice, they reflect a recurring pattern in the history of enterprise technology adoption.

Over the past two decades, organizations have repeatedly encountered a similar dynamic when adopting transformative technologies. Early enthusiasm generates widespread experimentation, yet many initiatives fail to translate into sustained operational impact. In most cases, the limitation is not the underlying technology but the organizational and architectural context into which it is introduced.

Enterprises frequently attempt to apply new capabilities within existing operating models rather than redesigning processes, data architectures, and governance frameworks to support them. As a result, technologies that demonstrate strong potential in controlled environments encounter significant barriers when deployed at enterprise scale.

The current gap between AI experimentation and AI-driven operational impact follows this same historical pattern. Similar dynamics can be observed across four major waves of enterprise automation and intelligence technologies: robotic process automation (RPA), machine learning, generative AI, and the emerging generation of agentic AI systems.

Wave One: Robotic Process Automation (RPA) — Why early automation scaled

Robotic process automation achieved broader operational deployment than many subsequent AI technologies. The reasons are largely structural. RPA operates within a relatively narrow problem domain. It automates deterministic tasks governed by explicit rules and predictable workflows. Typical RPA deployments involve structured interactions with a limited number of systems, such as extracting data from an invoice, validating fields against predefined criteria, and updating entries in enterprise resource planning systems.

Because the logic is rule-based and fully auditable, organizations can deploy RPA without significantly altering underlying enterprise architectures. Integration requirements are limited, data dependencies are predictable, and operational outcomes are deterministic. These characteristics made RPA relatively simple to operationalize. However, the same constraints that enabled scalability also limited its broader applicability. RPA performs effectively when processes are well defined, repetitive, and stable. Once tasks require contextual reasoning, cross-system data synthesis, or judgment under uncertainty, rule-based automation approaches reach their limits.

Organizations that interpreted the relative success of RPA deployments as a template for scaling more sophisticated forms of AI automation often encountered significant challenges during subsequent technology waves.

Wave two: Machine Learning (ML) — The first production failure pattern

The enterprise adoption of machine learning demonstrated the first widespread instance of the pilot-to-production gap that continues to affect AI initiatives today. ML models frequently delivered strong predictive performance in controlled experimental environments. Fraud detection systems, demand forecasting models, and customer churn prediction algorithms achieved levels of analytical accuracy that exceeded traditional statistical approaches and manual analysis.

However, translating these models into operational systems proved significantly more complex. Successful deployment required developing data pipelines, real-time integration with enterprise applications, monitoring frameworks, model retraining mechanisms, and governance structures to manage model drift and operational risk. Many organizations had not anticipated these requirements during the pilot phase. As a result, models that demonstrated strong technical performance often remained confined to data science environments rather than becoming embedded in production workflows.

The pattern became common across industries: a team would develop a high-performing model over several months, demonstrate promising results to leadership, and then encounter extended delays when attempting to operationalize the system. Integration work frequently required more time and investment than the original model development.

In some cases, models became obsolete before they were fully deployed, replaced by evolving business requirements or newer analytical techniques. The result was growing skepticism among business leaders about the real operational value of machine learning investments.

Wave three: Generative AI — Rapid adoption, limited integration

The emergence of generative AI triggered the fastest wave of experimentation in the history of enterprise software. Large language models (LLMs) enabled a broad range of new applications across corporate functions. Customer service teams experimented with conversational assistants. Legal departments evaluated AI-assisted contract analysis. Finance teams tested tools for financial reporting and document generation. Marketing organizations deployed AI systems to assist with content production and campaign development.

Unlike earlier waves of technology, generative AI adoption occurred simultaneously across multiple organizational functions. The accessibility of foundation models and cloud-based AI services significantly reduced the barriers to experimentation. However, most deployments remained relatively shallow in terms of enterprise integration. Generative AI tools were often connected to operational systems through custom integrations or standalone interfaces rather than through deeply embedded enterprise architectures.

These implementations frequently performed well in demonstrations and limited pilot environments. Yet when organizations attempted to deploy them in production settings, they encountered familiar constraints: fragmented enterprise data, complex access-control requirements, compliance and regulatory considerations, and dependencies across multiple operational systems. As a result, a large share of generative AI pilots failed to progress beyond early experimentation stages.

Wave four: Agentic AI — A new capability facing familiar constraints

The current agentic AI wave represents a genuine step-change in capability. These systems can orchestrate complex, multi-step workflows across multiple enterprise systems; synthesize information from diverse, disparate data sources in real time; and autonomously take consequential actions within defined operational boundaries. They are not tools that augment individual knowledge workers; they are operational actors that can manage workflows, make decisions, and execute processes at a scale and speed no human team can match.

But the pattern is repeating. Agentic AI pilots are being evaluated in sandboxed environments disconnected from production data infrastructure. They are being built on single-vendor architectures that will impose lock-in costs at the scaling stage. They are being measured by technical performance benchmarks rather than business outcome KPIs. And they are being deployed without the governance infrastructure required for board-level production approval.

IBM IBV projects that AI-enabled workflows will grow from 3% to 25% of enterprise operations by the end of 2025. Gartner estimates that 40% of enterprise applications will embed AI agents by 2026, up from less than 5% today. The scale of the expected transition makes the architectural question urgent in a way it has not been before: the organizations that solve the pilot-to-production problem now will capture a first-mover advantage in a competitive landscape that is about to restructure around AI operational capability. Organizations that repeat the mistakes of the previous three waves will find themselves defending disappointing results to a board that has significantly raised its expectations.

The three root causes of the pilot trap

If the same failure pattern appears across multiple technology waves, the underlying causes are unlikely to be project-specific. Instead, they tend to be structural features of how organizations design, evaluate, and deploy new technologies. In the case of enterprise AI, three structural constraints account for the majority of pilot-to-production failures: fragmented data environments, restrictive architectural choices, and the absence of business-connected performance metrics.

Root cause 1: Data fragmentation

Fragmented enterprise data architectures remain one of the most persistent barriers to scaling AI. IBM’s Institute for Business Value reports that about 50% of CEOs acknowledge that the pace of AI investment has left their organizations with disconnected data environments.

This constraint is fundamental. AI agents require connected, real-time access to data across systems to operate effectively. An autonomous procurement agent that can see purchase orders but not inventory levels, supplier performance, or demand forecasts is not truly autonomous. It is simply an automated lookup tool operating with incomplete information.

This limitation typically surfaces during the transition from pilot to production. A supply chain pilot may perform well when querying a single system. But when teams attempt to extend the solution across multiple operational systems, integration complexity increases rapidly. Data pipelines, access controls, and system compatibility issues accumulate, slowing deployment and often causing the initiative to stall before reaching enterprise scale.

Root cause 2: Architecture lock-in

A second structural barrier arises from architectural decisions made during early experimentation. According to Deloitte research, nearly 60% of AI leaders cite integrating with legacy systems and existing enterprise infrastructure as the primary challenge when scaling agentic AI deployments.

Many organizations initially build pilots using proprietary or single-vendor model stacks because they enable rapid experimentation. However, this approach introduces architectural rigidity as deployments expand. Each additional system integrated into the workflow increases the cost and complexity of maintaining that vendor-specific architecture.

Organizations that successfully scale AI tend to follow a different principle: model-agnostic orchestration. Rather than binding the application architecture to a single foundation model, they build a flexible integration and workflow layer that can route tasks across multiple models. In this approach, the infrastructure beneath the models determines scalability far more than the choice of model itself.
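A minimal sketch of what model-agnostic routing can look like is shown below. It is illustrative only: `ModelRouter`, the task types, and the two stand-in model functions are hypothetical names, and a real deployment would wrap vendor SDK calls behind the same callable interface.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A "model" here is any callable that maps a prompt to a response.
ModelFn = Callable[[str], str]


@dataclass
class ModelRouter:
    """Routes each task type to whichever registered model is configured for it."""
    models: Dict[str, ModelFn]   # model name -> callable
    routes: Dict[str, str]       # task type -> model name
    default_model: str           # used when a task type has no explicit route

    def run(self, task_type: str, prompt: str) -> str:
        model_name = self.routes.get(task_type, self.default_model)
        return self.models[model_name](prompt)


# Hypothetical stand-ins for real vendor calls.
def small_fast_model(prompt: str) -> str:
    return f"[small] {prompt}"


def large_reasoning_model(prompt: str) -> str:
    return f"[large] {prompt}"


router = ModelRouter(
    models={"small": small_fast_model, "large": large_reasoning_model},
    routes={"classification": "small", "contract_review": "large"},
    default_model="small",
)

print(router.run("contract_review", "Summarize the indemnity clause."))

# Changing which model serves a task is a configuration change,
# not an application rewrite.
router.routes["contract_review"] = "small"
print(router.run("contract_review", "Summarize the indemnity clause."))
```

Because application code only ever calls `router.run`, the choice of model stays a routing decision that can be revisited as costs, capabilities, or vendors change.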

Root cause 3: Measurement vacuum

The third and often overlooked barrier is the absence of business-connected metrics. Many organizations evaluate pilots using technical indicators such as model accuracy, precision, recall, or hallucination rates. While useful for model development, these metrics rarely address the question most relevant to enterprise leadership: what measurable business value did the system create?

The gap becomes evident during funding decisions for production deployment. A pilot team may present strong technical performance, for example, a model achieving 94% accuracy. But executive leadership evaluates initiatives based on revenue impact, cost reduction, risk mitigation, or productivity improvement. When technical results are not clearly connected to these outcomes, the justification for scaling weakens.

As a result, pilots often demonstrate technical feasibility but fail to secure the investment required for enterprise-wide deployment.
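One way to connect a technical result to a business outcome is to translate accuracy into an estimated annual value. The sketch below does that with a deliberately simple model. Every input (case volume, minutes saved, rework time, labor cost, run cost) is a hypothetical assumption chosen for illustration, not data from any cited study.

```python
def annual_net_value(
    cases_per_year: int,
    accuracy: float,
    minutes_saved_per_correct_case: float,
    rework_minutes_per_error: float,
    loaded_cost_per_hour: float,
    annual_run_cost: float,
) -> float:
    """Estimate the yearly net value of an AI workflow (all inputs are assumptions)."""
    correct_cases = cases_per_year * accuracy
    error_cases = cases_per_year - correct_cases
    # Time saved on correct outputs, minus time spent fixing incorrect ones.
    net_minutes = (
        correct_cases * minutes_saved_per_correct_case
        - error_cases * rework_minutes_per_error
    )
    labor_value = net_minutes / 60 * loaded_cost_per_hour
    return labor_value - annual_run_cost


# Hypothetical pilot: 94% accuracy on 100,000 cases per year.
value = annual_net_value(
    cases_per_year=100_000,
    accuracy=0.94,
    minutes_saved_per_correct_case=6,
    rework_minutes_per_error=15,
    loaded_cost_per_hour=40,
    annual_run_cost=150_000,
)
print(f"Estimated annual net value: ${value:,.0f}")
```

Under these assumed inputs the estimate comes to about $166,000 per year. Rerunning the same function with 80% accuracy turns the estimate negative, which is the kind of statement an executive sponsor can act on in a way that "94% accuracy" alone is not.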

Streamline your operational workflows with ZBrain AI agents designed to address enterprise challenges.

The performance gap: What production-ready looks like

The gap between pilot-trapped and production-ready enterprises is not merely operational; it is exponential.

McKinsey’s 2025 State of AI research shows that AI high performers are three times more likely to be scaling agents across multiple business functions, demonstrating that value emerges when organizations move beyond isolated experimentation and embed AI into core operational workflows.

The economic impact becomes even more pronounced at the firm level. BCG research shows that AI-native companies achieve five times higher revenue growth and three times greater cost reductions compared with peers that are slower to integrate AI into their operating models. These differences increasingly translate into measurable shareholder value and competitive advantage.

PwC’s 2025 AI Jobs Barometer highlights a similar structural divide. Since 2022, productivity growth in industries most exposed to AI has nearly quadrupled, reflecting the growing performance gap between organizations that have integrated AI into operational workflows and those still experimenting with isolated deployments. Integration, in this sense, is not a deployment detail; it is the primary driver of productivity gains. Every quarter spent in pilot mode is effectively a quarter during which competitors accumulate operational advantage.

The scale of the coming transition further amplifies the urgency. As noted earlier, IBM IBV projects that AI-enabled workflows will grow from 3% to 25% of enterprise operations by the end of 2025, and Gartner estimates that 40% of enterprise applications will embed AI agents by 2026, up from less than 5% today.

These projections indicate that the key question for technology leaders is no longer whether AI will become part of enterprise operations, but how quickly their organizations can implement it. As the transition speeds up, the architectural choices made today will decide which organizations gain early scaling benefits and which stay stuck in prolonged experimentation. The urgency is therefore not rhetorical; it is structural.

The human dimension: Why scaling AI is not a talent problem

When AI scaling efforts stall, internal reviews often arrive at the same conclusion: the organization lacked the right talent. This diagnosis is not entirely wrong, but it is incomplete. Skills gaps do exist. In many cases, however, the talent narrative distracts from a deeper issue: the architectural foundations required for production deployment were never established.

Most enterprise teams already possess the capabilities required to build pilots. Data scientists, ML engineers, and product managers can develop impressive demonstrations in controlled environments. What many organizations lack is the architectural discipline needed to translate a successful demonstration into a production-grade system integrated across enterprise data, applications, and workflows. Building a pilot and deploying enterprise AI are fundamentally different problems.

Production-ready organizations increasingly reflect this distinction in the roles they establish. AI architects design the connectivity layer between AI agents and enterprise systems. Enterprise agent orchestrators manage workflow logic across multi-agent deployments. MLOps engineers maintain the monitoring, retraining, and quality assurance infrastructure required to keep production systems reliable over time.

Equally important is a cultural shift that cannot be solved through hiring alone. Scaling AI requires cross-functional ownership and cannot remain confined to one team. Production-grade AI deployment intersects with legal oversight, compliance requirements, operational processes, financial controls, and customer-facing systems. Organizations that continue to treat AI as a technology initiative rather than a business transformation effort will continue producing impressive pilots that never progress beyond experimental environments.

ZBrain Builder: Where enterprise AI architecture meets production reality

Every architectural requirement described in this article (model-agnostic orchestration, unified data integration, low-code deployment, built-in governance, and continuous improvement) exists because organizations need a specific kind of infrastructure that most are still attempting to assemble from point solutions. ZBrain Builder is that infrastructure: the enterprise agentic AI orchestration platform, purpose-built to deliver these requirements as a unified production foundation rather than a collection of tools bolted together.

ZBrain Builder capabilities: The production infrastructure stack

The following table maps each ZBrain Builder capability to the specific production gap it closes and the business outcome it enables:

| Pattern | Problem it solves | When to apply |
|---|---|---|
| CQRS (Command Query Responsibility Segregation) | Separates read and write operations for independent scaling | High-traffic systems with asymmetric read/write loads |
| Circuit breaker | Prevents cascade failures in distributed calls | Microservices and any remote service dependency |
| Strangler fig | Incrementally replaces a legacy system | Legacy modernization without big-bang replacement |
| Event sourcing | Stores state as a sequence of events | Audit trails, complex event replay, and financial systems |
| Bulkhead | Isolates failures within resource pools | Systems requiring fault containment at the component level |
| Anti-corruption layer | Protects a clean domain from legacy interfaces | Integration with legacy or third-party systems |
| Saga pattern | Manages distributed transactions | Multi-service business transactions without Two-Phase Commit (2PC) |
| Feature toggle | Controls feature exposure dynamically | Controlled rollouts, A/B testing, canary deployments |

A critical discipline is selecting patterns based on genuine need rather than theoretical completeness. The combination of CQRS, event sourcing, microservices, and a multi-cloud deployment is appropriate in very few real-world scenarios, yet it appears with alarming frequency in architecture proposals. Each pattern carries an operational cost; that cost must be justified by a concrete requirement.
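To make one of these patterns concrete, here is a minimal circuit breaker sketch. It is an illustration under stated assumptions, not a production implementation: the class name, the thresholds, and the injectable `clock` parameter are all hypothetical choices, and real systems typically add thread safety, metrics, and per-dependency configuration.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold  # consecutive failures before opening
        self.reset_timeout = reset_timeout          # seconds to wait before a trial call
        self.clock = clock                          # injectable for testing
        self.failures = 0
        self.opened_at = None                       # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                # Fail fast instead of piling more load onto a failing dependency.
                raise CircuitOpenError("circuit open; failing fast")
            # Timeout elapsed: half-open state, let one trial call through.
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            # A failed trial call, or too many consecutive failures, (re)opens the circuit.
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Any success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

A caller would wrap each remote invocation, for example `breaker.call(fetch_inventory, sku)` where `fetch_inventory` is whatever function performs the remote request. The operational cost mentioned above shows up here as extra state to monitor and tune, which is why the pattern only earns its place where a remote dependency can actually fail.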

Governance, reviews, and continuous improvement

Establish architectural governance structures

Without governance, architectural standards erode under delivery pressure. An Architecture Review Board (ARB) provides a forum for reviewing significant design decisions, enforcing standards, and maintaining reusable reference architectures. Effective governance enables teams to move quickly within well-understood boundaries rather than centralizing every technical decision as an approval bottleneck.

Conduct regular architecture reviews

Point-in-time architecture reviews conducted at major project milestones ensure the evolving implementation remains consistent with the intended design. The ISO/IEC 25010 quality model provides a useful framework covering functional suitability, performance efficiency, reliability, security, and maintainability. Reviews should be collaborative rather than adversarial, with shared learning as the primary objective.

Integrate architecture with enterprise architecture (EA) repositories

When solution designs are maintained within an EA repository, they benefit from real-time traceability to business capabilities, shared component libraries, and automated impact analysis when changes are proposed. A centralized platform provides a single source of truth, enabling intelligent reuse of existing components and better governance of technology standards across the enterprise portfolio.

Manage technical debt proactively

Technical debt should be treated as a managed backlog item: documented with a cost estimate, assigned a severity, and allocated a portion of each development cycle for systematic reduction. A common model reserves 20% of engineering capacity for debt remediation. Gartner estimates that organizations already spend up to 40% of their IT budgets maintaining existing technical debt rather than investing in innovation, a figure that compounds annually without proactive management.

Communication: The underrated dimension of architecture

Visualize to communicate

Architecture diagrams are communication tools, not documentation artifacts. Architects must maintain multiple views at different levels of abstraction: context diagrams for business stakeholders, component diagrams for development leads, and deployment diagrams for operations. Standard notations provide shared vocabulary that reduces ambiguity. The discipline of keeping diagrams current and audience-appropriate is as important as technical rigor.

Tell the story behind the design

Effective architectural communication means explaining not just what was decided, but why, what alternatives were considered, what trade-offs were made, and what assumptions underpin the design. Architecture Decision Records (ADRs) capture this context in a format that remains useful as the system evolves and team membership changes. If you cannot explain your solution clearly, you do not yet understand it well enough.

Engage stakeholders continuously

Architecture is a continuous discipline, not a phase. Solution designs should be reviewed by representatives of all affected groups, with feedback genuinely incorporated. Visual workshops and interactive design sessions are far more effective for building stakeholder alignment than distributing written documents for asynchronous review.

Balancing innovation and stability

The innovation-stability dilemma

Technology leaders face a persistent tension: innovate to remain competitive, but maintain stability to protect customer trust and operational continuity. The instinct to chase every emerging capability must be balanced against the discipline of protecting what already works. A practical way to resolve this tension is to treat engineering investment as a portfolio, deliberately segmenting capacity across three horizons: sustaining core systems, optimizing existing capabilities, and exploring new technologies. The proportions vary by industry context, but the principle of intentional, bounded innovation investment applies universally.

Controlled experimentation

Structured experimentation through feature flags, canary deployments, A/B testing, sandbox environments, and chaos engineering allows organizations to validate new approaches while protecting production systems. Progressive rollout – 1% to 10% to 50% to 100% – provides confidence in new capabilities before full deployment, with rollback capability at every stage.
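A percentage-based rollout can be sketched with deterministic hashing, so that a given user stays in the same bucket as the percentage increases. The function names and the `ROLLOUT_STAGES` constant below are hypothetical; feature-flag products implement the same idea with more controls.

```python
import hashlib

# Stages taken from the progression described above.
ROLLOUT_STAGES = [1, 10, 50, 100]


def bucket(user_id: str, feature: str) -> int:
    """Map a user to a stable bucket in the range 0-99 for a given feature."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(user_id: str, feature: str, rollout_percent: int) -> bool:
    """A user sees the feature when their bucket falls below the rollout percentage."""
    return bucket(user_id, feature) < rollout_percent


# Count how many of 1,000 hypothetical users are exposed at each stage.
users = [f"user-{i}" for i in range(1000)]
for percent in ROLLOUT_STAGES:
    exposed = sum(is_enabled(u, "new-checkout", percent) for u in users)
    print(f"{percent:>3}% stage: {exposed} of {len(users)} users exposed")
```

Because the bucket depends only on the feature name and user ID, anyone enabled at 10% remains enabled at 50%, and rolling back is a matter of lowering the percentage.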

Evolve, do not revolutionize

Large-scale ‘big bang’ replacements of enterprise systems carry a well-documented and consistently underestimated failure rate. The Strangler Fig pattern allows organizations to modernize complex legacy platforms incrementally, with continuous value delivery. Versioned APIs, backward-compatible database migrations, and deliberate deprecation cycles are the mechanisms that allow architecture to advance without leaving broken integrations in its wake.
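The core mechanism of the Strangler Fig pattern is a routing facade that sends already-migrated paths to the new system and everything else to the legacy one. The sketch below assumes simple prefix-based routing; `StranglerFacade` and the two handler functions are hypothetical names used only for illustration.

```python
from typing import Callable, Set

Handler = Callable[[str], str]


class StranglerFacade:
    """Routes requests to the new system for migrated paths, else to legacy."""

    def __init__(self, legacy: Handler, modern: Handler) -> None:
        self.legacy = legacy
        self.modern = modern
        self.migrated_prefixes: Set[str] = set()

    def migrate(self, prefix: str) -> None:
        """Mark a path prefix as served by the new system from now on."""
        self.migrated_prefixes.add(prefix)

    def handle(self, path: str) -> str:
        if any(path.startswith(p) for p in self.migrated_prefixes):
            return self.modern(path)
        return self.legacy(path)


# Hypothetical stand-ins for the two systems.
def legacy_system(path: str) -> str:
    return f"legacy handled {path}"


def new_service(path: str) -> str:
    return f"new service handled {path}"


facade = StranglerFacade(legacy_system, new_service)
facade.migrate("/invoices")

print(facade.handle("/invoices/42"))  # new service handled /invoices/42
print(facade.handle("/orders/7"))     # legacy handled /orders/7
```

Each call to `migrate` moves one slice of functionality, so value is delivered continuously and the legacy system can be retired once no routes point to it.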

Common pitfalls and how to avoid them

Over-engineering

The temptation to design the theoretically perfect system consistently produces architectures that are too complex to build, too expensive to operate, and too opaque to maintain. Start with the simplest architecture that meets current requirements and add complexity only when a concrete, observed requirement demands it. ‘We might need this later’ is not sufficient justification for architectural complexity.

Security as an afterthought

Treating security as a final-phase quality gate rather than an architectural input is one of the most persistent and costly failures in enterprise technology delivery. Threat modelling and secure design review must be integrated into the standard design process, not relegated to a separate team review late in the project lifecycle.

Neglecting non-functional requirements

Projects that focus exclusively on functional requirements consistently produce systems that work in demonstrations but fail under real-world operational demands. NFRs must be explicitly documented, measurable, and incorporated into acceptance criteria. For example, ‘The system shall be fast’ is not an architectural requirement. ‘P95 API response time under 200ms at 5,000 concurrent users’ is.
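A measurable NFR of this kind can be checked automatically as part of acceptance testing. The sketch below assumes latency samples have already been collected under the stated load (the 5,000 concurrent users are a condition of the test run, not something this code verifies) and uses the nearest-rank percentile definition. The function names are illustrative.

```python
import math
from typing import List


def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def meets_latency_nfr(latencies_ms: List[float], limit_ms: float = 200.0) -> bool:
    """True when the P95 latency is strictly under the limit."""
    return percentile(latencies_ms, 95) < limit_ms
```

A check like this can run in a load-test pipeline and fail the build when the P95 threshold is breached, which turns the requirement into an enforceable acceptance criterion.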

Ignoring the people dimension

A solution that is architecturally sound but practically unusable, due to poor change management, inadequate training, or designs that ignore actual user workflows, will fail to realize its intended value. The most successful architects balance deep technical capability with genuine curiosity about how people work, strong communication skills, and the humility to recognize that the best design is one that serves its users.

Streamline your operational workflows with ZBrain AI agents designed to address enterprise challenges.

Building an architecture-positive culture

Psychological safety for raising technical risks

Architects must be empowered to raise risks, surface trade-offs, and push back on unrealistic constraints without fear of political consequences. Cultures in which bad news is suppressed consistently produce projects that encounter predictable but undisclosed problems late in delivery.

Continuous learning and adaptation

Technology evolves continuously. Architectural standards that were current three years ago may be outdated today. Organizations should invest in structured learning programmes, communities of practice, and dedicated time for architects to evaluate emerging technologies and update reference architectures accordingly.

Learning-focused incident reviews

When systems fail, and they will, learning-focused incident reviews that concentrate on understanding systemic causes rather than assigning individual blame produce architectural improvements that reduce the likelihood of recurrence.

Architecture as a shared responsibility

In high-performing engineering organizations, architectural thinking is distributed across engineering teams, with architects providing enabling guidance, standards, and oversight. This model scales architectural capability as organizations grow rather than creating a centralised bottleneck.

TechBrain: AI-assisted enterprise architecture design in practice

The principles and practices outlined in this article – requirements traceability, design validation, structured documentation, governance, and cross-stakeholder alignment – represent the gold standard of solution architecture. Yet in practice, the translation from validated solution requirements to execution-ready technical designs remains one of the most consistently challenging phases of enterprise delivery. Requirements are scattered across fragmented sources, system dependencies are manually reconciled, key assumptions go unvalidated until development is underway, and documentation is produced retrospectively rather than as an integral part of the design process.

TechBrain, developed by ZBrain, is an enterprise-grade AI-assisted technical architecture design platform purpose-built to close this execution gap. Rather than replacing the judgment and experience of the solution architect, TechBrain augments it – providing a governed, structured workspace in which the practitioner’s expertise is amplified by AI-driven automation, validation, and synthesis.

What is TechBrain?

TechBrain transforms validated solution requirements into structured, build-ready architecture blueprints with traceability, completeness, and governance built in. Its core capability chain reflects the end-to-end architecture design workflow described throughout this article:

| Capability | What it does | Production gap it closes |
|---|---|---|
| Model agnostic | Routes each workflow to the optimal model (GPT-4, Claude, Gemini, Llama, Mistral) based on task, cost, and compliance without vendor lock-in | Architecture lock-in that makes future model adoption require full infrastructure rework |
| Multi-source data ingestion & ETL pipeline | Connects and transforms structured and unstructured data from ERP, CRM, APIs, PDFs, and databases into a real-time AI-queryable layer | Data fragmentation that forces agents to operate on incomplete or stale data |
| Private knowledge base | Maintains a governed, access-controlled vector store of enterprise knowledge with tagging, versioning, and lineage | Security and access-control blockers preventing AI from using sensitive enterprise data |
| Low-code workflow builder | Provides a visual interface to design and deploy multi-step agent workflows without heavy engineering dependency | Engineering bottlenecks limiting AI deployment speed |
| Reusable agent library | Stores and reuses agents as composable components, enabling faster deployment of new use cases | Rebuild-from-scratch cost for every new AI initiative |
| APPOps & observability dashboards | Offers real-time visibility into agent performance, workflows, and system health for both business and technical teams | Operational blindness where issues are detected too late |
| Evaluation suite | Continuously evaluates output quality, reasoning accuracy, and business KPI impact to detect degradation early | Silent performance decay in production systems |
| Ethics guardrails | Enforces policy, compliance, and behavioral constraints before and during execution | Out-of-scope behavior causing legal and reputational risks |
| Decision logging & audit trails | Maintains tamper-proof logs of inputs, decisions, and reasoning for full traceability | Delayed governance approvals due to lack of audit readiness |
| Human-in-the-Loop RLHF | Incorporates structured human feedback loops to continuously improve agent performance | Static deployments that degrade over time without improvement |

Together, these capabilities form a unified orchestration engine where every component reinforces the others: the ETL pipeline feeds the knowledge base, the knowledge base powers the agents, the FlowBuilder deploys them across business functions, APPOps monitors them, the evaluation suite scores them, guardrails govern them, audit trails account for them, and the RLHF loop improves them continuously, in production. Organizations deploying on ZBrain Builder can achieve shorter time-to-production because the infrastructure compounds rather than resets with each new deployment.

The architecture of scalable AI: Eight non-negotiables

Production-ready enterprises share a common architecture. It is not defined by which AI model they use; the model is almost incidental. What distinguishes them is the infrastructure they built beneath the model. Eight requirements define that infrastructure:

1. Model-agnostic orchestration

Lock-in to a single foundation model is one of the most common architecture mistakes in enterprise AI. Different tasks require different models. Customer-facing applications may demand different capabilities than internal analytics agents. A model-agnostic orchestration layer allows organizations to route each workflow to the most appropriate model, and to swap models as the market evolves without rebuilding the integration layer.
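One way to picture a model-agnostic orchestration layer is a thin router that maps task types to interchangeable model adapters. The sketch below is an assumption-laden illustration: `ModelRouter`, the task names, and the stub adapters are hypothetical, and real adapters would wrap each vendor's client behind the same callable signature.

```python
from dataclasses import dataclass
from typing import Callable, Dict

ModelFn = Callable[[str], str]  # a model adapter: prompt in, completion out


@dataclass
class ModelRouter:
    models: Dict[str, ModelFn]  # model name -> adapter (hypothetical stubs here)
    routes: Dict[str, str]      # task type -> model name
    default_model: str

    def complete(self, task: str, prompt: str) -> str:
        """Send the prompt to the model configured for this task type."""
        name = self.routes.get(task, self.default_model)
        return self.models[name](prompt)

    def swap(self, task: str, model_name: str) -> None:
        """Re-point a task at another registered model without touching callers."""
        if model_name not in self.models:
            raise KeyError(f"unknown model: {model_name}")
        self.routes[task] = model_name


# Stub adapters standing in for real vendor clients.
router = ModelRouter(
    models={
        "model-a": lambda prompt: f"[model-a] {prompt}",
        "model-b": lambda prompt: f"[model-b] {prompt}",
    },
    routes={"customer_support": "model-a"},
    default_model="model-b",
)
```

Callers depend only on `complete(task, prompt)`, so changing the model behind a workflow is a configuration change rather than an integration rebuild.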

2. Multi-source data integration with real-time refresh

Agents are only as intelligent as the data they can access. An orchestration platform that integrates structured and unstructured data from databases, documents, APIs, and cloud storage, and keeps that data current, is the difference between an agent that makes decisions on last quarter’s information and one that responds to what is happening now.
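Keeping data current implies knowing when it is not. As a minimal sketch, assuming each connected source records the time of its last successful refresh, a freshness check can flag sources that have aged past an agreed limit. The source names and the one-hour limit in the usage note are illustrative only.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List


def stale_sources(
    last_refreshed: Dict[str, datetime],
    max_age: timedelta,
    now: datetime,
) -> List[str]:
    """Return the names of sources whose last refresh is older than max_age."""
    return sorted(
        name for name, refreshed_at in last_refreshed.items()
        if now - refreshed_at > max_age
    )
```

For example, calling `stale_sources` with a CRM refreshed five minutes ago, an ERP refreshed three hours ago, and a one-hour limit returns only the ERP, which an orchestration layer could then re-sync or exclude before an agent acts on it.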

3. Low-code workflow builder

If only engineers can deploy agents, AI will never scale beyond engineering capacity. Low-code workflow builders that allow business teams in finance, HR, supply chain, and customer operations to configure and deploy agents against their own domain knowledge are the architectural requirement that moves AI from a technology project to an enterprise capability.

4. Built-in evaluation, guardrails, and observability

Production AI requires continuous monitoring. Guardrails that prevent agents from taking actions outside defined boundaries, evaluation frameworks that flag performance degradation, and observability dashboards that make agent behavior transparent are not optional features. They are the infrastructure that makes production AI trustworthy enough to scale.
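Two of these controls are simple to express in code. The sketch below assumes a guardrail implemented as an action allow-list and an evaluation check that compares recent quality scores against a baseline. The function names, the scoring scale, and the 0.05 tolerance are assumptions for illustration, not a description of any particular platform.

```python
from statistics import mean
from typing import Sequence, Set


def is_action_allowed(action: str, allowed_actions: Set[str]) -> bool:
    """Guardrail: an agent may only take actions on its allow-list."""
    return action in allowed_actions


def is_degraded(
    baseline: Sequence[float],
    recent: Sequence[float],
    tolerance: float = 0.05,
) -> bool:
    """Flag degradation when the recent mean score drops more than tolerance below baseline."""
    return mean(recent) < mean(baseline) - tolerance
```

In practice the allow-list check would run before every agent action, while the degradation check would run on a schedule and feed an alert or dashboard.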

5. Human-in-the-Loop feedback mechanism

Agents improve through feedback. A structured mechanism for human reviewers to validate, correct, and rate agent outputs, and for that feedback to flow back into model performance, is what distinguishes a static deployment from a continuously improving one. Production-ready AI is deployed and iterated, not forgotten.

6. Enterprise governance and auditability

Production AI must operate within regulatory, security, and ethical boundaries. Governance cannot be retrofitted after deployment. Enterprise-grade AI infrastructure must include decision traceability, audit logs, access controls, and policy enforcement that make every agent action transparent and reviewable. Without built-in governance, organizations struggle to obtain approval for production deployment in regulated environments where accountability and explainability are mandatory.
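Decision traceability is often implemented with an append-only log in which each entry includes a hash of the previous one, so that later edits become detectable. The following is a minimal sketch of that hash-chaining technique under stated assumptions: the record fields are hypothetical, and a chain like this makes tampering evident rather than impossible, since it still relies on protecting the stored log or its latest hash.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, record: Dict) -> str:
    """Hash the record together with the previous entry's hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode()).hexdigest()


def append_entry(log: List[Dict], record: Dict) -> None:
    """Append a record, chaining it to the hash of the last entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "record": record,
        "prev": prev_hash,
        "hash": _entry_hash(prev_hash, record),
    })


def verify(log: List[Dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        expected = _entry_hash(prev_hash, entry["record"])
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True
```

An auditor can run `verify` over the full log at any time; a single altered input, decision, or ordering causes it to return `False`.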

7. Reusable agent and component architecture

Scalable AI infrastructure compounds over time. Every deployed agent should become a reusable component that can be adapted, extended, or integrated into future workflows. Organizations that treat each deployment as a standalone project rebuild the same capabilities repeatedly, slowing adoption and increasing costs. A reusable architecture enables enterprises to build an expanding library of agents, integrations, and workflows that accelerate each subsequent deployment.

8. Enterprise security and access control

AI agents interact with sensitive enterprise systems, data, and workflows. Production deployments, therefore, require security frameworks that enforce authentication, authorization, and data access policies across every interaction. Agents must operate within clearly defined permissions, ensuring they only access the data and systems required for their tasks. Without a robust security architecture, organizations either block AI access to critical systems or expose themselves to unacceptable operational and compliance risk.
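The least-privilege principle described here can be sketched as a scope check that runs before any agent touches a resource. The `AgentIdentity` type, the `resource:action` scope format, and the in-memory store are assumptions for the example. A real deployment would delegate authentication and policy evaluation to the organization's identity and access management systems.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


class PermissionDenied(Exception):
    """Raised when an agent lacks the scope required for an operation."""


@dataclass(frozen=True)
class AgentIdentity:
    name: str
    scopes: FrozenSet[str]  # e.g. {"crm:read"}; format is an illustrative assumption


def authorize(agent: AgentIdentity, resource: str, action: str) -> None:
    """Raise unless the agent holds the exact scope for this resource and action."""
    required = f"{resource}:{action}"
    if required not in agent.scopes:
        raise PermissionDenied(f"{agent.name} lacks scope {required}")


def read_resource(agent: AgentIdentity, resource: str, store: Dict[str, str]) -> str:
    """Check authorization first, then return the requested data."""
    authorize(agent, resource, "read")
    return store[resource]
```

Because the check is deny-by-default, an agent granted only `crm:read` cannot read payroll data or write to the CRM unless those scopes are added explicitly.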

To architect this at enterprise scale, organizations are turning to platforms that unify these eight requirements under a single orchestration layer, rather than attempting to assemble them from disparate point solutions. The integration overhead of assembling a model-agnostic stack from scratch is itself a scaling barrier. Enterprise AI orchestration platforms can reduce time-to-production from 12–18 months to weeks.

Closing the pilot-to-production gap: Key actions for measurable business impact

The path from pilot-trapped to production-ready is not a multi-year transformation program. It is a series of deliberate architectural and organizational decisions that compound over time. These five actions represent the highest-leverage starting points:

- Conduct an honest pilot-to-production conversion audit

What percentage of your AI pilots have reached enterprise-wide deployment in the past 24 months? For those that stalled, document precisely where and why the transition failed. In most organizations, the answer traces back to one of three structural constraints: fragmented data architectures, vendor lock-in, or the absence of business-connected success metrics.

- Define your AI infrastructure non-negotiables before the next pilot begins

Before approving a new AI initiative, confirm that it will be built on model-agnostic, data-integrated, and governance-ready infrastructure. Architectural decisions made during experimentation determine whether scaling will be possible later. The time to establish these standards is before the pilot starts, not after it succeeds.

- Define ownership across every stage of AI deployment

The transition from experimentation to production is where most AI initiatives stall, not due to technology limitations, but due to the absence of defined ownership across the delivery stages that make scaling possible. Organizations that successfully operationalize AI establish clear accountability at each critical stage of that transition: data integration, governance, operational readiness, and business outcome measurement. This is not a single-role responsibility. It is a cross-functional coordination requirement in which each stage has an identified owner, and those owners are aligned on a shared deployment objective rather than working in independent workstreams.

- Build a reusable agent library

Every successful pilot should produce reusable components that can be deployed, adapted, or extended across additional functions. Organizations that rebuild solutions from scratch for each new use case fail to accumulate architectural leverage. Production-ready enterprises treat each deployment as a building block in a growing portfolio of reusable AI capabilities.

- Tie pilot success metrics to production deployment outcomes

Model accuracy alone is not a meaningful success metric. The defining measure of a pilot should be whether it progresses to production and delivers measurable business value once deployed. Establishing this expectation before the pilot begins fundamentally changes how initiatives are designed, evaluated, and prioritized.
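The conversion audit in the first action above reduces to two numbers: the share of pilots that reached production and a tally of why the rest stalled. A minimal sketch follows, assuming each pilot is recorded with a production flag and an optional documented stall reason. The `Pilot` type and the reason labels are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Pilot:
    name: str
    in_production: bool
    stall_reason: Optional[str] = None  # documented only for stalled pilots


def conversion_audit(pilots: List[Pilot]) -> Dict[str, object]:
    """Return the pilot-to-production conversion rate and a count of stall reasons."""
    if not pilots:
        raise ValueError("no pilots to audit")
    converted = sum(1 for p in pilots if p.in_production)
    reasons = Counter(
        p.stall_reason or "undocumented"
        for p in pilots
        if not p.in_production
    )
    return {
        "conversion_rate": converted / len(pilots),
        "stall_reasons": dict(reasons),
    }
```

Stalled pilots with no recorded reason are counted as "undocumented", which is itself a useful signal about how rigorously the transition is being tracked.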

Organizations that take these steps begin shifting AI from isolated experimentation toward operational capability. The difference between pilot activity and production impact rarely lies in the sophistication of the models being tested. More often, it reflects the discipline with which enterprises design the architecture, governance, and organizational ownership required to scale AI into everyday operations.


Endnote

The 77% of organizations still trapped in pilot mode are not failing because they lack ambition, budget, or belief in AI. They are failing because the infrastructure beneath their ambition was never built to carry it. Every wave of enterprise technology has produced this same moment: the point where experimentation has to give way to architecture, where enthusiasm has to be matched by engineering, and where the question shifts from ‘can AI do this?’ to ‘have we built the foundation for AI to do this at scale?’ That question is now unavoidable. And the answer, for most organizations, is not yet.

ZBrain Builder exists precisely for this inflection point, not as another tool to add to an already fragmented AI stack, but as the unified orchestration layer that transforms a portfolio of promising pilots into compounding enterprise capability. The organizations that will lead their industries in three years are not necessarily running the most pilots today. They are the ones quietly building infrastructure that makes every subsequent deployment faster, smarter, and more governable than the last. The pilot era is ending. The infrastructure era has begun. The only question left is which side of that transition your organization is building toward.

Move beyond AI pilots to production-ready agents that deliver measurable business impact. Explore how ZBrain Builder helps operationalize enterprise AI at scale. Book a demo today.

Author’s Bio

An early adopter of emerging technologies, Akash leads innovation in AI, driving transformative solutions that enhance business operations. With his entrepreneurial spirit, technical acumen and passion for AI, Akash continues to explore new horizons, empowering businesses with solutions that enable seamless automation, intelligent decision-making, and next-generation digital experiences.

Frequently Asked Questions

Why do most enterprise AI pilots fail to reach production?

The core reason is architectural, not operational. Most pilots are built on infrastructure disconnected from real enterprise systems and lack data integration, governance, and business-outcome metrics. When scaling is attempted, the gaps become insurmountable. The result is a portfolio of experiments that never deliver operational value.

What is the "pilot trap" and how do organizations get stuck in it?

The pilot trap is a cycle in which organizations continuously launch new AI experiments before prior ones have scaled. It’s driven by measuring success through model accuracy rather than production deployment, siloing AI within data science teams, and rebuilding infrastructure from scratch for every new initiative instead of compounding on reusable foundations.

How is agentic AI different from previous AI waves like RPA or machine learning?

Agentic AI can orchestrate multi-step workflows across systems, synthesize real-time data, and take autonomous action, far beyond the rule-based determinism of RPA or the model-in-isolation nature of most ML deployments. But it also introduces greater architectural complexity, making the infrastructure decisions even more consequential than in previous waves.

What separates the 23% of enterprises successfully scaling AI from the rest?

Production-ready enterprises share a common infrastructure foundation: model-agnostic orchestration, multi-source data integration, low-code deployment tools, built-in governance, and human-in-the-loop feedback. Critically, they also measure pilot success by production deployment and business KPI impact, not by technical benchmarks.

How does data fragmentation prevent AI from scaling?

AI agents require connected, real-time access to data across functions. When enterprise data sits in siloed systems, agents can only operate on partial information, limiting their decision-making capability. Scaling an agent from one system to several in production often multiplies integration complexity to a point that stalls the entire initiative.

How does ZBrain Builder help organizations move from pilot to production?

ZBrain Builder provides a unified orchestration engine that addresses all production prerequisites in one platform: multi-LLM routing, multi-source data integration, low-code workflow deployment, built-in governance and guardrails, and human-in-the-loop feedback. Organizations using it can achieve shorter time-to-production with each subsequent use case, because the infrastructure compounds rather than resets with each new deployment.

What makes ZBrain Builder's approach to governance different from other platforms?

Most platforms treat governance as a post-deployment retrofit, something to address after the agent is already running. ZBrain Builder builds governance in at the architecture level: ethics guardrails validate every agent action before and during execution, tamper-evident decision logs make every deployment audit-ready from day one, and access-controlled knowledge bases ensure sensitive data is never exposed beyond defined permissions. This is what enables production approval in regulated environments without months of compliance remediation.

How much faster do organizations reach production when they deploy on ZBrain Builder?

Organizations deploying on ZBrain Builder report shorter time-to-production timelines with each subsequent use case. The reason is compounding infrastructure: every deployed agent is stored as a reusable component in the agent library, so new use cases build on existing agents rather than starting from scratch. Unlike point-solution approaches, where every deployment resets the clock, ZBrain Builder’s architecture accelerates with each new deployment rather than repeating the same fixed cost.

How do we get started with ZBrain™?

To begin your AI journey with ZBrain™:

- Contact us at hello@zbrain.ai

- Or fill out the inquiry form on zbrain.ai

Our dedicated team will work with you to evaluate your current AI development environment, identify key opportunities for AI integration, and design a customized pilot plan tailored to your organization’s goals.