Transforming enterprise automation with agentic AI orchestration

Agentic AI workflows—where AI systems autonomously interpret goals, decompose them into multistep plans, and execute conditional actions—are rapidly moving from experimental research into real-world enterprise deployments. What was once confined to innovation labs is now becoming core operational infrastructure.

By 2025, an estimated 85% of large organizations will have implemented AI agents to streamline operations and customer service. These workflows promise substantial gains: McKinsey projects $2.6 trillion to $4.4 trillion in annual value from the use of generative AI in various applications, while individual productivity can increase by up to 30% when agents handle routine tasks. Gartner forecasts that by 2028, one-third of enterprise software will embed agentic AI, automating 15% of day-to-day decisions.

However, these gains are not achievable with simplistic “LLM wrapper” approaches. Linear, single-shot interactions lack the reliability, observability, security, and governance required in complex, regulated enterprise environments. As organizations move from experimentation to production, they are discovering that agentic AI success depends less on the model itself and more on orchestration, control, and system design.

This article examines why agentic AI orchestration is becoming the foundation for scalable enterprise autonomy and demonstrates how ZBrain Builder operationalizes these principles—providing a structured, governance-ready platform for designing, coordinating, and scaling end-to-end autonomous workflows.

- The GenAI divide in enterprises

- What is agentic AI, and why does it matter?

- Agentic AI orchestration: Coordinating autonomous workflows at scale

- Key capabilities of agentic AI orchestration

- Types of agentic AI orchestration patterns

- Implementation strategy for agentic AI orchestration

- Core components of agentic AI: A module-based breakdown of autonomous LLM-powered systems

- Core stages of an agentic AI workflow

- Adoption considerations for agentic AI: Performance, security and governance

- How ZBrain Builder’s agent crew framework operationalizes agentic AI principles

- Best practices for implementing and scaling agentic AI with ZBrain Builder

The GenAI divide in enterprises

Generative AI has rapidly progressed from experimentation to widespread enterprise adoption, reshaping how organizations approach innovation, productivity, and decision-making. Early deployments showed promising gains, and investment followed: by mid-2024, nearly two-thirds of enterprises already using generative AI had increased their spending.

Yet the results tell a different story. Despite significant spending, most enterprise AI initiatives fail to deliver measurable business impact. Research from MIT suggests that as many as 95% of enterprise generative AI pilots fail to deliver rapid, measurable revenue impact — representing what the report calls the clearest manifestation of the “GenAI Divide,” the widening gap between organizations that experiment with AI and those that successfully operationalize it at scale.

The root cause is not model quality or compute limitations. It is deployment. Many enterprise AI systems remain stateless, reactive copilots—disconnected from real workflows, unable to carry objectives forward, and incapable of adapting as conditions change. Built as isolated tools rather than embedded operational systems, they struggle to operate within the complexity of real business environments.

This limitation signals the next evolution of enterprise AI: a shift beyond prompt-driven generation toward systems that can reason about goals, coordinate actions across tools, and execute work autonomously over time. This evolution is known as agentic AI.

What is agentic AI, and why does it matter?

Agentic AI refers to a class of artificial intelligence systems designed to act autonomously in pursuit of defined goals, rather than merely responding to prompts or executing predefined rules. Unlike traditional AI systems—which analyze data or generate outputs in isolation—agentic AI systems can interpret objectives, plan multi-step actions, invoke tools, adapt to changing conditions, and continuously refine their behavior with minimal human intervention.

The term agentic derives from “agency”: the capacity to act independently and purposefully. In practical terms, this means an agentic AI system does not wait passively for instructions. Instead, once a goal is provided, it evaluates relevant context, determines the actions required to achieve it, executes them, and adjusts its strategy based on observed outcomes and feedback.

This represents a structural shift in how AI systems operate. Traditional and generative AI models are largely reactive; they respond to inputs but do not persist intent or carry objectives forward beyond a single interaction. Agentic AI introduces continuity, statefulness, and goal-driven initiative, enabling systems to evaluate context, reason through alternatives, and orchestrate complex workflows rather than merely generate isolated outputs.

Agentic AI and AI agents

It is important to distinguish between agentic AI and AI agents, as the two terms are related but not interchangeable.

Agentic AI refers to a class of AI systems designed for autonomous, goal-driven problem-solving with limited human supervision. It defines the broader system architecture — including planning, memory, orchestration and governance — that enables AI to operate independently over time.

AI agents, by contrast, are the individual software components operating within that framework. Each agent is designed to perform specific tasks with a degree of autonomy, such as retrieving data, generating analysis, invoking APIs or validating results.

In practical terms, agentic AI provides the coordination layer, while AI agents serve as the execution units.

For example, consider an enterprise procurement workflow. The agentic AI system manages the overall objective — ensuring timely, policy-aligned vendor onboarding and purchase approval workflows. Individual AI agents handle specialized tasks such as validating vendor documents, checking compliance requirements, generating purchase orders, and updating ERP systems. While each agent performs its assigned function, the agentic AI system coordinates sequencing, enforces policies, and ensures all actions align with organizational rules and budget constraints.

This distinction becomes especially important in enterprise environments, where multiple specialized agents must collaborate under a governed orchestration layer to deliver reliable, end-to-end outcomes.

How agentic AI works

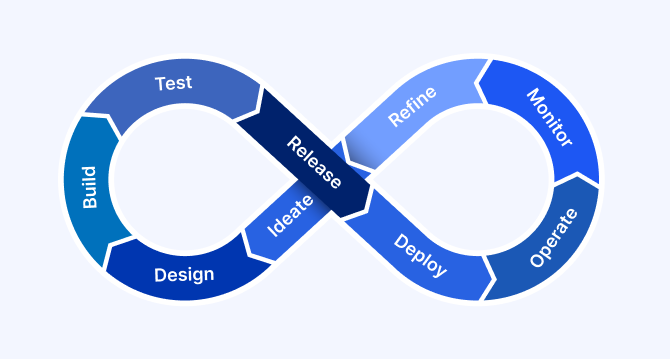

An agentic AI workflow typically follows an iterative sequence:

- Observe: Collect relevant information from the current context.

- Plan: Analyze the objective, break it down into actionable subtasks and identify necessary tools or integrations.

- Act: Execute specific tasks – such as API calls, data retrieval or system interactions – without manual oversight.

- Evaluate: Review outcomes and determine success or the need for adjustments.

- Iterate: Continuously cycle through these steps until completion or predefined thresholds (such as time, cost or policy constraints) are met.
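
The cycle above can be sketched as a small control loop. This is a minimal illustration, not a reference implementation: `run_agent_loop`, `LoopResult` and the toy `observe`, `plan`, `act` and `evaluate` functions are hypothetical names, and the only "predefined threshold" modeled here is an iteration cap.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LoopResult:
    done: bool
    iterations: int
    history: list = field(default_factory=list)


def run_agent_loop(
    observe: Callable[[], dict],
    plan: Callable[[dict], list],
    act: Callable[[str], str],
    evaluate: Callable[[list], bool],
    max_iterations: int = 5,
) -> LoopResult:
    """Cycle observe -> plan -> act -> evaluate until success or the iteration cap."""
    history = []
    for i in range(1, max_iterations + 1):
        context = observe()                        # Observe: gather current context
        steps = plan(context)                      # Plan: break the goal into subtasks
        outcomes = [act(step) for step in steps]   # Act: execute each subtask
        history.append(outcomes)
        if evaluate(outcomes):                     # Evaluate: stop once the goal is met
            return LoopResult(True, i, history)
    return LoopResult(False, max_iterations, history)  # cap reached without success


# Toy stand-ins (assumptions): a "service" that recovers on the second restart.
attempts = {"count": 0}


def observe():
    return {"service_up": attempts["count"] >= 2}


def plan(context):
    return [] if context["service_up"] else ["restart_service"]


def act(step):
    attempts["count"] += 1
    return "ok" if attempts["count"] >= 2 else "failed"


def evaluate(outcomes):
    return all(o == "ok" for o in outcomes)


result = run_agent_loop(observe, plan, act, evaluate)
```

In a real system the four callables would wrap model calls and tool integrations, and the cap would typically be joined by time, cost and policy limits.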

From reactive AI to autonomous systems

Enterprise AI has evolved in distinct phases. Early predictive AI focused on forecasting outcomes from historical data. Generative AI expanded these capabilities by producing text, images, and code in response to prompts. However, both paradigms remain largely reactive—they assist users but do not independently manage objectives over time.

Agentic AI represents the next evolutionary step. It extends generative models with autonomy, memory, reasoning, and action, enabling AI systems to operate within real business workflows end-to-end. Rather than answering a single question, an agentic system can manage an entire process over time.

For example, instead of merely suggesting how to resolve a customer issue, an agentic AI system can:

- Detect an issue from incoming signals

- Retrieve relevant data from enterprise systems

- Decide on a resolution path

- Execute corrective actions via APIs

- Escalate to humans only when predefined risk or confidence thresholds are crossed

This shift—from isolated assistance to sustained execution—fundamentally differentiates agentic AI from prior AI approaches.

Agentic AI in practice: from assistance to execution

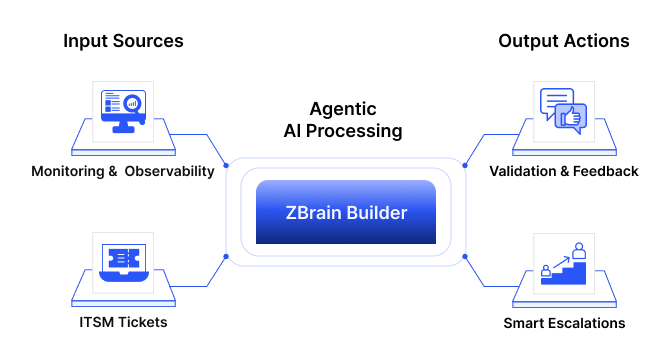

In real-world enterprise deployments, agentic AI systems function as a digital operations layer across IT environments.

Consider an IT incident scenario. When a critical system alert is triggered, an agentic AI system can:

- Classify the severity of the issue

- Retrieve logs and system diagnostics

- Correlate similar past incidents

- Execute predefined remediation scripts

- Notify stakeholders and update ticketing systems

- Escalate to human engineers if risk thresholds are exceeded

These actions are not rigidly scripted. The system evaluates context, selects appropriate tools, and dynamically adapts its response.

At scale, multiple specialized agents (monitoring, diagnostics, remediation, and compliance) coordinate through orchestration logic and shared state. This transforms AI from a passive support tool into an active operational responder.

Strategic importance of agentic AI for enterprises

Adopting agentic AI is not simply an incremental improvement over conventional AI – it is a strategic evolution. Here’s why leading enterprises are embracing it:

- Enhanced adaptability: Agentic AI dynamically adjusts in real time, responding intelligently to shifting data or unforeseen circumstances. This reduces dependence on frequent manual interventions, allowing enterprises to operate with agility at scale.

- Sophisticated problem-solving: Complex business challenges often involve multiple decision points, ambiguity and varying conditions. Agentic AI systems excel at breaking down complex problems into smaller, manageable tasks, navigating conditional pathways and continuously refining strategies to reach optimal solutions.

- Autonomous coordination across systems: Agentic AI autonomously orchestrates multistep workflows – ranging from customer interactions and internal data queries to external API calls – without human intervention. For example, an agentic AI deployed in IT support could autonomously:

  - Engage users by asking clarifying questions

  - Retrieve information from internal knowledge bases

  - Automatically resolve issues or escalate appropriately

- Scaling specialized expertise: By encoding business logic, domain rules, and contextual reasoning into autonomous agents, organizations can scale expert-level decision-making across workflows without being constrained by individual availability.

- Governed autonomy: Organizations maintain essential governance and auditability by logging every action, decision and data interaction undertaken by the AI in detail. This transparent framework facilitates compliance, risk management and effective oversight.

How is agentic AI different from traditional AI agents?

| Dimension | Traditional AI agents | Agentic AI systems |
|---|---|---|
| Decision-making | Limited to a predefined domain; escalates ambiguity | Holistic situational analysis; adapts autonomously |
| Problem-solving | Handles tasks within a specialized, trained scope | Plans and executes multi-step solutions across domains without constant human intervention |
| Learning and adaptation | Improves only with explicit retraining | Evolves continuously by learning from outcomes; adapts strategies autonomously |
| Input tolerance | Requires structured data and clear schemas | Interprets ambiguous, unstructured, and noisy data |
| Resilience | Brittle; failures halt execution | Resilient; detects failures and pivots to alternative paths |
| Integration capability | Integrates within pre-defined workflows | Dynamically orchestrates multiple systems |

Agentic AI vs. basic LLM wrappers

The typical LLM-based approach (often called a “basic wrapper”) simply takes an input, possibly enriches it, makes a single call to an LLM, and returns a static response. In contrast, agentic AI extends the capabilities significantly:

| Aspect | Agentic AI | Basic LLM wrapper |
|---|---|---|
| Control flow | Dynamically determines next steps based on context | Static linear workflow |
| Tool integration | Autonomously invokes multiple APIs, scripts, and databases | Single LLM call with fixed augmentation |
| Iteration and refinement | Iterative observe-plan-act loops until goals are met | Single-shot execution without iteration |
| Complex task handling | Manages multi-step, conditional, and ambiguous workflows | Suitable only for simple Q&A or templated tasks |
| State management | Maintains shared state and progress over time | Stateless, one-shot interactions |
| Error recovery | Detects failures, autonomously retries, and routes tasks to other agents | Errors immediately escalate to human operators |
| Data retrieval | Dynamic, agentic RAG with source validation | Static RAG from fixed vector stores |
| Human involvement | Minimal, primarily for strategic oversight or escalation | Frequently requires human intervention |
| Use cases | IT support, end-to-end process automation, and research assistants | FAQ chatbots, document summarization, and simple AI applications |

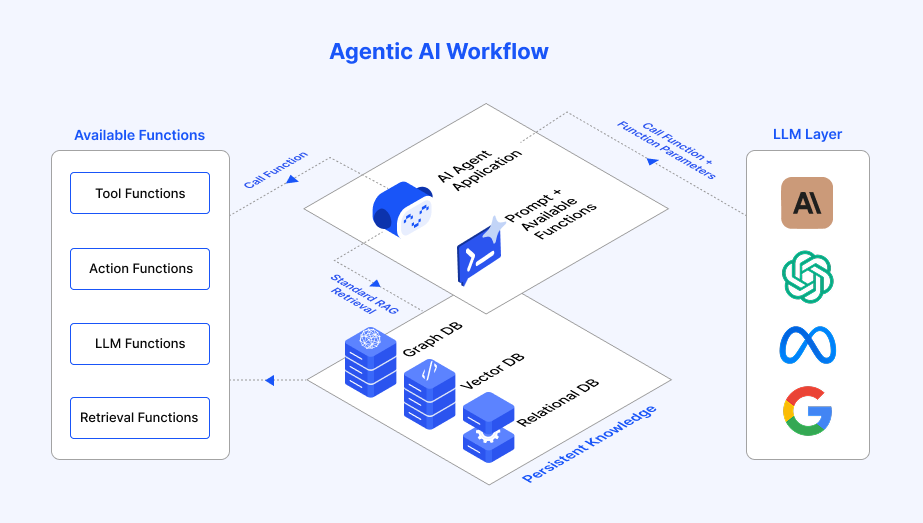

Core architectural components of agentic AI

Agentic AI systems comprise several essential building blocks:

- AI agents: Autonomous software components capable of independent reasoning, planning and executing actions.

- Environment: The digital business or operational context in which agents operate and interact.

- Shared memory (knowledge repository): A central hub enabling seamless communication, information sharing and coordinated strategies among multiple agents.

- Tooling and integration layer: APIs, databases or services that agents dynamically use to achieve their business objectives.

This architecture allows individual agents to operate autonomously, collaborate effectively and continuously enhance collective performance by learning from shared experiences.

For enterprise leaders, adopting agentic AI represents more than technological advancement – it is a strategic imperative. By transitioning from passive AI solutions to autonomous, intelligent workflows, organizations can significantly enhance their operational efficiency, responsiveness, and problem-solving capabilities. Agentic AI unlocks deeper reasoning, adaptive decision-making and seamless integration across organizational systems, enabling enterprises to achieve higher productivity, agility and sustained competitive advantage in a rapidly evolving digital landscape.

Agentic AI orchestration: Coordinating autonomous workflows at scale

As agentic AI systems evolve from single-agent workflows into multi-agent system deployments, orchestration becomes the defining control layer.

Individual agents can interpret goals, generate plans and invoke tools. However, enterprise-grade environments require structured coordination across multiple agents, data sources, enterprise systems and governance controls. Without orchestration, autonomous agents may operate effectively in isolation but struggle to scale reliably within complex operational ecosystems.

Agentic AI orchestration refers to the structured framework that supervises, coordinates and governs autonomous AI agents operating within shared workflows. It ensures that execution is sequenced correctly, context is preserved, failures are managed systematically, and enterprise policies are enforced consistently.

If workflows define what agents do, orchestration defines how those agents interact and how they are coordinated, monitored, and held accountable for their actions.

Why orchestration becomes essential in enterprise environments

In controlled experiments, a single agent interacting with a limited set of tools may be sufficient. In production environments, however, the landscape becomes significantly more complex.

Enterprise deployments typically involve:

- Multiple specialized agents operating in parallel to achieve a goal

- Integrations with enterprise systems, databases and external APIs

- Real-time state synchronization across workflows

- Regulatory and compliance constraints

- Budget and latency thresholds

- Audit and traceability requirements

Without orchestration, autonomous systems can become fragmented and opaque. Agents may duplicate actions, compete for system resources, enter uncontrolled execution loops or produce outputs that lack traceability.

Orchestration introduces structural discipline. It transforms autonomous reasoning systems into coordinated, accountable and production-ready digital workforces.

Key capabilities of agentic AI orchestration

A mature orchestration layer performs several interconnected functions that enable scalable multi-agent system coordination.

1. Task routing and agent selection

Not every task should be handled by the same model or agent. Orchestration introduces intelligent routing mechanisms that dynamically allocate work based on context.

- Task routing to specialized agents: Incoming tasks are directed to the most appropriate specialized agent based on domain, complexity, or risk level. This ensures each objective is handled by the agent best suited for that function.

- Context-aware activation: Routing decisions can incorporate user roles, historical session data and system state, ensuring agents are activated only when appropriate.

Effective routing improves performance, reduces cost and ensures the appropriate expertise is applied to each objective.
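
As a rough illustration of the two routing ideas above, the following sketch routes by domain and applies one context-aware rule. The `Task` fields, agent names and the rule that sends high-risk requests from non-admins to a review queue are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    domain: str      # e.g. "billing" or "it_support"
    risk: str        # "low" or "high"
    user_role: str   # role of the requesting user


# Hypothetical registry mapping a domain to the agent that specializes in it.
AGENTS_BY_DOMAIN = {
    "billing": "billing_agent",
    "it_support": "it_support_agent",
}


def route(task: Task) -> str:
    """Return the name of the agent (or queue) that should handle the task."""
    # Context-aware activation: high-risk work from non-admins goes to human review.
    if task.risk == "high" and task.user_role != "admin":
        return "human_review_queue"
    # Domain-based routing, with a general-purpose fallback for unknown domains.
    return AGENTS_BY_DOMAIN.get(task.domain, "generalist_agent")
```

Production routers often use a classifier or an LLM call instead of a lookup table; the table keeps the decision easy to audit.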

2. Workflow coordination and dependency management

Enterprise workflows often involve conditional logic, parallel execution and interdependent subtasks. Orchestration ensures structured sequencing across these components.

- Execution graph management: Workflows are often modeled as directed graphs that define task dependencies. Independent subtasks can execute in parallel while preserving logical ordering for dependent steps.

- Event-driven progression: When one agent completes a task, it automatically triggers the next stage. This asynchronous coordination reduces idle time and improves efficiency.

- Conditional branching: Execution paths can adapt dynamically based on intermediate results. For example, a high-risk assessment may trigger additional compliance checks before proceeding.

Without coordinated dependency management, multi-agent systems risk inconsistent or incomplete execution.
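
One way to model an execution graph is with Python's standard-library `graphlib.TopologicalSorter`. The sketch below groups steps into batches that could run in parallel; the workflow steps are hypothetical, and conditional branching is left out for brevity.

```python
from graphlib import TopologicalSorter

# Each key lists the steps it depends on (hypothetical onboarding workflow).
dependencies = {
    "validate_documents": set(),
    "check_compliance": set(),
    "risk_assessment": {"validate_documents", "check_compliance"},
    "create_purchase_order": {"risk_assessment"},
}


def execution_batches(graph: dict) -> list:
    """Group steps into batches; steps in the same batch do not depend on each other."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph contains a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # steps whose dependencies are all done
        batches.append(ready)
        sorter.done(*ready)                 # marking steps done unlocks their successors
    return batches


batches = execution_batches(dependencies)
```

Here the two validation steps land in the first batch, the risk assessment in the second and the purchase order in the third.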

3. State and context management

Multi-agent systems require shared awareness of workflow progress and historical interactions.

- Session-level state tracking: The orchestration layer maintains centralized records of intermediate outputs and task status, ensuring continuity across execution steps.

- Context isolation: Strict boundaries are maintained between user sessions, workflows, and individual agent contexts to prevent data leakage and unauthorized cross-task access—especially in regulated environments.

Robust state management enables coherent execution and operational reliability across complex workflows.
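
A minimal in-memory sketch of session-scoped state follows. `SessionStateStore` is a hypothetical class; a production deployment would more likely back this with a database or cache and enforce isolation through access controls as well.

```python
from collections import defaultdict
from copy import deepcopy


class SessionStateStore:
    """In-memory state keyed by session ID; each session sees only its own data."""

    def __init__(self):
        self._state = defaultdict(dict)

    def record(self, session_id: str, step: str, output, status: str = "done") -> None:
        # Session-level tracking: store each step's output and status.
        self._state[session_id][step] = {"output": output, "status": status}

    def snapshot(self, session_id: str) -> dict:
        # Context isolation: return a copy of this session's state only, so
        # callers cannot read or mutate another session's records.
        return deepcopy(self._state.get(session_id, {}))
```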

4. Execution control and fault handling

Autonomous agents interact with external systems that may fail unpredictably. Orchestration introduces resilience mechanisms.

- Retry logic and timeouts: Failed tool invocations are retried within predefined thresholds to mitigate transient errors.

- Fallback strategies: Secondary tools or alternative workflows can be triggered when primary systems are unavailable.

- Human escalation paths: High-risk or ambiguous outcomes can pause execution and request human validation.

This resilience layer ensures localized failures do not cascade into systemic breakdowns.
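
The three mechanisms can be chained in a single helper, sketched below under simplifying assumptions: `call_with_resilience` and `EscalateToHuman` are hypothetical names, every exception is treated as transient, and per-call timeouts are omitted.

```python
import time


class EscalateToHuman(Exception):
    """Raised when automated recovery is exhausted and a person should decide."""


def call_with_resilience(primary, fallback=None, max_retries=3, base_delay=0.5):
    """Retry the primary tool, then try the fallback, then escalate."""
    for attempt in range(max_retries):
        try:
            return primary()
        except Exception:
            # Exponential backoff between attempts to ride out transient errors.
            if attempt < max_retries - 1:
                time.sleep(base_delay * (2 ** attempt))
    if fallback is not None:
        try:
            return fallback()
        except Exception:
            pass  # fall through to escalation
    raise EscalateToHuman("primary and fallback tools failed")
```

An orchestrator would catch `EscalateToHuman`, pause the workflow and open a review task rather than let the failure propagate.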

5. Governance, compliance and guardrails

Agentic autonomy must operate within enterprise-defined constraints.

- Role-based access control (RBAC): Agents are restricted to specific tools and datasets based on their identities and policies.

- Approval checkpoints: Sensitive actions—such as financial approvals or account modifications—can require explicit human oversight.

- Termination criteria: Limits on time, iterations or token consumption prevent uncontrolled execution loops.

- Comprehensive audit trails: Every prompt, decision and tool invocation is logged for traceability and compliance reporting.

These controls ensure autonomy enhances efficiency without compromising accountability.
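
A compact sketch of how an access check, a termination criterion and an audit trail might sit in front of every tool call. The policy table, agent names and `invoke_tool` function are illustrative assumptions; approval checkpoints are not modeled here.

```python
from datetime import datetime, timezone

# Hypothetical policy: which tools each agent identity may invoke.
ALLOWED_TOOLS = {
    "invoice_agent": {"read_invoice", "create_payment_draft"},
    "report_agent": {"read_invoice"},
}

audit_log = []


def invoke_tool(agent: str, tool: str, iteration: int, max_iterations: int = 10) -> bool:
    """Return True if the call is permitted; every decision is written to the audit log."""
    if iteration > max_iterations:
        decision = "denied: iteration limit reached"       # termination criterion
    elif tool not in ALLOWED_TOOLS.get(agent, set()):
        decision = "denied: tool not permitted for agent"  # role-based access check
    else:
        decision = "allowed"
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "tool": tool,
        "decision": decision,
    })
    return decision == "allowed"
```

Logging denials as well as approvals matters for compliance reporting, since blocked attempts are often the more interesting audit signal.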

6. Cost and resource governance

Agentic workflows can become computationally intensive without monitoring.

- Token usage tracking: Token consumption is monitored at the workflow and agent levels.

- Budget ceilings: Execution can be halted or throttled once cost thresholds are reached.

Embedding cost governance into orchestration ensures financial sustainability alongside innovation.
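
Token tracking and a budget ceiling can be combined in one small object, as in this sketch. `TokenBudget` and `BudgetExceeded` are hypothetical names, and the example halts execution outright rather than throttling.

```python
class BudgetExceeded(Exception):
    """Raised when a charge would push the workflow past its token ceiling."""


class TokenBudget:
    """Tracks token usage per agent and enforces a workflow-level ceiling."""

    def __init__(self, ceiling: int):
        self.ceiling = ceiling
        self.used_by_agent = {}

    @property
    def total_used(self) -> int:
        return sum(self.used_by_agent.values())

    def charge(self, agent: str, tokens: int) -> None:
        # Refuse the charge if it would exceed the ceiling; usage stays unchanged.
        if self.total_used + tokens > self.ceiling:
            raise BudgetExceeded(f"{agent} would exceed the {self.ceiling}-token ceiling")
        self.used_by_agent[agent] = self.used_by_agent.get(agent, 0) + tokens
```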

Types of agentic AI orchestration patterns

Different orchestration models emerge depending on enterprise scale, regulatory intensity and system complexity. While real-world implementations often blend multiple approaches, four dominant patterns define how autonomous agents are coordinated in production environments.

Centralized orchestration

In a centralized model, a primary orchestrator acts as the control layer for all agent activity. It receives objectives, assigns tasks to agents and maintains global visibility into execution progress.

Key characteristics:

- A single supervisory entity manages task allocation and sequencing

- All agent interactions pass through the orchestrator

- Governance, access control and logging are enforced centrally

Advantages:

- Strong oversight and unified observability

- Simplified compliance enforcement and audit trails

- Predictable, structured workflow execution

Considerations:

- Requires horizontal scaling to avoid performance bottlenecks

- Must include redundancy to prevent single points of failure

Best suited for highly regulated environments where control and traceability are critical.

Decentralized orchestration

In decentralized orchestration, agents coordinate directly with one another through defined communication protocols. Decision-making authority is distributed across the system rather than concentrated in a single control entity.

Key characteristics:

- Agents publish and consume task-related events

- Peer-to-peer coordination replaces centralized supervision

- State can be maintained through distributed memory or messaging systems

Advantages:

- High scalability across large agent networks

- Improved fault tolerance

- Greater adaptability in dynamic environments

Considerations:

- Requires strong coordination policies to prevent conflicting actions

- Observability and debugging can be more complex

Best suited for high-scale, real-time systems where resilience and flexibility are priorities.
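
To make the publish-and-consume idea concrete, here is a minimal in-process event bus in which two agents coordinate without a supervisor. `EventBus`, the topic names and both agents are hypothetical; real deployments would typically rely on a message broker and run agents as separate processes.

```python
from collections import defaultdict, deque


class EventBus:
    """Minimal in-process publish/subscribe bus; agents react to each other's events."""

    def __init__(self):
        self._subscribers = defaultdict(list)
        self._queue = deque()

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, topic, payload):
        self._queue.append((topic, payload))

    def run(self):
        # Deliver events in order until no agent publishes anything further.
        while self._queue:
            topic, payload = self._queue.popleft()
            for handler in self._subscribers[topic]:
                handler(payload)


bus = EventBus()
trail = []


def diagnostics_agent(alert):
    trail.append(f"diagnosed {alert}")
    bus.publish("diagnosis.ready", alert)  # hand off by event, not by direct call


def remediation_agent(alert):
    trail.append(f"remediated {alert}")


bus.subscribe("alert.raised", diagnostics_agent)
bus.subscribe("diagnosis.ready", remediation_agent)
bus.publish("alert.raised", "disk-full")
bus.run()
```

Neither agent knows the other exists; each only knows the topics it listens to and emits, which is also why tracing a full workflow takes extra effort in this pattern.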

Hierarchical orchestration

Hierarchical orchestration introduces layered control structures. Strategic agents oversee coordination agents, which in turn manage task-specific execution agents.

Key characteristics:

- Multi-layer supervision model

- Clear segmentation between strategy, coordination and execution

- Structured feedback loops between layers

Advantages:

- Balances autonomy with structured oversight

- Enables modular expansion of agent groups

- Supports domain specialization within large enterprises

Considerations:

- Architectural design requires careful role definition

- Additional layers can introduce latency if not optimized

Best suited for large enterprises managing complex, multi-department workflows.

Federated orchestration

Federated orchestration enables collaboration between independent agent systems across organizational or regulatory boundaries, without centralizing all data.

Key characteristics:

- Each organization maintains its own agent infrastructure

- Interactions occur through standardized communication protocols

- Data exchange is controlled and policy-driven

Advantages:

- Preserves data sovereignty and regulatory compliance

- Enables cross-organization collaboration

- Supports multi-cloud and distributed ecosystems

Considerations:

- Depends on mature interoperability standards

- Requires robust identity and trust management mechanisms

Federated models are particularly relevant in healthcare networks, financial ecosystems and multi-partner supply chains.

In practice, enterprise deployments often blend elements of these patterns, selecting orchestration models based on operational risk, regulatory exposure, scalability demands, and system complexity. The choice of orchestration architecture influences not only performance, but also governance robustness, resilience, and long-term adaptability. As agentic AI becomes embedded within core business processes, orchestration design will increasingly define enterprise AI maturity.

Implementation strategy for agentic AI orchestration

Agentic orchestration requires more than deploying agents into workflows. It demands deliberate alignment between business objectives, infrastructure maturity, governance controls and organizational readiness. The following framework outlines how enterprises can move from experimentation to scalable, production-grade autonomy.

Step 1: Align orchestration with business goals and measurable outcomes

Agentic AI should be introduced where it can drive measurable operational impact — not where it is merely novel.

Begin by identifying high-impact workflows that are:

- Repetitive but cognitively demanding

- Dependent on multiple systems or data sources

- Slowed by manual coordination or decision bottlenecks

- Sensitive to latency, error rates or SLA pressure

Common starting points include:

- Customer onboarding and case resolution

- IT incident triage and remediation

- Financial reconciliation and reporting

- Invoice processing

For each selected workflow, clearly define each agent’s responsibilities. Avoid vague autonomy. Specify:

- Which agent gathers and validates data

- Which agent performs reasoning or classification

- Which agent invokes APIs or updates systems

- Which agent handles escalation or reporting

Finally, tie orchestration to explicit KPIs such as:

- Cycle time reduction

- SLA adherence improvement

- Reduced manual processing costs

- Error rate or rework reduction

Agentic orchestration must be outcome-driven. Without measurable objectives, it remains an experiment rather than an operational capability.

Step 2: Assess system readiness and integration maturity

Autonomous workflows depend heavily on the reliability of integrations. Before scaling, evaluate whether your infrastructure can support coordinated multi-agent execution.

Focus on three core dimensions:

Connectivity readiness

- Are stable, well-documented APIs available for enterprise systems (CRMs, ERPs, databases and ticketing systems)?

- Can agents securely access required data sources without workarounds?

- Are integrations resilient to latency and temporary failures?

Workflow and communication architecture

- Can your environment support asynchronous execution?

- Are workflows able to trigger downstream actions dynamically without blocking?

- Is there a clear mechanism for passing structured outputs between systems?

Security and governance baseline

- Is identity management centralized and enforced consistently?

- Are role-based access controls applied across systems?

- Are audit logs available and retained for compliance needs?

Identifying these gaps early prevents fragile deployments where agent intelligence outpaces infrastructure stability.

Step 3: Select an orchestration framework built for enterprise environments

Agentic AI requires more than workflow automation. It requires coordination, governance and control.

An enterprise-ready orchestration framework should support:

- Multi-agent coordination across complex workflows

- Secure and standardized tool integration

- Role-based permissions and identity enforcement

- Observability across prompts, tool calls and execution states

- Cost and token governance controls

Beyond individual features, the framework should act as a centralized coordination and governance layer. It must:

- Maintain shared state across agents

- Enforce policy guardrails

- Provide structured audit trails

- Prevent uncontrolled execution loops

In production environments, orchestration is what transforms autonomous agents into accountable digital workforces.

Step 4: Adopt a phased rollout model

Scaling autonomy too quickly increases operational risk.

Instead, begin with:

- A single, well-defined pilot workflow

- Limited regulatory exposure

- Clear performance baselines

Run this workflow in a controlled production or sandbox environment. Monitor execution latency, success and failure rates, and how often and where humans have to intervene.

Use these insights to refine planning logic, tool invocation patterns and governance thresholds before expanding to adjacent use cases.

Phased rollout reduces systemic risk while building institutional confidence in agentic systems.

Step 5: Build observability and fault tolerance into the architecture

Autonomous systems must be observable systems.

Without visibility, agent loops, API failures or unintended execution paths can go unnoticed until they become costly.

Ensure your orchestration layer provides:

- Real-time telemetry of agent actions

- Structured logging of prompts, tool calls and outputs

- Retry and fallback logic for tool failures

- Alerting for anomalous behavior

- End-to-end traceability for compliance

Production-grade autonomy requires production-grade monitoring. Observability is not optional — it is foundational.
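
Structured logging of tool calls can be added with a decorator built on Python's standard `logging` module, as sketched here. The `traced` decorator, the logger name and the `lookup_ticket` tool are assumptions for illustration; alerting and distributed tracing are out of scope.

```python
import json
import logging
import time
from functools import wraps

logger = logging.getLogger("agent.telemetry")


def traced(tool_name: str):
    """Decorator that emits one JSON log record per tool call, including failures."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "ok"
            try:
                return func(*args, **kwargs)
            except Exception:
                status = "error"
                raise
            finally:
                # Runs on both success and failure, so no call goes unrecorded.
                logger.info(json.dumps({
                    "tool": tool_name,
                    "status": status,
                    "duration_ms": round((time.perf_counter() - start) * 1000, 2),
                }))
        return wrapper
    return decorator


@traced("lookup_ticket")
def lookup_ticket(ticket_id: str) -> dict:
    # Hypothetical tool: a real one would call a ticketing system.
    return {"id": ticket_id, "state": "open"}
```

Emitting one machine-readable record per call makes it straightforward to aggregate latency and failure rates downstream.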

Step 6: Design for human-in-the-loop checkpoints

Autonomy should increase efficiency, not eliminate accountability. High-risk workflows should include configurable validation points.

Typical human-in-the-loop triggers include:

- Sensitive data modifications

- Low-confidence model outputs

- Policy boundary conditions

These checkpoints preserve trust and regulatory alignment while allowing the majority of routine decisions to proceed autonomously. A hybrid human–agent model is often the most sustainable enterprise pattern.
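
The triggers listed above can be expressed as a single gate function. In this sketch the `ProposedAction` fields and both thresholds are assumed values, and a monetary limit stands in for a policy boundary condition.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    name: str
    confidence: float             # model's self-reported confidence, 0.0 to 1.0
    touches_sensitive_data: bool
    amount: float = 0.0           # monetary value, if any


def needs_human_review(action: ProposedAction,
                       min_confidence: float = 0.8,
                       amount_limit: float = 10_000.0) -> bool:
    """Return True when any human-in-the-loop trigger fires."""
    return (
        action.touches_sensitive_data          # sensitive data modification
        or action.confidence < min_confidence  # low-confidence model output
        or action.amount > amount_limit        # policy boundary condition
    )
```

Keeping the thresholds as parameters lets each workflow tune how much autonomy it allows without changing the gate itself.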

Step 7: Prepare teams for orchestration-centric operating models

Agentic AI changes how workflows are designed, monitored and optimized.

Organizations must develop capabilities in:

- Multi-agent workflow architecture

- Prompt and reasoning optimization

- Governance and policy enforcement

- Observability and performance analysis

- Cost management for token-intensive execution

- Reliability and resilience engineering

- Organizational change management

- Security and access control architecture

- AI operations maturity

This requires cross-functional collaboration across IT, operations, compliance and business stakeholders.

Agentic orchestration is not merely a technology deployment — it is an operational transformation. Enterprises that treat it as such are more likely to achieve sustained, scalable value.

Core components of agentic AI: A module-based breakdown of autonomous LLM-powered systems

Agentic AI systems extend traditional AI with autonomous decision-making, strategic action and continuous learning. These capabilities arise from clearly defined, interdependent modules, each with a distinct role. Below is a module-based breakdown of the critical components of LLM-driven autonomous agents:

Perception module: Enabling contextual awareness

The perception module functions as the “eyes and ears” of agentic AI systems, gathering and processing data essential for informed decision-making.

Key components:

- Data input sources: Gathers data from integrated digital sources (e.g., APIs, databases, streams).

- Data processing and feature extraction: Cleans, structures and prepares data for subsequent cognitive interpretation, extracting essential contexts from raw inputs.

Cognitive module: The strategic decision-making core

This module functions as the “brain,” leveraging large language models (LLMs) to reason, interpret complex scenarios and formulate intelligent strategies.

Key components:

- Large language models (LLMs): Foundation models (e.g., GPT-5, Gemini, Llama 3) serve as the central reasoning engines, capable of understanding nuanced user intents and generating strategic responses.

- Goal definition: Dynamically defines, prioritizes and updates objectives based on contextual feedback, enabling goal-driven behavior.

- Reasoning and strategic planning: Decomposes complex tasks into actionable subtasks, handles conditional branches and formulates adaptive strategies.

- Prompt engineering and optimization: Uses carefully designed prompts to guide LLMs toward accurate reasoning and decision-making, enhancing coherence, compliance and reliability.

Action module: Executing autonomous tasks

The action module serves as the “hands and feet” of the system, translating cognitive decisions into concrete, autonomous actions.

Key components:

- Tools and API integration: Dynamically invokes external services such as APIs (for search and database queries), computational functions (scripts and calculators), etc.

- Execution and task automation: Carries out strategic actions autonomously – such as database updates, task automation or digital interactions – without human intervention.

- Execution monitoring: Tracks task outcomes and execution status in real time, allowing immediate detection and response to anomalies or failures.

Memory module: Retaining knowledge and state

The memory module manages knowledge storage and contextual continuity across interactions and sessions.

Key components:

- Short-term memory: Temporarily holds recent conversations, intermediate results and session-specific states to preserve context within a single interaction or task.

- Long-term memory: Persistently stores valuable data, historical actions, and insights in structured databases, vector stores or knowledge graphs, enabling improved decisions over time.

- Knowledge graphs and vector stores: Provide semantic understanding through structured representation of knowledge, enabling efficient retrieval, reasoning and decision support.

Learning module: Continuous improvement

This module enables AI agents to refine strategies and improve performance through ongoing experiences.

Key components:

- Reinforcement learning and historical analysis: Learns from task outcomes and historical actions to improve future performance.

- Self-reflection and evaluation: Periodically reviews past decisions to identify optimization opportunities, enabling continuous self-improvement.

- Continuous optimization: Adapts system parameters (LLM tuning, prompt refinement) and workflows based on success metrics, ensuring incremental gains in effectiveness and efficiency.

Collaboration module: Enabling multi-agent coordination

This module facilitates coordinated decision-making and communication among multiple specialized agents.

Key components:

- Shared memory (knowledge repository): Acts as a centralized hub for information sharing, enabling coordinated strategies and collective problem-solving.

- Agent communication protocols: Standardized interfaces or messaging protocols facilitate seamless information exchange, collaborative task execution and resource sharing.

- System integration: Harmonizes interactions with enterprise systems, such as CRMs, ERPs, or business applications, through MCP, ensuring alignment with organizational workflows.

Security module: Ensuring operational integrity

This module provides the safeguards essential for secure and compliant operations, protecting data and ensuring trustworthiness.

Key components:

- Threat detection and real-time monitoring: Continuously identifies and mitigates security risks, anomalous behavior or unauthorized actions.

- Data encryption and privacy controls: Implements encryption standards and privacy measures to protect sensitive data.

- Sandboxing and isolation: Provides secure execution environments to prevent unauthorized or harmful code execution, ensuring integrity and trustworthiness.

Feedback and governance module: Maintaining oversight and compliance

This module ensures robust governance, auditability and compliance through continuous feedback and safety oversight.

Key components:

- Human-in-the-loop (HITL) checks: Allows strategic human oversight at critical decision points, maintaining accountability and adherence to business rules.

- Agent-to-agent feedback: Uses specialized secondary agents (validators, critics) for cross-verification of decisions, enhancing accuracy and compliance.

- Automated validation and escalation: Implements business rule engines, test suites and escalation paths that trigger alerts or corrective actions.

- Termination criteria: Clearly defines completion conditions, safety limits (including time, iterations and cost) and escalation rules to prevent uncontrolled execution.

Execution environment and orchestration module: Scalability and reliability

This module provides the infrastructure to reliably and securely deploy, manage and scale agentic AI systems.

Key components:

- Containerization and deployment platforms: Uses containerized environments (Docker, Kubernetes) or serverless frameworks to deploy scalable and reliable agent instances.

- Orchestration frameworks: Leverages platforms such as LangChain, LangGraph, AutoGPT, Vertex AI Agent Engine, Azure OpenAI or IBM BeeAI to manage agent lifecycles, scaling, retries, logging and security policies.

- Monitoring and logging infrastructure: Provides detailed visibility into agent actions, execution logs and performance metrics to support oversight, troubleshooting and improvement.

By clearly mapping and integrating these modules – perception, cognition, action, memory, learning, collaboration, security, feedback and governance, and execution environment – enterprises can effectively leverage LLM-based agentic AI. This structured approach empowers autonomous yet governed workflows, delivering strategic agility, sophisticated problem-solving, continuous improvement and comprehensive compliance – essential for achieving sustainable competitive advantage in digital transformation.

Core stages of an agentic AI workflow

Agentic AI systems follow a structured, goal-driven workflow in which autonomous agents operate through distinct, iterative stages to accomplish complex objectives. This multistage approach ensures that agents not only understand goals but also plan, act, adapt, and deliver results autonomously, mirroring advanced, human-like problem-solving.

A typical agentic AI workflow consists of five core stages, each contributing to the system’s ability to operate independently and intelligently:

Goal ingestion

The agent receives its high-level objective – the starting point for autonomous operation. The goal is often expressed in natural language or structured data and defines what the agent must achieve without prescribing how to achieve it.

Key functions:

- Interpret the intent or business objective behind the goal.

- Enrich it with relevant context (e.g., user history, system state).

- Validate or clarify incomplete or ambiguous goals before proceeding.

Why it matters:

A clear, well-understood goal forms the foundation for autonomous action, ensuring the agent’s reasoning remains aligned with business or operational intent.

Plan generation

In this phase, the agent breaks down the goal into actionable steps, forming a flexible execution strategy. The agent uses LLM-based reasoning, symbolic reasoning or a hybrid of the two to determine the best course of action.

Key functions:

- Decompose the high-level objective into smaller subgoals or tasks.

- Sequence tasks in a logical, goal-oriented order.

- Adapt planning dynamically based on internal logic, prior knowledge or environmental conditions, such as an update to a table that triggers the agent.

Why it matters:

Dynamic planning enables agents to adapt strategies to the complexity of their goals, avoiding rigid, rule-based workflows and facilitating flexible task completion.

Tool and API invocation

Once the plan is in place, the agent interacts with external systems – via APIs, databases, and software tools – to gather information or take action.

Key functions:

- Execute API calls, database queries or programmatic actions.

- Retrieve data from internal or external sources.

- Trigger automated workflows or updates.

Why it matters:

This stage connects agent reasoning to real-world actions, allowing the agent to operate effectively within business environments or digital ecosystems.

State tracking

While working toward a goal, the agent tracks intermediate outcomes and maintains awareness of its environment. This enables context-aware execution and dynamic re-planning when necessary.

Key functions:

- Track progress across multiple subtasks or workflows.

- Store intermediate results for later use.

- Adjust actions or planning in response to changing conditions or unexpected outcomes.

Why it matters:

Effective state tracking ensures resilience and adaptability, allowing the agent to recover from errors, adapt to real-time events and maintain coherent progress toward its goal.

Result synthesis

At the final stage, the agent produces a comprehensive output – whether a decision, report, or system update – that fulfills the original objective.

Key functions:

- Consolidate and format results for human review or automated downstream use.

- Communicate final outcomes via reports, system notifications or direct API responses.

Why it matters:

Result synthesis delivers tangible, traceable outcomes from the agent’s autonomous reasoning cycle, ensuring transparency and business alignment.

By cycling through these five stages – goal ingestion, plan generation, tool and API invocation, state tracking and result synthesis – agentic AI systems can operate with minimal human intervention while remaining adaptable and efficient. This structured approach enables businesses to confidently deploy AI agents in complex, dynamic environments while ensuring control, traceability, and continuous improvement.
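The five stages can be sketched as a single control loop. This is an illustrative skeleton under simplifying assumptions: the `planner` callable stands in for LLM-based planning, `tools` is a hypothetical mapping from step names to zero-argument callables, and re-planning on failure is omitted for brevity.

```python
from typing import Callable


def run_agent(goal: str,
              planner: Callable[[str], list[str]],
              tools: dict[str, Callable[[], str]]) -> dict:
    # 1. Goal ingestion: normalize and validate the objective.
    goal = goal.strip()
    if not goal:
        raise ValueError("goal must not be empty")

    # 2. Plan generation: decompose the goal into named steps.
    plan = planner(goal)

    # 3 and 4. Tool invocation with state tracking after every step.
    state = {"goal": goal, "completed": [], "failed": [], "results": {}}
    for step in plan:
        tool = tools.get(step)
        if tool is None:
            # Record the gap instead of crashing, so a re-planner could react.
            state["failed"].append(step)
            continue
        state["results"][step] = tool()
        state["completed"].append(step)

    # 5. Result synthesis: consolidate the outcome for downstream use.
    state["summary"] = f"{len(state['completed'])}/{len(plan)} steps completed"
    return state
```

Keeping all intermediate results in one `state` dictionary is what makes the run traceable: the same structure can be logged, inspected by a human reviewer or handed to a re-planning step.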


Adoption considerations for agentic AI: Performance, security and governance

Adopting agentic AI powered by standards such as MCP offers major benefits, but organizations should also weigh practical trade-offs and requirements.

Moving from pilot agents to production-grade autonomy requires disciplined attention to performance, security, governance and regulatory readiness. The following considerations help ensure that agentic AI scales safely and sustainably.

Performance trade-offs: Latency vs. throughput

One concern is that breaking tasks into multiple LLM and tool calls – as agentic AI does – can introduce latency. Each step (plan, call tool, get result, call LLM again) adds network overhead or computation time. In a straightforward question-and-answer case, a single LLM call might take 2 seconds. An agentic approach to the same question might spend 1 second deciding to call a tool, 1 second on the tool call and 1 second for the LLM to integrate the result, for 3 seconds in total. Modularity carries overhead.

How to manage this:

- Invoke tools only when needed.

  - Prompt the agent to attempt a direct answer first.

  - Fall back to tool calls for complex or data-dependent queries.

  - Avoid overplanning for simple tasks.

- Leverage batching and parallelism.

  - Identify independent data sources or actions.

  - Spawn parallel calls (e.g., query two databases at once).

  - Join results before feeding them back to the LLM.

- Use local MCP servers for low latency.

  - Run critical integrations as subprocesses or in-memory services.

  - Co-locate database connectors or APIs with the agent cluster.

  - Minimize network round trips.

- Implement smart caching.

  - Cache repeated queries within a conversation or session.

  - Validate cache freshness and enforce TTLs to prevent stale data.

  - Respect privacy and consistency constraints when caching sensitive information.

- Scale for throughput.

  - For large batches (e.g., 1,000 tasks), spin up multiple agents.

  - Distribute tasks or steps across horizontal instances.

  - Consider a hybrid ETL/RPA preprocessing stage to gather inputs in bulk.

- Monitor and optimize performance.

  - Track key metrics: time per task, average latency, throughput.

  - Identify bottlenecks (slow external APIs, heavy computations).

  - Apply targeted optimizations such as async designs, additional caching and local hosting.

By combining minimal tool use, parallel execution, local hosting, smart caching, scalable orchestration and continuous monitoring, enterprises can keep agentic AI both powerful and performant.
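Two of these techniques, parallel calls and TTL-bound caching, combine naturally. The sketch below uses only the Python standard library; `TTLCache`, `query_source` and `gather_context` are hypothetical names, and `asyncio.sleep` stands in for real network or database I/O.

```python
import asyncio
import time


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float):
        self.ttl = ttl_seconds
        self._store = {}  # key -> (expires_at, value)

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if time.monotonic() >= expires_at:
            del self._store[key]  # stale: drop it so callers refetch
            return None
        return value

    def set(self, key, value):
        self._store[key] = (time.monotonic() + self.ttl, value)


async def query_source(name: str, delay: float) -> str:
    await asyncio.sleep(delay)  # placeholder for a real API or database call
    return f"{name}-result"


async def gather_context(cache: TTLCache) -> list[str]:
    cached = cache.get("context")
    if cached is not None:
        return cached
    # Independent sources are queried concurrently, then joined in order.
    results = list(await asyncio.gather(
        query_source("crm", 0.05),
        query_source("billing", 0.05),
    ))
    cache.set("context", results)
    return results
```

With two independent sources, the concurrent call costs roughly the slower of the two delays rather than their sum, and a second call within the TTL returns without touching either source.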

Security: Prompt injection, context isolation, sandboxing and access control

Mitigate prompt injection and control outputs

- Use structured function-calling interfaces instead of free-form prompts.

- Validate every model output:

  - Ensure JSON parses correctly.

  - Re-prompt or error out on malformed or unauthorized calls.

- Sanitize inputs inserted into prompts:

  - Escape or filter substrings that resemble system instructions.

  - Use libraries that detect and neutralize known injection patterns.
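Output validation of this kind can be sketched as a strict parser that sits between the model and the tool layer. The allowlist, the `{"tool": ..., "arguments": ...}` message shape and the `InvalidToolCall` exception are assumptions made for illustration; a real deployment would use the schema of its own function-calling interface.

```python
import json

# Hypothetical allowlist: tool name -> required argument names and types.
ALLOWED_TOOLS = {
    "lookup_order": {"order_id": str},
    "send_email": {"to": str, "subject": str},
}


class InvalidToolCall(ValueError):
    """Raised when model output is malformed or requests an unapproved call."""


def parse_tool_call(raw_output: str) -> dict:
    try:
        call = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise InvalidToolCall(f"malformed JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise InvalidToolCall("expected a JSON object")

    name = call.get("tool")
    if not isinstance(name, str) or name not in ALLOWED_TOOLS:
        raise InvalidToolCall(f"tool not allowed: {name!r}")

    args = call.get("arguments")
    schema = ALLOWED_TOOLS[name]
    # Reject missing and unexpected arguments alike.
    if not isinstance(args, dict) or set(args) != set(schema):
        raise InvalidToolCall(f"arguments must be exactly {sorted(schema)}")
    for key, expected_type in schema.items():
        if not isinstance(args[key], expected_type):
            raise InvalidToolCall(f"{key} must be {expected_type.__name__}")
    return {"tool": name, "arguments": args}
```

The caller can catch `InvalidToolCall` and either re-prompt the model with the error message or abort the step, matching the "re-prompt or error out" guidance above.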

Defend against indirect prompt injection

Agentic systems frequently process third-party data (emails, PDFs, web content, ticket descriptions) that may contain hidden or malicious instructions.

Mitigation strategies include:

- Treat all external content as untrusted input

- Use secondary validation models or sanitization layers to inspect retrieved content before passing it to primary reasoning agents

- Strip or neutralize embedded instructions that attempt to override system policies

This dual-layer validation approach prevents agents from executing unintended actions embedded within data sources.

Enforce least-privilege tool design

- Curate a minimal, finite toolset for each agent.

- Avoid exposing OS-level commands or unrestricted endpoints.

- Even if an injection occurs, the model can only invoke approved, schema-bound functions.

Secure execution sandboxing

If agents are permitted to perform actions or execute code (e.g., Python scripts for analysis or automation), execution should occur within isolated, short-lived environments.

Best practices include:

- Running tasks inside ephemeral containers or serverless sandboxes

- Restricting the sandbox's network access to internal systems

- Limiting filesystem and OS-level permissions

- Monitoring runtime behavior for anomalies

Sandboxing ensures that autonomous execution remains isolated, preventing unintended actions from impacting core enterprise systems.

Encrypt context and protect data

- Transmit all MCP messages over TLS/HTTPS (or secure pipes for local servers).

- Encrypt sensitive data at rest and in transit.

- Use tokenization or masking for sensitive fields.

- Maintain strict context isolation so one session’s data cannot leak into another.

Implement access control and identity propagation

- Carry user identity and role through the MCP handshake (e.g., OAuth tokens, user IDs).

- Apply RBAC checks in each tool:

  - Reject calls if the user’s role lacks permission.

  - Enforce parameter-level restrictions (e.g., regular users fetch only their own records).
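A tool-level check might look like the following sketch. It assumes the caller's identity has already been authenticated and propagated to the tool; the role names, permission strings, `Caller` type and in-memory `RECORDS` store are hypothetical placeholders.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "admin": {"read_any_record", "read_own_record"},
    "user": {"read_own_record"},
}

# Stand-in for a real data store, keyed by record owner.
RECORDS = {
    "u1": {"owner": "u1", "balance": 120},
    "u2": {"owner": "u2", "balance": 75},
}


@dataclass(frozen=True)
class Caller:
    user_id: str
    role: str


def fetch_record(caller: Caller, record_owner_id: str) -> dict:
    """Tool entry point: authorize first, then touch the data."""
    perms = ROLE_PERMISSIONS.get(caller.role, set())
    if "read_any_record" in perms:
        allowed = True
    else:
        # Parameter-level restriction: regular users may read only their own record.
        allowed = "read_own_record" in perms and record_owner_id == caller.user_id
    if not allowed:
        raise PermissionError(f"{caller.user_id} may not read {record_owner_id}")
    return RECORDS[record_owner_id]
```

Placing the check inside the tool, rather than in the prompt, means that a successful injection still cannot widen what the agent is able to read.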

Governance, auditing and rate limiting

- Log every JSON-RPC request and response for audit trails.

- Track and enforce call quotas per agent or user to control cost and risk.

- Provide dashboards for admins to:

  - Review usage patterns.

  - Adjust rate limits or tool availability dynamically.
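Per-agent or per-user call quotas can be enforced with a sliding-window counter. The sketch below is one possible approach, held in memory for simplicity; `CallQuota` and its parameters are hypothetical, and a multi-instance deployment would need a shared store instead.

```python
import time
from collections import defaultdict


class CallQuota:
    """Allow at most max_calls per principal within a sliding time window."""

    def __init__(self, max_calls: int, window_seconds: float, clock=time.monotonic):
        self.max_calls = max_calls
        self.window = window_seconds
        self.clock = clock  # injectable so tests can control time
        self._calls = defaultdict(list)  # principal -> timestamps of recent calls

    def allow(self, principal: str) -> bool:
        now = self.clock()
        # Keep only the calls that still fall inside the window.
        recent = [t for t in self._calls[principal] if now - t < self.window]
        if len(recent) >= self.max_calls:
            self._calls[principal] = recent
            return False
        recent.append(now)
        self._calls[principal] = recent
        return True
```

Because the limits are plain attributes, an admin dashboard could adjust `max_calls` at runtime without restarting the agent.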

By structuring calls, limiting privileges, encrypting data, enforcing identity checks and auditing usage, enterprises can harden agentic AI against injection attacks, data leaks and unauthorized access.

Governance and compliance: Monitoring, SLAs and regulatory readiness

Observability and dashboards

- Aggregate JSON-RPC logs into a centralized log store.

- Provide dashboards showing:

  - Agent success and error rates.

  - Average and percentile response times.

  - Tool usage per conversation or job.

- Configure alerts for anomalies such as sudden spikes in failures or unexpected tool calls.

Service Level Agreements (SLAs)

- Define reliability targets (e.g., 99.9% uptime) and responsiveness goals (e.g., under 5 seconds per request).

- Architect for high availability:

  - Auto-scale agent and MCP server instances.

  - Implement failover mechanisms to isolate failing integrations.

  - Provide fallback behaviors such as routing to human support or queuing requests.

Regulatory and data-protection frameworks

- SOC 2 and ISO 27001:

  - Ensure encryption at rest and in transit for all MCP communications.

  - Maintain strict context isolation so one user’s data never leaks into another’s session.

  - Host on premises or in a compliant cloud environment as needed.

Audit trails and user attribution

- Log every agent action alongside the invoking user’s identity and timestamp.

- Correlate agent sessions with enterprise identity systems (SSO, OAuth).

- Store immutable records of:

  - Prompts received

  - Tools called and parameters used

  - Results returned and final outputs
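One common way to approximate immutability in application code is a hash-chained log, in which every entry commits to the one before it. The sketch below makes tampering detectable rather than impossible; true immutability would still depend on write-once storage. The `AuditLog` class and its field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only log in which each entry includes the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the hash itself, in a stable key order.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def append(self, user_id: str, prompt: str, tool: str, params: dict, result: str) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "prompt": prompt,
            "tool": tool,
            "params": params,
            "result": result,
            "prev_hash": self._entries[-1]["hash"] if self._entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = self.GENESIS
        for entry in self._entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```

Each entry carries the invoking user's identity and a UTC timestamp, which covers the attribution requirement in the same record as the prompt, tool call and result.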

Policy enforcement and ethical guardrails

- Integrate content filtering or moderation endpoints to catch:

  - Harassment, hate speech or policy violations

  - Unintended data disclosures

- Embed human-in-the-loop checkpoints for high-risk outputs (e.g., public communications).

- Log all human interventions (e.g., “AI recommended X; human approved Y”).

Execution safeguards and loop prevention

Autonomous agents can enter recursive reasoning or tool-calling loops, rapidly increasing token consumption and cost.

Mitigation strategies include:

- Enforcing maximum tool-call or turn limits per objective

- Defining cost thresholds that automatically pause execution

- Detecting repeated tool calls with identical parameters

- Escalating stalled workflows to human review

Execution safeguards provide deterministic execution boundaries, preventing uncontrolled recursion, resource exhaustion, and unintended downstream actions.
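The first three safeguards can be combined in a single guard object that is consulted before every tool call. This is a minimal sketch: `ExecutionGuard`, its limits and the per-call cost figure are hypothetical, and escalation to human review is left to the caller that catches the exception.

```python
import json


class ExecutionLimitExceeded(RuntimeError):
    """Raised when an objective exceeds one of its execution boundaries."""


class ExecutionGuard:
    def __init__(self, max_tool_calls: int, max_cost_usd: float, max_repeats: int = 2):
        self.max_tool_calls = max_tool_calls
        self.max_cost_usd = max_cost_usd
        self.max_repeats = max_repeats
        self.tool_calls = 0
        self.cost_usd = 0.0
        self._seen = {}  # (tool, canonical params) -> number of identical calls

    def check(self, tool: str, params: dict, cost_usd: float) -> None:
        """Call before each tool invocation; raises once any limit is crossed."""
        key = (tool, json.dumps(params, sort_keys=True))
        self._seen[key] = self._seen.get(key, 0) + 1
        self.tool_calls += 1
        self.cost_usd += cost_usd

        if self.tool_calls > self.max_tool_calls:
            raise ExecutionLimitExceeded("tool-call limit reached")
        if self.cost_usd > self.max_cost_usd:
            raise ExecutionLimitExceeded("cost threshold exceeded")
        if self._seen[key] > self.max_repeats:
            raise ExecutionLimitExceeded("repeated identical tool call")
```

Serializing the parameters with sorted keys makes two calls with the same arguments in a different order count as identical, which is what loop detection needs.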

Continuous improvement and oversight

- Establish an agent review board to:

  - Periodically audit logs and usage metrics

  - Identify failure patterns or bias incidents

  - Update prompts, schemas or toolsets

- Continuously track model performance to detect drift, and update prompts or retrain models as needed to maintain accuracy.

- Track KPIs (throughput, latency, user satisfaction) and iterate on workflow design.

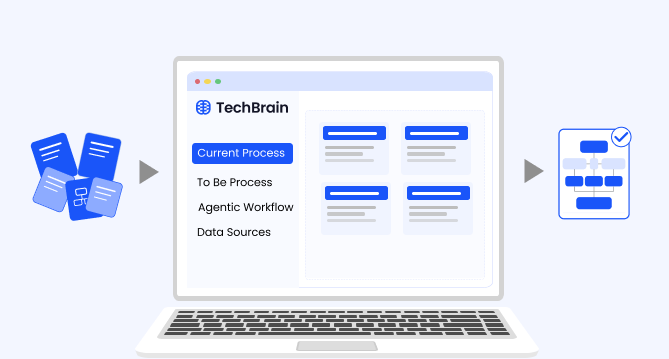

How ZBrain Builder’s agent crew framework operationalizes agentic AI principles

ZBrain Builder operationalizes agentic AI by providing a modular, multi-agent crew framework in which autonomous agents collaborate dynamically to execute complex tasks. It integrates goal-driven autonomy, adaptive planning, tool orchestration and continuous monitoring while maintaining enterprise-grade governance, observability and compliance.

The crew framework breaks down the agentic AI workflow into distinct operational stages – each aligned with principles of autonomy, reasoning, adaptation and result accountability. Below is a comprehensive mapping of these stages to their implementation within ZBrain Builder.

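| Agentic stage | ZBrain Builder components and features |
|---|---|
| Goal ingestion | Crew setup and input source queue; supervisor agent; context enrichment via MCP; optional pre-execution approval |
| Plan generation | Crew structure definition; dynamic planning (chain-of-thought reasoning, reflection loops, self-critique); memory integration; plan logging |
| Tool/API invocation | Flows with prebuilt connectors; MCP server integration; least-privilege credential handling; retry logic and failure handling |
| State tracking | Session manager; short-term and long-term memory; dynamic re-planning; observability dashboards |
| Result synthesis | Agent output/app output nodes; optional evaluator agents; agent logs and decision trails |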

Goal ingestion: Capturing and clarifying the mission

An autonomous agent begins with a goal-driven objective rather than a rigid script. It should interpret high-level intents and determine execution steps without micro-level instructions.

ZBrain Builder crew implementation:

- Crew setup (overview and create queue): Users initiate the process by defining the crew name and description, and selecting the LLM and framework (LangGraph or other orchestration engines).

- Input sources (e.g., webhooks, Google Drive uploads, email triggers) are connected through the queue in the create queue stage.

Goal-capturing mechanism:

- Incoming goals (e.g., “generate a marketing report” or “screen resumes”) arrive through the input source queue.

- The supervisor agent captures these goals and processes the raw payload using LLM reasoning and short-term memory context.

Context enrichment via MCP:

ZBrain Builder integrates with the Model Context Protocol (MCP), enriching each incoming goal with context such as:

- Prior task history

- Organizational preferences

- Knowledge base lookups

This ensures the agent starts with both mission clarity and organizational context.

Pre-execution governance:

Optional configurations allow managerial approval or human clarification prompts before the crew proceeds with execution.

Plan generation: Building the autonomous strategy

Rather than following static rules, an agent decomposes goals into adaptive, multistep plans, reasoning through subgoals and dynamically updating as needed.

ZBrain Builder crew implementation:

- Define crew structure: The supervisor agent assigns autonomous subordinate agents, each with specialized roles (e.g., data retrieval, content generation, analysis, validation). ZBrain Flow agents can also be added from the agent library for reusable task flows.

- Dynamic planning: LLMs within the supervisor or specialized planners use:

  - chain-of-thought (CoT) reasoning

  - reflection loops

  - self-critique mechanisms

- Plans are dynamically constructed based on:

  - goal type

  - available tools and resources

  - live session memory

- Memory integration: Vector store or graph store integration allows referencing prior completions, domain-specific data or organizational processes. The crew also supports in-memory, long-term and short-term memory integration.

- Governance in planning: Plans are auditable through logging.

Tool and API invocation: Secure and scalable execution

Agents must act autonomously, invoking APIs, tools or internal services while staying compliant with organizational boundaries and security protocols.

ZBrain Builder crew implementation:

- Flows within subordinate agents: Each worker agent has a customizable Flow library option that executes actions via prebuilt connectors (e.g., Salesforce, Jira, Google Drive, GitHub).

- MCP server integration: ZBrain Builder uses MCP servers to standardize tool invocations, providing enterprise connectors for CRMs, ERPs, data lakes and custom APIs.

- Security-first design: API credentials are stored securely with least-privilege access control. Specific permissions can be assigned at the crew, agent or tool level.

- Resilience and error handling: Built-in mechanisms include retry logic and failure handling. Critical actions can be routed for human sign-off.
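The source does not describe how ZBrain Builder implements its retry logic, so the following is a generic sketch of the pattern rather than the platform's behavior: retry with exponential backoff, then surface the failure so the caller can escalate. `call_with_retry` and its parameters are hypothetical, and production code would normally retry only on errors known to be transient.

```python
import time


def call_with_retry(action, max_attempts: int = 3, base_delay: float = 0.5,
                    sleep=time.sleep):
    """Run action(); on failure wait base_delay, then 2x, 4x ... and try again."""
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return action()
        except Exception as exc:  # broad for illustration only
            last_error = exc
            if attempt < max_attempts:
                sleep(base_delay * 2 ** (attempt - 1))
    # All attempts failed: raise so the caller can route to human sign-off.
    raise RuntimeError(f"failed after {max_attempts} attempts") from last_error
```

Passing `sleep` as a parameter keeps the function testable without real delays and makes the backoff schedule easy to inspect.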

State tracking: Contextual memory and adaptive monitoring

Agents must track state, remember intermediate progress and adjust dynamically to real-time feedback, much like a project manager.

ZBrain Builder crew implementation:

- Session manager: Tracks end-to-end task progression, maintaining awareness of:

  - pending vs. completed subtasks

  - intermediate results

- Short-term and long-term memory: Short-term memory (LLM context window) ensures continuity during active sessions. Long-term memory via vector databases or knowledge graphs enables cross-session continuity and knowledge reuse.

- Dynamic re-planning: If real-time feedback or tool outputs conflict with expected outcomes, ZBrain Builder can trigger plan adjustments.

- Observability dashboards: Crew activity, state changes and performance metrics (e.g., session times, success rates, token costs) are displayed on the agent’s performance dashboard.

Result synthesis: Delivering explainable and auditable outputs

The agent’s work culminates in producing a cohesive, high-quality outcome that can be validated and explained.

ZBrain Builder crew implementation:

- Agent output/app output nodes: After completing tasks, subordinate agents or dedicated synthesis agents compile final outputs – summaries, reports, or downstream API calls.

- Output validation: Optional evaluator agents validate results before release. Sensitive outputs (e.g., PII handling) can be filtered or masked.

- Governance and compliance: Full traceability via agent logs, with downloadable decision trails. Final outputs can be delivered via API/webhook or surfaced through the ZBrain Builder UI, while key session data is fed back into long-term memory for continuous learning.

ZBrain Builder agent crew framework summary

| Crew component | Purpose |
|---|---|
| Supervisor agent | Orchestrates end-to-end task execution, manages subordinate allocation, and maintains global context. |
| Subordinate agents | Execute specific tasks (data fetch, generation, validation, reporting). |
| ZBrain Flow agents | Handle specialized workflows (e.g., data enrichment, notification handling). |
| MCP server connectors | Secure integration to enterprise tools and APIs. |
| Session manager | Tracks task progress, intermediate results, and context updates. |
| AgentOps layer | Provides observability and session management. |
| Security layer | Enforces access controls, credential protection, and compliance monitoring. |

The ZBrain Builder crew model turns agentic AI theory into a fully governed operational system that provides:

- Autonomy where it improves speed and efficiency

- Human-in-the-loop touchpoints where business risks demand oversight

- Explainable decisions through full traceability

- Reusable building blocks for scalable automation across business functions

This approach delivers trusted agentic AI orchestration at enterprise scale—combining flexibility, autonomy, and security without sacrificing performance.

Best practices for implementing and scaling agentic AI with ZBrain Builder

Implementing agentic AI should be viewed as a continuous program, not a one-off project. Industry experts recommend iterative rollout and continuous improvement for agentic initiatives, and teams should plan to refine agents and processes over time. In practice, this means establishing feedback loops and metrics from the outset. The guidelines below outline key design, monitoring, governance and iteration practices – each mapped to ZBrain Builder features (Flows, connectors, logs, etc.) to make them concrete.

Design for modularity and reliability

- Specialize agent tasks: Break complex processes into multiple focused agents. Assign each agent a single, narrowly defined task (e.g., “Summarize report” vs. “Fetch data”). In ZBrain Builder, use Flows to orchestrate these agents. For example, build a Flow where one agent extracts paragraphs from a report and another generates the final executive summary.

- Use robust error handling and retries: Always include retry logic in your Flows. ZBrain Flow’s automated reruns feature can auto-retry failed steps. For example, if a data lookup call fails, configure the Flow to wait and retry or continue. Each Flow step in ZBrain logs its output and error messages, visible in the Flow logs, so you can detect failures and loop back as needed.

- Leverage prebuilt connectors: Integrate agents with enterprise systems using ZBrain’s connector library (e.g., Slack, email, Salesforce, databases). For example, use an SMTP or Slack connector to send an approval request to a manager. This ensures agents have access to live enterprise data and can take actions such as updating a database or sending alerts.

- Embed human-in-the-loop steps: Do not assume 100% autonomy from the start. In your Flow design, include explicit approval or feedback steps that allow a human to intervene. ZBrain’s approval component can send an approval request and pause the Flow until a manager responds. This protects against costly mistakes and builds trust.

- Build a shared knowledge base: Use ZBrain’s knowledge base as the common source of truth for agents. Store corporate policies, FAQs and reference documents there. For example, a compliance agent and an analysis agent can query the same KB for regulatory rules, ensuring consistency and accuracy.

Monitor performance with KPIs and alerts

- Track key metrics with dashboards: Use ZBrain’s dashboard to monitor operational KPIs, including:

  - Processing time: Total duration for an agent to complete a task.

  - Session time: End-to-end time from session start to finish.

  - Satisfaction score: Direct user feedback on agent performance.

  - Tokens used: Computational tokens consumed per task or session.

  - Cost: Expense per session based on token usage.

ZBrain’s built-in reports present these metrics – processing time, session time, token usage, cost breakdowns and satisfaction – in one place. Set alerts for anomalies and track trends to drive continuous improvement.
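For teams that export session data for their own analysis, the same KPIs are straightforward to aggregate. The sketch below is independent of ZBrain's reporting: the `Session` fields are hypothetical, and `PRICE_PER_1K_TOKENS_USD` is a made-up rate that should be replaced with the actual model pricing.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional

# Hypothetical blended price; real rates vary by model and by input/output tokens.
PRICE_PER_1K_TOKENS_USD = 0.01


@dataclass
class Session:
    processing_seconds: float
    session_seconds: float
    tokens_used: int
    satisfaction: Optional[int] = None  # e.g., a 1-5 rating, if the user gave one


def summarize(sessions: list[Session]) -> dict:
    """Aggregate the per-session KPIs into averages and totals."""
    ratings = [s.satisfaction for s in sessions if s.satisfaction is not None]
    total_tokens = sum(s.tokens_used for s in sessions)
    return {
        "avg_processing_seconds": mean(s.processing_seconds for s in sessions),
        "avg_session_seconds": mean(s.session_seconds for s in sessions),
        "total_tokens": total_tokens,
        "total_cost_usd": total_tokens / 1000 * PRICE_PER_1K_TOKENS_USD,
        # Average only over rated sessions; None when nobody rated.
        "avg_satisfaction": mean(ratings) if ratings else None,
    }
```

Averaging satisfaction only over rated sessions avoids treating a missing rating as a score of zero, which would otherwise drag the metric down as volume grows.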

- Review logs and agent histories: Use ZBrain Flow logs and audit logs to diagnose issues. Logs capture inputs, outputs and errors at each step. Regular audits help uncover recurring failures and guide refinements. Logs can be exported for deeper analysis.

- Incorporate user feedback: Build feedback loops into workflows. For example, after an agent produces a summary, ask the user to rate it. ZBrain’s satisfaction metric quantifies user experience, helping prioritize retraining or flow optimization.

Enforce governance and security

- Implement role-based access control (RBAC): Define clear roles (admin, builder, operator) and enforce least privilege. Restrict Flow design to builders, while allowing operators to run workflows but not edit them.

- Protect data and endpoints: Host ZBrain in a VPC or on premises to keep data within your network. Encrypt data at rest and in transit. Secure API credentials with secrets management and restrict network access.

- Embed policy checks: Add guardrails into Flows. Use the knowledge base to store compliance rules that agents must query before sending outputs.

- Add execution guardrails: Incorporate cost limits, approval checkpoints and compliance monitoring so that autonomous workflows remain secure, auditable and aligned with organizational policies.

Iterate and evolve continuously

- Plan for ongoing iteration: Treat deployment as a baseline. Review metrics regularly (monthly or quarterly) and refine prompts and Flows as needed. Monitoring is an ongoing process.

- Manage versions and velocity: Track deployment velocity by monitoring the number of new Flows, agents or updates per sprint. Automate Flow and KB testing prior to release.

- Scale modularly: Expand workflows incrementally once ROI is proven. Reuse modular agents across teams, publishing them through the agent directory. Monitor each workflow independently to manage scale.

- Embrace feedback loops: Continuously feed new data into the system. Add logs of repeated failures to the knowledge base as counterexamples. Use user ratings to refine prompts. Connect new data sources via ZBrain connectors to extend capabilities without redesigning workflows.

Endnote

In conclusion, agentic AI marks a transformative step for enterprise AI, moving beyond one-off prompts and simple chatbots to fully autonomous agents that can plan, act and learn. ZBrain™, an enterprise AI orchestration platform, with its low-code Flow interface, connector library, shared knowledge base and built-in monitoring, provides the building blocks for these intelligent pipelines.

Successfully adopting agentic AI requires a phased approach: start with low-risk pilots, embed human-in-the-loop checkpoints, and iteratively refine agents based on metrics, including processing time, session time, satisfaction scores, token usage, and cost. Govern these workflows with clear access controls and comprehensive audit logs. As you scale, use modular Flows and reusable agents to accelerate new use cases while maintaining consistency and compliance.

By combining strategic design, rigorous monitoring and robust governance, organizations can deploy ZBrain-powered agents to automate complex processes, cut error rates and deliver measurable productivity gains. With continuous iteration and a focus on performance and security, agentic AI will become a core capability, transforming AI initiatives into a resilient and scalable engine for innovation.

Unlock the power of autonomous agentic AI with ZBrain’s low-code Flow interface, pre-built connectors, and real-time analytics. Start building intelligent, end-to-end agents in minutes with ZBrain!

Author’s Bio

An early adopter of emerging technologies, Akash leads innovation in AI, driving transformative solutions that enhance business operations. With his entrepreneurial spirit, technical acumen and passion for AI, Akash continues to explore new horizons, empowering businesses with solutions that enable seamless automation, intelligent decision-making, and next-generation digital experiences.

Frequently Asked Questions

What is agentic AI orchestration?

What orchestration patterns are commonly used in agentic AI systems?

Agentic AI orchestration can follow several architectural patterns depending on enterprise scale, governance needs and system complexity. The most common patterns include:

- Centralized orchestration – A single control layer coordinates all agents, manages task allocation and enforces governance policies.

- Hierarchical orchestration – Supervisor agents oversee specialized sub-agents, creating layered decision-making structures.

- Decentralized orchestration – Agents coordinate directly through shared events or messaging systems without a central controller.

- Federated orchestration – Independent agent systems collaborate across organizational or regulatory boundaries while maintaining data sovereignty.

Each pattern offers trade-offs between control, scalability, resilience and fault tolerance. In regulated environments, centralized or hierarchical models provide stronger governance and observability. In high-scale, real-time systems, decentralized models offer greater resilience and adaptability. Mature enterprise AI orchestration platforms like ZBrain Builder support modular, crew-based designs that can align with these patterns while maintaining auditability, security and policy enforcement.
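To make the hierarchical pattern concrete, here is a minimal sketch in which a supervisor routes tasks to specialized sub-agents and records each dispatch for auditing. The `Supervisor` class and the two stub agents are hypothetical illustrations, not part of ZBrain Builder; real agents would call models and tools rather than return fixed strings.

```python
from typing import Callable, Dict, List, Tuple

# An agent is modeled here as a plain function from a task string to a result string.
Agent = Callable[[str], str]


def research_agent(task: str) -> str:
    return f"research notes for: {task}"


def summary_agent(task: str) -> str:
    return f"summary of: {task}"


class Supervisor:
    """Routes each planned task to a named sub-agent and logs the dispatch."""

    def __init__(self, workers: Dict[str, Agent]) -> None:
        self.workers = workers
        self.audit_log: List[Tuple[str, str]] = []

    def run(self, plan: List[Tuple[str, str]]) -> List[str]:
        results = []
        for worker_name, task in plan:
            if worker_name not in self.workers:
                raise KeyError(f"no sub-agent registered as '{worker_name}'")
            self.audit_log.append((worker_name, task))
            results.append(self.workers[worker_name](task))
        return results
```

Because every dispatch passes through one supervisor, the audit log gives a single place to observe what ran, which is the governance advantage the centralized and hierarchical patterns trade against resilience.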

What is an agentic workflow, and why is it important for enterprises?

An agentic workflow is an AI-driven, multi-step process in which autonomous “agents” plan, execute, monitor, and adapt tasks without manual intervention at each step. Unlike single-prompt chatbots, agentic AI handles complex objectives, such as compiling a quarterly report or resolving a service desk ticket, by decomposing goals into discrete actions, invoking external systems, and synthesizing results. For enterprises, this approach delivers higher reliability, consistency, and scalability, enabling teams to automate end-to-end processes while maintaining oversight and governance.

How does ZBrain Builder enable goal ingestion in an agentic workflow?

ZBrain Builder captures objectives through its flexible Flow triggers: scheduled jobs, API/webhook calls, or UI-driven inputs (text, files, or form fields). This ensures that every agent begins with a clear, structured understanding of the task at hand.
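One way to picture goal ingestion is as a normalization step that turns different trigger types into the same structured record. The sketch below is an assumption-laden illustration: the `Goal` dataclass, the `from_webhook` and `from_schedule` helpers, and the `objective` field name are hypothetical and do not reflect ZBrain Builder's actual trigger payloads.

```python
from dataclasses import dataclass, field
from typing import Any, Dict


@dataclass
class Goal:
    source: str                 # which trigger produced the goal
    objective: str              # what the agent is asked to achieve
    inputs: Dict[str, Any] = field(default_factory=dict)


def from_webhook(body: Dict[str, Any]) -> Goal:
    """Build a Goal from a webhook payload (hypothetical 'objective' key)."""
    objective = str(body.get("objective", "")).strip()
    if not objective:
        raise ValueError("webhook payload must include a non-empty 'objective'")
    inputs = {k: v for k, v in body.items() if k != "objective"}
    return Goal(source="webhook", objective=objective, inputs=inputs)


def from_schedule(job_name: str, objective: str) -> Goal:
    """Build a Goal from a scheduled job definition."""
    return Goal(source="schedule", objective=objective, inputs={"job_name": job_name})
```

Rejecting a payload with no objective at the boundary means downstream steps never have to guess what the task is.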

In what ways does ZBrain Builder support planning and task decomposition?

Using the low-code Flow interface, you can embed LLM-powered planning steps alongside conditional branches and loops. ZBrain Builder logs each prompt and response for full traceability. Flows can verify prerequisites and branch based on real-time data—ensuring that complex objectives are translated into precise, executable sequences.

How are external systems and tools invoked within an agentic workflow?

ZBrain Builder provides a rich Connector Library, offering out-of-the-box integrations for CRM, ERP, databases, messaging platforms, cloud storage, and more. In any Flow step, you simply select the appropriate connector, supply parameters, and ZBrain Builder handles authentication, pagination, and error handling. This plug-and-play model eliminates the need for custom ETL or API wrapper development, allowing agents to interact with enterprise systems seamlessly.

What mechanisms ensure that agents maintain context and state?

ZBrain’s two-layer memory system combines Flow variables (transient, run-specific data) with a shared Knowledge Base (persistent, vector-indexed content). As an agent progresses, outputs and retrieved documents are stored, making them available for subsequent steps or related agents. This design preserves continuity, enables retrieval-augmented reasoning, and ensures that the state survives restarts or parallel executions.
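The split between transient and persistent memory can be sketched as follows. This is a simplified stand-in, not ZBrain's implementation: the `KnowledgeBase` class is hypothetical, it persists to a local JSON file, and it uses keyword matching where a real knowledge base would use a vector index.

```python
import json
import tempfile
from pathlib import Path
from typing import List


class KnowledgeBase:
    """Persistent store backed by a JSON file (stand-in for a vector index)."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.docs: List[str] = json.loads(path.read_text()) if path.exists() else []

    def add(self, text: str) -> None:
        self.docs.append(text)
        self.path.write_text(json.dumps(self.docs))

    def search(self, keyword: str) -> List[str]:
        # Keyword match only; a production system would rank by embedding similarity.
        return [d for d in self.docs if keyword.lower() in d.lower()]


kb_path = Path(tempfile.mkdtemp()) / "kb.json"

# Layer 1: Flow variables live only for the duration of one run.
flow_vars = {"ticket_id": "T-1"}

# Layer 2: the knowledge base is written to disk and outlives the run.
kb = KnowledgeBase(kb_path)
kb.add("Refund policy: 30 days")

# Simulate a restart: a fresh object reads the same persisted content.
restarted = KnowledgeBase(kb_path)
```

After the simulated restart, `flow_vars` would be gone in a real process while `restarted.search("refund")` still finds the stored document, which is the continuity property the two-layer design is meant to provide.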

How does ZBrain Builder handle errors and retries?

Each Flow step can be configured with automated reruns and a retry option. If a connector call fails because of a timeout or service error, the Flow can pause and then retry after a configurable delay. Every retry and execution path is logged, enabling rapid diagnosis and iterative improvement.
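The retry behavior described here follows a common pattern that can be sketched in a few lines. The `call_with_retry` helper, its parameter names, and the choice of which exceptions count as retryable are assumptions for illustration, not ZBrain Builder configuration options.

```python
import time
from typing import Callable, List, Optional, TypeVar

T = TypeVar("T")


def call_with_retry(
    step: Callable[[], T],
    max_attempts: int = 3,
    delay_seconds: float = 1.0,
    log: Optional[List[str]] = None,
) -> T:
    """Run a step, retrying on timeout or connection errors after a fixed delay."""
    log = log if log is not None else []
    for attempt in range(1, max_attempts + 1):
        try:
            result = step()
            log.append(f"attempt {attempt}: ok")
            return result
        except (TimeoutError, ConnectionError) as exc:
            log.append(f"attempt {attempt}: failed ({exc})")
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error to the caller
            time.sleep(delay_seconds)
    raise ValueError("max_attempts must be at least 1")
```

Passing a list as `log` lets the caller inspect every attempt afterwards. A fixed delay is the simplest choice; exponential backoff is a common alternative when the downstream service may be overloaded.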

What reporting and metrics are available to monitor agent performance?

ZBrain’s Agent Dashboard tracks key operational metrics:

- Processing Time per task

- Session Time per user interaction

- Satisfaction Score

- Tokens Used per session

- Cost based on token consumption

You can chart trends and drill into detailed Flow logs, ensuring comprehensive visibility into efficiency, user experience, and budget impact.
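As a rough illustration of how such metrics roll up, the sketch below aggregates per-session records and derives cost from token usage. The `SessionRecord` fields, the `summarize` helper, and the per-1,000-token price are hypothetical; actual pricing depends on the model and provider, and this is not how the Agent Dashboard is implemented.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class SessionRecord:
    processing_seconds: float
    tokens_used: int
    satisfaction: int  # assumed to be a 1-5 user rating


def token_cost(tokens: int, price_per_1k: float) -> float:
    """Cost of a token count at an assumed flat price per 1,000 tokens."""
    return tokens / 1000 * price_per_1k


def summarize(records: List[SessionRecord], price_per_1k: float) -> Dict[str, float]:
    """Aggregate a non-empty list of sessions into dashboard-style figures."""
    total_tokens = sum(r.tokens_used for r in records)
    return {
        "avg_processing_seconds": mean(r.processing_seconds for r in records),
        "avg_satisfaction": mean(r.satisfaction for r in records),
        "total_tokens": total_tokens,
        "total_cost": token_cost(total_tokens, price_per_1k),
    }
```

Tracking cost next to satisfaction makes it easier to see whether a prompt change that uses more tokens actually improves the user experience.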

How can I incorporate human oversight into fully automated workflows?

ZBrain Builder supports an explicit Approval step within any Flow. You can pause execution, send a notification via email or Slack, and require a human decision before proceeding. This human-in-the-loop capability is essential for high-risk operations, such as legal review or financial approvals, allowing you to balance autonomy with control.
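The approval gate can be pictured as a step that blocks until a reviewer decides. In this sketch the `run_with_approval` function, the `Decision` enum, and the `notify` and `request_decision` callbacks are hypothetical; in practice the notification would go out by email or Slack and the decision would arrive asynchronously.

```python
from enum import Enum
from typing import Callable


class Decision(Enum):
    APPROVED = "approved"
    REJECTED = "rejected"


def run_with_approval(
    action: Callable[[], str],
    request_decision: Callable[[], Decision],
    notify: Callable[[str], None],
    description: str,
) -> str:
    """Notify a reviewer, wait for a decision, and run the action only if approved."""
    notify(f"Approval needed: {description}")
    decision = request_decision()  # blocks until the human responds
    if decision is Decision.APPROVED:
        return action()
    return "skipped: rejected by reviewer"
```

The high-risk action is only reachable through the approved branch, so a rejection or a missing decision can never fall through to execution.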

What governance and security controls protect sensitive data?

ZBrain Builder enforces role-based access control, ensuring only authorized users can design, deploy, or run agents. All data in transit and at rest is encrypted, and connectors use secure credential storage. You can also embed policy checks in Flows, such as schema validation or content filtering, to prevent unauthorized access to or leakage of sensitive data.
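A policy check that combines schema validation with content filtering might look like the sketch below. The required fields, the SSN-style pattern, and the `check_output` helper are illustrative assumptions rather than ZBrain Builder features; a real deployment would draw its rules from the organization's own compliance requirements.

```python
import re
from typing import Any, Dict, List

# Hypothetical output schema: field name mapped to expected type.
REQUIRED_FIELDS = {"customer_id": str, "message": str}

# Example content filter: text shaped like a US Social Security number.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def check_output(payload: Dict[str, Any]) -> List[str]:
    """Return a list of policy violations; an empty list means the output may be sent."""
    violations = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in payload:
            violations.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            violations.append(f"wrong type for field: {name}")
    message = payload.get("message")
    if isinstance(message, str) and SSN_PATTERN.search(message):
        violations.append("message contains an SSN-like pattern")
    return violations
```

Returning every violation instead of stopping at the first gives reviewers a complete picture when an output is blocked.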

How do we get started with ZBrain for AI development?

To begin your AI journey with ZBrain:

- Contact us at hello@zbrain.ai

- Or fill out the inquiry form on zbrain.ai

Our dedicated team will work with you to evaluate your current AI development environment, identify key opportunities for AI integration, and design a customized pilot plan tailored to your organization’s goals.
