Stateful vs. stateless agents: Why stateful architecture is essential for agentic AI

Listen to the article

Artificial intelligence systems have evolved from simple, reactive responders to structured, goal-directed agents capable of executing complex, multi-step workflows. This progression—from stateless systems to stateful architectures and ultimately to agentic AI—marks a fundamental shift in how intelligent systems are designed and deployed. At the core of this transformation lies state: the ability to persist, manage, and reason over context across time.

Early AI agents, often described as simple reflex systems, operated without memory. Each input was processed in isolation, with no awareness of prior interactions or evolving objectives. This stateless design limited AI to immediate responses—it could react, but it could not sustain continuity, adapt over time, or coordinate multi-step execution. Such systems were computationally efficient, but structurally incapable of autonomy.

Modern AI architectures increasingly demand more. Context and memory are no longer optional enhancements; they are foundational to intelligent behavior. Stateful systems introduce continuity—tracking conversation history, preserving intermediate results, and maintaining user or task context. Yet memory alone does not create autonomy. The emergence of agentic AI builds upon stateful design by layering structured planning, tool invocation, execution loops, and evaluation mechanisms on top of persistent context. In this sense, the state is the substrate, but orchestration is what enables action.

Large language models (LLMs) such as GPT-5 exemplify this architectural evolution. On their own, these models are stateless systems: they generate responses based only on the input provided in a given prompt and have no inherent memory of prior interactions. However, when integrated into real-world applications, they operate within stateful orchestration frameworks. These surrounding systems manage conversation history, retrieve relevant external knowledge, coordinate structured tool calls, and control multi-step execution workflows. As a result, while the core model remains stateless, the overall application behaves as a context-aware, stateful system. Modern agentic systems are therefore not defined by model intelligence alone, but by how effectively they manage and govern state across planning–acting–observing cycles.

This article examines the progression from stateless to stateful to agentic systems, clarifying why state is necessary—but not sufficient—for autonomy. We explore the architectural foundations of state management, including working memory, persistent knowledge stores, orchestration graphs, and tool ecosystems. We then demonstrate how ZBrain Builder, an enterprise-grade agentic AI orchestration platform, operationalizes these principles, enabling organizations to design governed, scalable, stateful AI systems that execute complex workflows with continuity, transparency, and control.

The future of AI is not defined solely by larger models, but by smarter architectures. Understanding stateful systems as the bridge to agentic AI is essential to building the next generation of intelligent, enterprise-ready systems.

- Stateful vs. stateless agents: Beyond memory retention

- From stateful agents to agentic AI

- Why stateful agents are foundational to agentic AI

- The architecture of stateful agents: Context as execution infrastructure

- ZBrain Builder in action: Orchestrating stateful agentic systems

- What agentic stateful systems enable

- Challenges and future directions in agentic stateful systems

Stateful vs. stateless agents: Beyond memory retention

The distinction between stateless and stateful agents is often reduced to a simple question of memory: does the system retain past interactions? While this framing is technically accurate, it is incomplete—particularly in the context of agentic AI.

In modern agentic systems, the state is not merely conversational history. It is the structured execution context that enables planning, tool coordination, decision-making, and controlled autonomy. The real distinction between stateless and stateful agents is not just about remembering—it is about whether the system can maintain continuity of reasoning, action, and policy over time.

Understanding this difference is essential to understanding the evolution from reactive AI services to goal-directed agentic systems.

Stateless agents explained

Stateless agents are AI systems that process each request independently, without retaining information from prior interactions. Every input is treated as an isolated event, and the system generates outputs solely from the current prompt and its pre-trained knowledge.

Core characteristics of stateless agents

- No execution continuity:

A stateless agent does not retain conversational context, task progress, or prior outputs. Repeating the same input yields the same result because no historical state influences the response.

- Pipeline simplicity:

Stateless systems follow a single-pass execution model: input → compute → output. There is no planning stage, no intermediate tracking, and no evolving objective. This architectural simplicity makes them predictable and easy to scale.

- Operational efficiency:

Without memory stores or state synchronization, stateless agents incur minimal overhead. They require no context retrieval or session management, making them suitable for high-throughput, low-latency environments.

- Security by design:

Because stateless agents do not retain user data or execution traces, the surface area for data leakage is reduced. Privacy compliance and data governance are comparatively straightforward.

Typical use cases

Stateless architectures are well-suited for:

- Single-turn factual Q&A

- Deterministic APIs (calculators, converters)

- Image classification and spam detection

- Stateless search queries

- Isolated command execution

These systems excel where continuity, personalization, or multi-step reasoning are not required.

The architectural limitation

However, stateless systems cannot:

- Track goals across multiple steps

- Coordinate tool usage

- Evaluate prior decisions

- Adapt strategy mid-execution

- Maintain constraints across a workflow

They respond—they do not orchestrate.

In the era of agentic AI, this limitation becomes structurally significant.

Stateful agents explained

Stateful agents extend beyond single-turn responsiveness by maintaining structured information across agent interactions and execution steps. Traditionally, this was framed as conversational memory. In agentic AI, it is more accurately described as execution state management.

A stateful agent does not treat each query as isolated. It maintains continuity across tasks, tools, and sessions.

Core characteristics of stateful agents

- Execution continuity:

Stateful agents track the progression of a task. They maintain intermediate outputs, decision paths, and contextual constraints throughout multi-step workflows.

- Contextual reasoning:

The system understands follow-up instructions and evolving objectives because prior steps remain available within its execution context.

- Dynamic adaptation:

Stateful agents modify their strategy based on intermediate outcomes, tool results, or feedback. They can iteratively refine their actions based on intermediate results, rather than producing a final output in a single forward pass.

- Persistent objectives:

Rather than generating a single response, the agent works toward completing a defined goal—possibly across multiple reasoning–action cycles.

- Personalization and history awareness:

Beyond execution logic, stateful agents can include user preferences, prior decisions, or domain context, enabling tailored and coherent long-term interactions.

The trade-off

Stateful architectures introduce complexity:

- Storage and retrieval infrastructure

- Concurrency management

- Version control and consistency handling

- Governance and access control

- Latency overhead from context management

State enables autonomy—but autonomy demands disciplined engineering.

Beyond memory: State as execution context

In agentic systems, the state must be understood as cognitive scaffolding, not merely memory retention.

State encompasses multiple structured dimensions:

Goal state

- Current objective

- Sub-goals

- Completion criteria

Planning state

- Decision paths

- Task decomposition results

- Pending actions

Execution state

- Tool invocation history

- API responses

- Database query results

- Retrieved information

- Intermediate reasoning outputs

Policy state

- Retry limits

- Role-based permissions

- Security policies

Performance state

- Confidence scores

- Evaluation metrics

- Failure counters

- Escalation triggers

This broader conception of the state transforms AI systems from reactive responders into orchestrated problem-solvers. It enables reasoning–acting loops in which the agent can:

- Interpret a goal

- Decide the next best action

- Invoke a tool

- Evaluate the result

- Adjust strategy

- Continue until completion

Without a state, such loops collapse into disconnected responses.

State, therefore, is not a convenience—it is the structural requirement for autonomy.

State across tasks, tools, and sessions

In agentic AI, the state operates across three critical dimensions:

1. Across tasks

Complex enterprise workflows involve multiple stages:

- Information gathering

- Validation

- Analysis

- Decision generation

- Reporting

A stateful agent tracks task progression, ensuring continuity from initiation to completion. Stateless systems cannot guarantee workflow integrity.

2. Across tools

Agentic systems interact with heterogeneous tools:

- Vector search systems

- Structured databases

- Knowledge graphs

- External APIs

- Code execution environments

- Google search tools

State allows the agent to:

- Store tool outputs

- Compare intermediate results

- Resolve conflicts

- Chain tool outputs into subsequent actions

Without a state, tool outputs cannot be meaningfully composed.

3. Across time

Enterprise environments evolve:

- Policies are updated

- Data changes

- Regulations shift

Stateful agents can ensure continuity across sessions or workflow interruptions.

This temporal dimension elevates the state from short-term memory to lifecycle management.

The strategic distinction

The choice between stateless and stateful architectures should no longer be framed as:

“Do we need memory?”

Instead, it should be framed as:

“Do we require autonomous, multi-step, policy-governed execution?”

- If the objective is single-turn response generation, a stateless architecture is sufficient.

- If the objective is goal-directed orchestration across multiple tools, tasks, and extended workflows, statefulness becomes essential.

Stateful agents provide the structural foundation for agentic AI systems. They enable planning, evaluation, iteration, and governance. Stateless systems remain valuable for narrow, high-efficiency use cases—but they cannot support the cognitive architecture required for modern autonomous AI systems.

In this light, the state is not merely a retained context. It is the operational backbone of an intelligent agency.

Streamline your operational workflows with ZBrain AI agents designed to address enterprise challenges.

From stateful agents to agentic AI

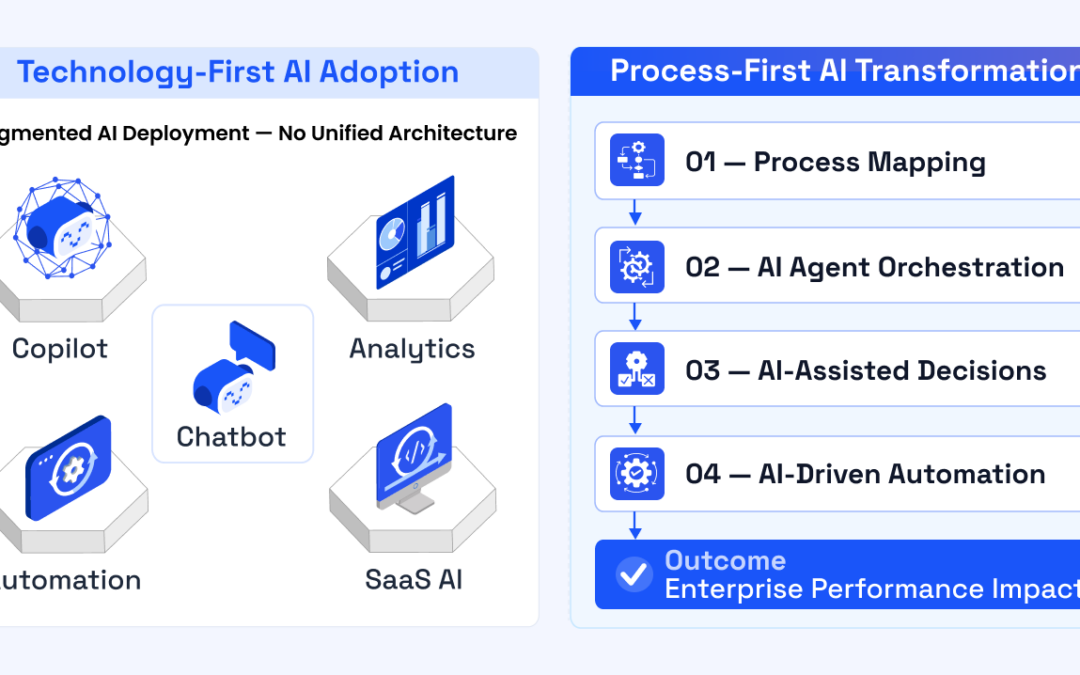

The evolution of intelligent systems can be understood as a progression across three architectural stages: stateless, stateful, and agentic.

Stateless systems respond to isolated prompts. Stateful systems retain context across interactions. Agentic systems, however, move beyond memory and into goal-directed execution.

Understanding this distinction is critical.

Stateless → Stateful → Agentic

Stateless systems process each request independently. They are efficient, predictable, and simple—but inherently limited. There is no continuity, no accumulation of knowledge, and no persistence of objectives.

Stateful systems introduce memory. They retain conversation history, user preferences, and contextual information across interactions. This enables personalization, multi-turn dialogue, and session continuity.

However, the state alone does not constitute autonomy. A stateful chatbot that remembers your name is not an agent. It is context-aware—but still reactive.

Agentic systems represent the next architectural leap. They maintain not just conversational context, but execution state. Essentially, agentic AI is the framework; AI agents are the building blocks within the framework. Agentic AI is the broader concept of solving problems with limited supervision, whereas an AI agent is a specific component within that system designed to handle tasks and processes with a degree of autonomy. This model is changing the way humans interact with AI. The agentic AI system understands the user’s goal or vision and uses the information provided to solve the problem.

In an agentic system, AI agents can:

- Track objectives across multiple steps

- Decompose goals into sub-tasks

- Invoke external tools

- Evaluate intermediate results

- Adjust strategies dynamically

- Resume execution across interruptions

In other words, agentic systems operate through structured decision-making loops rather than isolated prompt completions.

Why state alone is not autonomy

A stateful system can remember past inputs, but without structured planning and tool coordination, it cannot reliably complete complex objectives. True autonomy requires:

- Persistent goal tracking

- Explicit execution control

- Iterative reasoning

- Validation mechanisms

- Tool-based action

Without these capabilities, a system remains reactive—though context-aware.

Agentic AI emerges when a state is combined with structured orchestration.

Completing the transformation: Planning, tools, and evaluation

The transition from stateful to agentic systems is completed through three additional architectural layers:

- Planning mechanisms – enabling decomposition of high-level objectives into structured sub-tasks.

- Tool ecosystems – allowing deterministic interaction with external systems, databases, and APIs.

- Evaluation and control loops – ensuring intermediate outputs are validated before proceeding.

Together, these components transform AI from a conversational responder into a goal-oriented executor.

Agentic systems do not simply answer—they pursue outcomes.

Why stateful agents are foundational to agentic AI

As AI systems evolve from reactive responders to autonomous problem-solvers, the limitations of stateless architectures become increasingly apparent. Advanced AI applications rarely consist of single-turn question answering. Instead, they involve multi-step reasoning, tool invocation, intermediate validation, and iterative refinement. These capabilities require more than isolated responses—they require execution continuity.

Stateful agents provide the structural foundation for agentic AI.

At the core of agentic systems is a planning–acting–observing loop. Rather than generating a single output, the system:

- Interprets an objective.

- Plans the next action.

- Executes that action (e.g., calls a tool, retrieves data, performs analysis).

- Observes the result.

- Updates its internal state.

- Determines whether to continue, revise, or conclude.

Without a persistent state, this loop collapses into disconnected steps. The system would be unable to track intermediate results, maintain sub-goals, or evaluate prior decisions. Stateful design enables the agent to move beyond prompt-response behavior into structured, iterative reasoning.

Planning–acting–observing loops

Agentic AI systems operate through cyclical execution rather than linear pipelines. In troubleshooting, research, financial analysis, compliance validation, or enterprise workflow automation, the system must refine its understanding as new information becomes available.

For example, a troubleshooting agent may:

- Ask clarifying questions,

- Analyze system logs,

- Execute diagnostic checks,

- Evaluate outcomes,

- Adjust its hypothesis,

- Continue until resolution.

Each of these steps depends on maintaining execution state—what has been tried, what results were observed, what hypotheses remain plausible. Stateless systems cannot preserve this evolving reasoning chain.

State, therefore, becomes the substrate for iterative cognition.

ReAct-style reasoning and multi-hop workflows

Modern agentic architectures frequently adopt reasoning–action paradigms in which the model alternates between internal reasoning and external action. In such systems, the agent:

- Reasons about what to do next,

- Invokes a tool,

- Integrates the tool output into its reasoning,

- Proceeds to the next step.

This pattern inherently requires maintaining:

- Tool invocation history,

- Intermediate reasoning outputs,

- Decision paths,

- Confidence estimates.

Similarly, multi-hop workflows—where solving a problem requires sequential data gathering across multiple sources to reach an answer/conclusion—cannot function without state continuity. An enterprise compliance agent, for instance, may retrieve policy documents, cross-reference regulatory databases, extract structured data, and synthesize a decision report. Each hop builds upon prior outputs.

State is what binds these hops into a coherent trajectory.

Execution continuity and cross-tool context sharing

Agentic AI systems rarely operate in isolation from tools. They interact with:

- Retrieval systems,

- Structured databases,

- APIs,

- Code execution environments,

- Knowledge graphs.

To coordinate across tools, the agent must preserve context between calls. Tool outputs must be stored, evaluated, and passed forward. Errors must be tracked. Retries must be controlled. Budgets must be monitored.

This cross-tool orchestration depends on structured state management. Stateless architectures cannot reliably compose tool outputs into a unified reasoning process.

Execution continuity is especially critical in enterprise deployments where workflows span minutes, hours, or even days. Long-running tasks—such as document review pipelines, financial audits, or operational monitoring—require checkpointing, resumability, and consistent state synchronization.

Without a state, autonomy is impossible.

From reactive answering to autonomous problem solving

Traditional AI systems respond. Agentic AI systems pursue objectives.

The transition from reaction to autonomy hinges on the state. It enables:

- Persistent goal tracking

- Dynamic branching logic

- Intermediate validation

- Strategy revision

- Context-aware decision making

- Cross-tool coordination

- Constraint and policy enforcement

Stateful agents can adapt based on feedback, refine their reasoning based on tool outputs, and carry forward knowledge across sessions. This capability extends beyond personalization—it enables structured task completion.

Moreover, the state allows AI systems to learn operationally during deployment. While the underlying model weights may remain static, the agent’s behavior evolves through the accumulation of execution context, stored preferences, observed outcomes, and policy adjustments. This continuous adaptation represents a significant step toward more resilient and capable AI systems.

The structural imperative

In advanced AI applications—enterprise automation, adaptive retrieval systems, financial modeling, regulatory compliance validation, research synthesis, or intelligent operations management—stateless architectures are fundamentally insufficient. They lack the continuity required for planning, evaluation, and iteration.

Stateful agents, by contrast, provide:

- Coherent multi-step execution

- Tool coordination

- Iterative reasoning

- Persistent objectives

- Controlled autonomy

State is not merely a convenience for conversational continuity. It is the structural requirement for agentic behavior.

Streamline your operational workflows with ZBrain AI agents designed to address enterprise challenges.

The architecture of stateful agents: Context as execution infrastructure

In traditional AI systems, maintaining context was largely synonymous with storing conversation history. In agentic AI systems, context extends far beyond chat transcripts. It encompasses execution state, planning artifacts, tool outputs, policy constraints, and persistent knowledge.

Designing a truly agentic stateful system, therefore, requires architectural consideration across three foundational layers:

- Memory layers (short-term and long-term)

- Orchestration and execution state management

- Policy and control mechanisms

Together, these layers transform the state from passive memory into an active execution infrastructure.

Memory layers in agentic systems

Agentic systems operate across multiple tiers of memory, each serving a distinct functional role. Understanding these layers is essential for building systems capable of planning, iteration, and cross-tool coordination.

Working memory (Ephemeral context)

Working memory corresponds to the model’s active context window—the tokens currently available to the LLM during reasoning. This layer supports immediate decision-making within a reasoning–action loop.

However, working memory is finite. Context windows impose hard token limits, and naïvely appending conversation history or tool outputs eventually leads to context erosion. Without intelligent management, critical early decisions are deferred, disrupting execution continuity.

Effective working memory management in agentic systems includes:

- Summarization of prior reasoning steps

- Sliding window context strategies

- Structured prompt compression

The objective is not to preserve every detail, but to preserve execution-relevant state. Working memory must contain:

- The current objective

- Active sub-goals

- Most recent tool outputs

- Decision rationale

- Immediate constraints

In agentic architectures, working memory acts as the system’s cognitive scratchpad.

Episodic memory (Session state)

Episodic memory tracks the broader session context beyond the immediate reasoning step. It includes:

- Conversation history

- Task progress markers

- Intermediate results

- Tool invocation logs

- Retry counters

This layer enables continuity across multi-step workflows. For example, if an agent is conducting compliance analysis across several documents, episodic memory tracks which documents have been processed, what inconsistencies were identified, and which validations remain incomplete.

Episodic memory ensures that execution is not reset between steps. It allows the agent to pause, resume, branch, and iterate without losing situational awareness.

Long-term memory (Persistent knowledge)

Long-term memory resides outside the context window and persists across sessions. It includes:

- Vector databases for semantic recall

- Structured databases (SQL/NoSQL)

- Knowledge graphs

- User profiles

- Historical task records

Vector databases enable associative recall, allowing agents to retrieve semantically relevant information without explicit keyword matching. Knowledge graphs provide structured reasoning capabilities, capturing relationships and hierarchies. Hybrid approaches combine both, yielding precision and contextual depth.

Long-term memory transforms the agent from a transient responder into a continuously learning system capable of sustained domain intelligence.

Tool state (External system context)

In agentic systems, tools themselves carry state. When an agent queries a database, calls an API, executes code, or retrieves documents, the outputs of those actions become part of the system’s execution context.

Tool state includes:

- API response payloads

- Database query results

- Retrieved document sets

- Execution logs

- Error messages

Without preserving tool state, cross-tool reasoning becomes impossible. Agentic systems must treat tool outputs as first-class state objects that can be evaluated, revised, or reused.

Orchestration graphs and execution state

Memory layers alone do not create autonomy. Agentic AI requires structured orchestration mechanisms to manage execution state across planning–acting–observing loops.

Modern agentic architectures increasingly adopt graph-based execution models rather than linear pipelines.

Directed Acyclic Graphs (DAGs)

In graph-based systems, tasks are decomposed into nodes representing actions or decisions. Some of such nodes are:

- Planning node

- Retrieval node

- Tool invocation node

- Evaluation node

- Response generation node

Edges define execution flow and conditional branching. This allows agents to:

- Loop until conditions are met

- Branch based on tool outputs

- Recover from failure

- Revisit prior reasoning

State flows through the graph as a shared object, accessible and mutable at each node.

State machines and execution control

Agentic systems can also be modeled as state machines. Each state represents a phase of execution (e.g., “planning,” “retrieving,” “validating,” “responding”). Transitions occur based on observed outcomes or policy constraints.

This explicit modeling of execution state enables:

- Deterministic debugging

- Controlled iteration

- Failure recovery

- Human-in-the-loop checkpoints

Checkpointing mechanisms further enhance reliability by allowing workflows to resume from saved execution states rather than restarting entirely.

In production environments, this is critical for long-running or high-stakes processes.

Node-based execution architecture

Each node in an orchestration graph performs a defined function:

- Planning nodes define sub-goals.

- Tool nodes execute external actions.

- Evaluation nodes assess intermediate outputs.

- Memory nodes retrieve or update persistent state.

By separating reasoning from execution and evaluation, the architecture supports modular, inspectable autonomy.

This structured approach differs sharply from monolithic, prompt-based systems, in which logic is embedded in a single interaction. Agentic orchestration separates control flow from generation, managing execution state explicitly and making the system observable, auditable, and governable.

Policy and control layer

Autonomy without control introduces risk. Agentic systems, therefore, require a policy layer that governs how the state evolves and how decisions are made.

Retry limits and failure handling

Stateful architectures must monitor:

- Retry counters

- Error frequency

- Confidence thresholds

If an agent repeatedly fails to retrieve valid data, the policy layer can terminate the agent or escalate to human review.

Governance rules

Enterprise deployment demands:

- Role-based data access

- Source trust validation

- Audit logs of tool invocations

- Memory retention policies

Policy states enforce constraints on data access, retention policies, and decision logging, ensuring auditable and compliant system behavior.

Access permissions and security context

Agentic systems frequently operate across sensitive systems. State must therefore include security context:

- Authentication tokens

- Role identifiers

- Permission scopes

This ensures that the orchestration logic adheres to enterprise governance frameworks.

From memory management to agentic architecture

Traditional discussions of stateful agents focus primarily on conversation persistence. Agentic AI expands this dramatically. State becomes:

- A cognitive substrate for reasoning

- A coordination mechanism for tools

- A tracking system for goals and constraints

- A governance layer for compliance and control

Graph-based orchestration engines and enterprise platforms operationalize these principles. Whether implemented programmatically through state graphs or visually through orchestration builders, the core objective remains the same: enable multi-step autonomy with structured state continuity.

In this architecture, context is not merely remembered—it is actively curated, propagated, evaluated, and governed across tasks, tools, and time.

Therefore, the state is not an auxiliary feature. It is the execution backbone of agentic AI systems.

Streamline your operational workflows with ZBrain AI agents designed to address enterprise challenges.

ZBrain Builder in action: Orchestrating stateful agentic systems

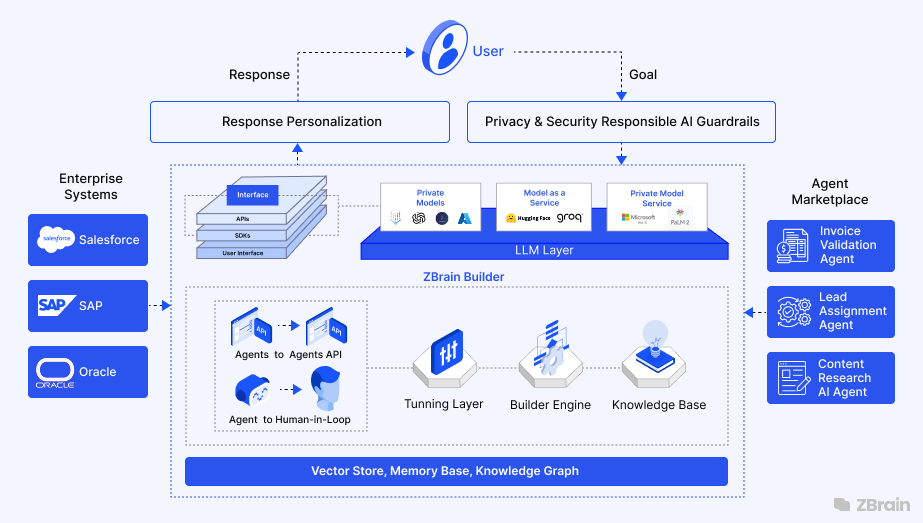

To make the discussion concrete, it is useful to examine how agentic, stateful systems are designed and deployed in practice. ZBrain Builder is ZBrain’s agentic AI orchestration platform that operationalizes structured autonomy. It integrates execution graphs, shared state management, tool ecosystems, policy controls, and observability into a unified framework that enables goal-directed AI workflows at enterprise scale.

Rather than treating the state as a passive conversation history, ZBrain Builder treats it as an execution backbone. Every workflow—referred to as a Flow—functions as a structured orchestration graph in which reasoning nodes, tool invocation nodes, conditional branches, and evaluation steps share a persistent state object. This architecture enables agents to plan, act, observe outcomes, update context, and iterate until objectives are met.

Through this orchestration model, ZBrain Builder enables capabilities that extend well beyond conversational continuity. It supports:

Personalized agents that leverage persistent user context and domain knowledge

- Long-running workflows that can pause, checkpoint, and resume without losing execution state

- Integrated knowledge systems that inject structured memory into reasoning loops

- Multi-agent crew configurations where specialized agents collaborate over a shared state

- Policy-enforced autonomy with configurable memory scopes, access controls, and guardrails

- Full execution traceability through observability dashboards and thought tracing

In the sections that follow, we examine how these capabilities are realized, illustrating how ZBrain Builder functions as an agent-orchestration platform that transforms stateful memory into controlled, auditable, and scalable agentic autonomy.

Personalized, context-aware assistants: Consider an AI sales assistant that interacts with customers on your website.

- With ZBrain Builder, you could give this agent access to a knowledge base (containing past and build a Flow where the first step looks up the user’s info when a trigger happens.

- This agent, being stateful, will greet the user by name, recall their last purchase (“How are you enjoying the laptop you bought in June?”), and avoid recommending products they already have. All of this is possible because the app retains memory of the user’s history.

- The knowledge base serves as long-term memory, and ZBrain’s Flow ensures that once the data is retrieved, it’s passed along to the answer-generating step. This level of personalization and continuity drives better engagement.

- In practice, you would configure the app to connect to the relevant knowledge base(s) in the bot settings, enabling “Follow-up Conversation” mode for session memory, and perhaps use prompt templates that instruct the agent to utilize the stored context (e.g., system prompt: “You are a sales assistant.

The user’s info: {{profile_data}}. Always use this to personalize responses.”) ZBrain takes care of injecting that profile data into the agent’s context on each turn. The result is an AI agent that feels aware and consistent, boosting user trust and satisfaction.

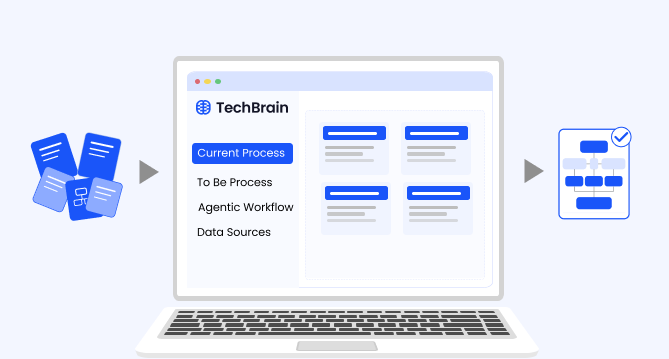

Task-resuming workflows and execution checkpoints: Flows as Orchestration graphs: In ZBrain Builder, a Flow is not merely a sequence of connected components—it represents a directed execution graph. Each Flow consists of nodes and edges, where:

- Nodes represent reasoning or action units (e.g., classification, retrieval, tool invocation, approval, generation).

- Edges represent state transitions, determining how execution moves from one step to the next.

- A shared state object flows across nodes, carrying task context, intermediate outputs, constraints, and decisions throughout execution.

This graph-based architecture is foundational to enabling agentic behavior.

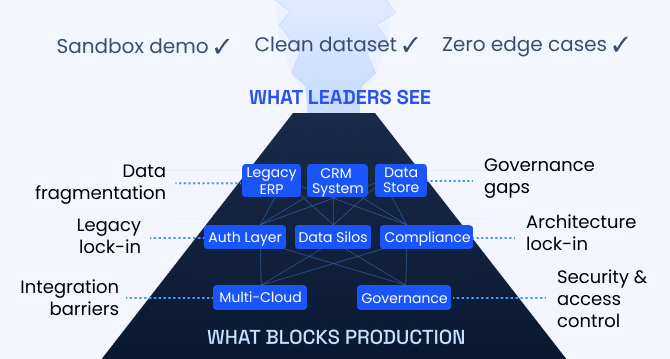

Consider a complex internal helpdesk automation workflow involving multiple stages: gathering user input, retrieving knowledge base content, applying decision logic, possibly escalating to a human reviewer, and finally generating a response. In a stateless architecture, any interruption—such as a delayed user response or a required human approval—would require restarting the process or manually reconstructing context.

In contrast, ZBrain’s orchestration graph maintains a persistent execution state. Each Flow run instantiates a shared state object that persists until completion. If execution reaches an “Approval” node or waits for external input, the current state is checkpointed. The graph effectively pauses, retaining all intermediate data, including retrieved documents, user inputs, decisions made, and tool outputs.

When execution resumes, the state object is restored and forwarded to the next node. The agent continues precisely where it left off. No context reconstruction is required. The orchestration engine ensures continuity across asynchronous events.

This design dramatically increases reliability for long-running and enterprise-grade workflows.

For example, in a content generation and review Flow:

- A planning node outlines the content structure.

- A generation node produces a draft.

- An approval node pauses execution for human review.

- After edits are submitted, the graph transitions to a refinement node that incorporates the feedback.

Because the shared state object persists across nodes, the refinement step has access not only to the original draft but also to the human reviewer’s edits and comments. The agent does not start a new session; it resumes execution within the same task state. Operators can even retry execution from a specific node if an error occurs, leveraging the checkpointed state rather than restarting the entire workflow.

This orchestration model ensures robustness. Agents can pause, branch, loop, and resume without losing continuity. For enterprise leaders, this means AI systems can integrate seamlessly with asynchronous business processes and human oversight. For technical teams, it eliminates the complexity of manually restoring the state after interruptions.

Memory injection and retrieval as structured execution nodes: Memory injection within ZBrain Flows is also governed by the orchestration graph model.

Each retrieval or knowledge-access step serves as a dedicated node in the execution graph. The output of that node is written to the shared state object and becomes available to downstream nodes.

Consider an agent designed to answer employee HR queries:

- A classification node interprets the user’s intent.

- A retrieval node queries an indexed HR knowledge base.

- The retrieved documents are stored in the shared state.

- A reasoning node (LLM block) consumes both the user query and the retrieved documents to generate a grounded response.

Because the shared state object flows across nodes, the generation step has structured access to prior outputs without manual context stitching. Variables such as {{docs}} are dynamically populated from the state object, ensuring just-in-time memory injection into the reasoning phase.

This design elevates memory from passive storage to an active component of the execution graph. Retrieval is no longer an isolated API call—it is a stateful transition in a structured reasoning pipeline.

Moreover, because each node reads from and writes to the same shared state, the system supports:

- Cross-node reasoning

- Conditional branching based on retrieved data

- Iterative refinement (e.g., re-query if confidence is low)

- Policy-driven execution constraints

The result is not simply an agent that remembers, but an agent that reasons about what to retrieve, when to retrieve it, and how to incorporate it into ongoing task execution.

By explicitly modeling Flows as directed execution graphs with persistent shared state, ZBrain Builder operationalizes agentic orchestration. It provides the structural foundation required for multi-step reasoning, tool integration, human-in-the-loop collaboration, and controlled autonomy at enterprise scale.

Enabling stateful agents with ZBrain’s Agent Crew system: ZBrain Builder’s Agent Crew framework is designed to operationalize agentic autonomy through structured orchestration, extending far beyond simple context preservation. It provides precise control over how execution state, memory, and policy constraints persist as tasks move across agents, sessions, tools, and time.

At its core, Agent Crew transforms stateful agents into governed, multi-agent systems capable of coordinated reasoning and controlled autonomy.

- Memory scope modes: Agentic control over context persistence

When configuring an Agent Crew, ZBrain Builder allows architects to define a memory scope for each agent. This setting does more than control memory retention; it establishes the informational boundaries within which the agent operates, directly influencing its autonomy and coordination with other agents.

This determines how much contextual state is retained and shared, and directly defines the agent’s degree of “statefulness”:

|

Memory Mode |

Behavior |

|

No Memory |

Treats every request as a clean slate. No data or history is retained. Best for purely stateless utility tasks (e.g., a calculator agent). |

|

Crew Memory |

Retains context only within the same agent’s sessions inside the crew. Enables continuity for multi-step tasks without leaking context to unrelated agents. |

|

Tenant Memory |

Shares context across all agents and sessions within the tenant. Creates a fully stateful environment where agents can access shared long-term memory for context-driven personalization and collaboration. |

This design enables explicit architectural decisions about isolation versus continuity. For example:

- A compliance-checking agent can be sandboxed with No Memory, ensuring strict data isolation.

- A customer support crew can operate under Tenant Memory, allowing personalized, cross-session continuity.

These modes function as agentic policy controls, governing how the state propagates across execution graphs. Memory scope is therefore not just a configuration toggle—it is a governance boundary.

- Thought tracing that makes agent state transparent: Statefulness is not just about storing memory — it’s also about making that state observable and explainable. ZBrain Builder includes a Thought Tracing feature that, when enabled, captures and displays each agent’s reasoning steps, decision path, and intermediate states on the dashboard.

This makes the inner workings of a stateful agent fully transparent. Operators can:

- Trace how an agent interpreted prior inputs and decisions

- Inspect state changes between steps

- Debug and fine-tune prompts or logic based on the agent’s thought sequence

For complex multi-agent crews, this traceability is essential for ensuring predictability, auditability, and trust in stateful behaviors.

Why this matters

By combining configurable memory scopes with thought tracing, ZBrain’s Agent Crew framework lets enterprises build true stateful agent ecosystems:

- Agents retain the memory of past interactions

- Context flows persist between steps, sessions, and even agents

- Operators can observe and govern stateful behavior in real time

This capability enables ZBrain Builder to power long-running, context-rich workflows—from end-to-end document processing pipelines to personalized virtual assistants that adapt over time.

Human-in-the-loop and collaboration: Stateful agents are not just about autonomous operation; they also enable better human-AI collaboration. ZBrain Builder supports this in multiple ways.

- One is through feedback loops – for example, a human reviewer can give a thumbs down with a feedback box to add details to an agent’s answer, and ZBrain Builder can capture that feedback to refine future outputs.

- Another is by explicit hand-offs: as described, a Flow can be designed to route certain decisions to humans (using, say, an “Approval” component or sending a notification) and then continue.

Because the agent state persists, the human’s input can be inserted, and the LLM picks up context immediately. This is crucial in enterprise settings where full automation is risky – you want the AI solution to do the heavy lifting but allow human validation for sensitive steps.

- ZBrain’s Flows make such patterns straightforward. You could have an “Agent” do a task, then an “Approval” step for a person to verify, then another “Agent” to finalize. All share the state, which might contain the work-in-progress and the human’s inputs. The system essentially provides a memory trace that both AI and humans can see. In fact, ZBrain’s monitoring module lets you inspect each step’s input and output, which brings us to traceability.

Agent crew coordination with shared memory: When multiple agents form a team (Agent Crew), ZBrain Builder introduces a special orchestration: typically, a supervisor agent manages sub-agents, as mentioned earlier. For example, if you have a complex task like “Analyze quarterly sales and prepare a report”, you might have one agent that collects data, another that analyzes it, another that generates a draft report, and so on – plus a supervisor agent coordinating. In ZBrain’s architecture, the supervisor delegates subtasks to subordinate agents and consolidates their results. The shared state (or memory) is what enables this chaining.

The key is that all agents operate on a common state object – effectively a blackboard that each agent reads from and writes to. ZBrain Builder ensures each agent’s context includes the state updates so far. The advantage is similar to a racing team working quickly and efficiently: each specialist knows exactly what the others have done and what remains, without extra prompting. This approach greatly enhances multi-agent collaboration.

The ZBrain Builder platform also provides an agent directory with pre-built agents (for various tasks) that are designed to plug into workflows. These pre-built agents follow the standard of using shared memory and APIs to communicate, so that you can assemble them into a custom crew quickly. If, for instance, you deploy a “Researcher Agent” and a “Writer Agent” from the library, the researcher could fetch info (update state with findings), and the writer could use those findings to compose text. The supervisor agent pattern in ZBrain Builder’s Agent Crew can be configured via the low-code interface by simply nesting or connecting agents and setting conditions. The end result is an orchestrated team of agents functioning as a unit, with ZBrain Builder handling the inter-agent messaging and memory synchronization in the background. For complex enterprise workflows, this yields both higher success (as each agent is focused and not overloaded) and resilience (agents can be added or retried as needed, state persistence, to scale out the work).

Visual indicators and traceability of context: One of the challenges of stateful systems is debugging and transparency – how do you know what the AI solution remembered or what it’s doing with that memory? ZBrain Builder addresses this with robust monitoring tools. The platform includes an Agent dashboard where you can see, in real time, each agent’s inputs, outputs, and even the chain of thought if enabled. For example, during a flow run, you can inspect the sequence of actions: which knowledge base entries were retrieved, what the intermediate prompt looked like, what response was given, etc. You can also monitor token usage and costs per step, which is important since stateful interactions tend to use more tokens. This traceability is invaluable for verifying that the agent is using context correctly. If something goes wrong (say the agent answered with outdated info), you can look at the log and see whether it pulled the wrong document from memory or if the memory was not updated.

There are also metrics, such as session lengths and satisfaction scores, in the Performance dashboard.

All this observability means that despite the added complexity of state, you have the tools to maintain control and understanding of your agents. For an executive, this translates to confidence that the AI application isn’t a black box – you have audit logs of what information it used. For AI engineers, it greatly eases troubleshooting and iterative improvement (you might discover the summary is losing a key detail, and then tweak the summarization prompt or increase the context window size, for example). In summary, ZBrain Builder doesn’t just enable stateful agents; it makes their inner workings more transparent and manageable, which is crucial for enterprise adoption.

Through these examples, we’ve seen that stateful agents unlock capabilities far beyond stateless ones: personalized assistance, multi-turn reasoning, the ability to resume and adapt, and collaborative multi-agent workflows. ZBrain Builder serves as a practical platform to realize these capabilities with relatively little effort. It abstracts much of the complexity (like hooking up memory stores or writing orchestration code) into a configurable system.

Tools as core execution primitives

In Agent Crew workflows, tools are not treated as peripheral plugins. They are modeled as native execution nodes within the orchestration graph.

This distinction is critical.

ZBrain Builder supports:

- Structured tool calling

- API integrations

- Cross-system coordination

- Deterministic data retrieval alongside generative reasoning

Each tool invocation becomes a node in the execution graph. The output of that node is written into the shared state object, making it accessible to subsequent reasoning steps.

This enables clear separation between:

- Deterministic operations (e.g., querying a financial database, fetching CRM records, calling an external API)

- Generative reasoning (e.g., synthesizing insights, drafting reports, explaining results)

By structuring tool use in this way, ZBrain Builder enforces architectural clarity. The agent reasons about when to call a tool, executes it, observes the result, updates the state, and proceeds. This planning–acting–observing loop is foundational to agentic AI.

Cross-system coordination becomes natural within this framework. An agent can:

- Query an ERP system

- Retrieve relevant policy documentation

- Perform validation

- Generate a summary

Each action updates the shared state, enabling multi-step reasoning without brittle context stitching.

ZBrain Builder enables enterprises to deploy not just stateful agents, but governed agentic systems.

These systems:

- Retain memory across tasks and sessions

- Coordinate across tools and data sources

- Enforce enterprise policies

- Support human oversight

- Provide full execution transparency

Statefulness becomes the substrate for controlled autonomy. And orchestration becomes the mechanism through which that autonomy operates reliably at scale.

Streamline your operational workflows with ZBrain AI agents designed to address enterprise challenges.

What agentic stateful systems enable

Agentic stateful systems unlock capabilities that are fundamentally impossible in stateless environments.

Multi-step enterprise workflow orchestration

Agentic stateful systems can execute long-running, multi-stage workflows across systems while maintaining continuity of objectives, intermediate results, and constraints.

Example:

An enterprise procurement agent gathers vendor quotes, validates compliance requirements, checks budget thresholds, routes for approval, and generates final documentation—tracking progress and decisions throughout the lifecycle.

Benefit:

Eliminates repetitive human coordination, reduces operational friction, and ensures consistent policy enforcement across extended workflows.

This capability transforms AI from an assistant into a workflow orchestrator.

Compliance validation pipelines

Stateful agents enable structured, auditable compliance verification across multiple documents, data sources, and regulatory frameworks.

Example:

A regulatory compliance agent retrieves updated policy guidelines, cross-references internal documentation, flags discrepancies, logs violations, and prepares structured remediation reports—maintaining traceable execution state across each validation step.

Benefit:

Supports governance, risk mitigation, and audit readiness in regulated industries such as finance, healthcare, and manufacturing.

Here, the state enables not just recall, but deterministic validation across time and evolving data.

Automated research and knowledge synthesis agents

Agentic systems can perform iterative research workflows involving retrieval, analysis, refinement, and synthesis.

Example:

A research agent decomposes a complex question, retrieves information from multiple sources, evaluates relevance, reformulates queries when needed, and produces a structured synthesis—tracking sources and intermediate findings along the way.

Benefit:

Reduces time-to-insight for analysts and decision-makers while ensuring transparency in how conclusions were derived.

This extends beyond one-pass Retrieval-Augmented Generation. It represents adaptive, multi-hop knowledge orchestration.

Financial analysis and forecasting workflows

Stateful agents enable structured financial reasoning across datasets and time horizons.

Example:

A financial operations agent pulls revenue data, compares quarterly performance, adjusts forecasts based on macroeconomic inputs, validates results against budget policies, and produces an executive summary—all within a single execution workflow.

Benefit:

Improves analytical consistency, reduces manual reconciliation, and supports faster executive decision-making.

Without state continuity, such multi-source reasoning would fragment into isolated responses.

Cross-system data reconciliation

Modern enterprises operate across heterogeneous systems—ERP, CRM, HRMS, and analytics platforms. Agentic systems can coordinate across them.

Example:

A reconciliation agent compares inventory counts from warehouse systems with ERP records, flags mismatches, queries shipment logs, and updates records after verification—maintaining a consistent execution state across each tool call.

Benefit:

Enhances operational accuracy and reduces reconciliation overhead.

State becomes the shared coordination substrate across systems.

Adaptive retrieval and iterative RAG

Agentic stateful systems extend traditional RAG into adaptive, multi-step retrieval processes.

Example:

An AI knowledge agent evaluates whether initial retrieval results sufficiently answer a query. If not, it reformulates the search, switches sources, and iterates until confidence thresholds are met—tracking evidence quality across cycles.

Benefit:

Improves factual accuracy, reduces hallucination risk, and ensures responses are grounded in validated evidence.

This is retrieval as a controlled reasoning loop—not a one-time lookup.

Autonomous operational systems

Stateful agents can monitor and react to evolving environments while preserving situational awareness.

Example:

A logistics optimization agent tracks warehouse restocking events, delivery delays, route constraints, and historical congestion patterns to dynamically adjust routing decisions.

Benefit:

Improves efficiency, reduces risk, and enables real-time operational adaptation.

Autonomy here depends on remembering environmental changes and prior decisions.

Human–agent collaborative execution

State persistence enables structured human oversight without breaking workflow continuity.

Example:

An HR agent drafts candidate outreach communications, routes them for approval, incorporates human edits, logs final decisions, and schedules follow-ups—preserving full execution context throughout.

Benefit:

Balances automation with governance, allowing AI systems to operate safely in high-stakes environments.

State enables collaborative autonomy rather than isolated automation.

From memory to mission execution

The shift from stateless to stateful—and from stateful to agentic—represents a structural transformation.

In essence, agentic stateful systems unlock continuity, coordination, and controlled autonomy. They enable building AI systems that do not merely respond—but plan, act, evaluate, and complete mission-critical workflows at enterprise scale.

This is the difference between an intelligent assistant and an operational AI agent.

Challenges and future directions in agentic stateful systems

While engineering stateful agents is already complex, evolving them into fully agentic systems—capable of planning, tool coordination, iterative reasoning, and cross-system orchestration—introduces a significantly higher order of technical, operational, and ethical challenges.

As AI systems evolve from reactive responders to goal-directed autonomous systems, the engineering burden shifts from prompt optimization to lifecycle governance. Memory is only one dimension. Execution control, tool coordination, reliability, and policy enforcement become equally critical.

Below are the key challenges facing agentic stateful systems—and the emerging directions shaping their future.

Managing architectural complexity

Stateful agentic systems combine:

- Multi-layer memory (working, episodic, long-term)

- Tool ecosystems

- Orchestration graphs

- Policy constraints

- Human-in-the-loop workflows

- Observability frameworks

Each additional layer increases the surface area for failure.

Unlike stateless systems, agentic architectures must:

- Maintain a coherent state across nodes

- Synchronize tool outputs

- Prevent state corruption

- Govern transitions in execution graphs

This complexity requires disciplined system design, rigorous testing, and standardized abstractions.

Future direction:

We are likely to see:

- Standardized memory interfaces

- Modular orchestration primitives

- Auto-managed memory summarization

- Built-in state consistency validation

- Framework-level policy engines

Agentic infrastructure will evolve like distributed systems engineering, shifting from custom implementations to robust, reusable architectural patterns.

Scalability and performance trade-offs

Agentic statefulness introduces computational overhead:

- Retrieval calls

- Graph transitions

- State updates

- Tool invocations

- Long context windows

Balancing performance and contextual richness is a persistent challenge.

Large context windows increase cost and latency. Frequent vector or database lookups introduce bottlenecks. Multi-agent coordination compounds compute demands.

Architectural mitigations include:

- Intelligent caching layers

- Session-level state partitioning

- Memory sharding by tenant or task

- On-demand retrieval strategies

- Hierarchical memory models

Future direction:

Improvements in long-context models, optimized vector search, and adaptive retrieval strategies will reduce latency while preserving reasoning depth. However, enterprise architects must still design for horizontal scalability and resource budgeting.

Consistency and reliability of execution state

Agentic systems mutate state continuously. When multiple processes update shared memory, consistency risks arise.

Challenges include:

- Concurrent state updates

- Corrupted execution trails

- Orphaned workflows

- Partial state writes during failures

State consistency in agentic systems parallels transactional guarantees in database systems.

Emerging best practices include:

- Checkpointing and deterministic execution replay

- Version-controlled memory updates

- Transactional state writes

- Failure recovery mechanisms

- State validation hooks between graph transitions

Reliability becomes non-negotiable when agentic systems operate in regulated or mission-critical domains.

Tool misuse and loop failures

Agentic systems introduce a new class of failure modes beyond simple hallucination.

- Infinite loops: Reasoning–action cycles can become trapped in recursive patterns, repeatedly querying tools without progress.

- Tool hallucination: An agent may fabricate tool outputs or invoke tools incorrectly if structured validation is not enforced.

- Misaligned execution paths: Without clear policy constraints, agents may select suboptimal or irrelevant tools, deviating from intended workflows.

Mitigation strategies include:

- Explicit loop counters

- Retry limits

- Tool invocation validation

- Confidence thresholds

- Structured output schemas

Future systems will likely incorporate formal verification layers to prevent agents from entering uncontrolled execution states.

Evaluation of agentic systems

Traditional evaluation focuses on answer accuracy. Agentic systems require workflow-level evaluation.

Critical metrics include:

- Task completion rate

- Trajectory success (did the agent follow a valid reasoning path?)

- Tool correctness

- Policy compliance

- Cost efficiency

- Latency per completed objective

Evaluating only final outputs obscures systemic issues.

Emerging practices include:

- Step-level trace inspection

- State mutation tracking

- Tool call auditing

- Simulation-based stress testing

Evaluation must shift from response quality to execution quality.

Governance and security in agentic architectures

Persistent state and cross-system autonomy expand the attack surface.

- Prompt injection: Retrieved content may introduce malicious instructions that alter execution logic.

- Data exfiltration risks: Agents with memory may inadvertently leak sensitive historical data.

- Role-Based Access Control: Agents must operate within clearly defined permission scopes.

- Auditability and compliance logging: Every state mutation, tool invocation, and decision must be traceable.

Security measures include:

- Strict role-based data segmentation

- Encrypted memory stores

- Access logging

- Guardrail enforcement layers

- Policy-driven tool invocation controls

As enterprises deploy agentic systems, governance will be as critical as model capability.

Optimizing context utilization

As state accumulates, selecting the right context becomes increasingly complex.

Challenges include:

- Memory overload

- Retrieval noise

- Context irrelevance

- Token inefficiency

Strategies include:

- Recency-based prioritization

- Semantic relevance scoring

- Topic-based segmentation

- Hierarchical memory architectures

- Adaptive retrieval loops

Future advances may include self-managed memory systems where agents decide autonomously what to compress, retain, or discard.

Self-improving agentic systems

One of the most promising frontiers is self-optimization.

Agentic systems can improve through:

- Structured feedback loops

- Reflection mechanisms

- Performance analysis

- Adaptive policy tuning

For example:

- If a retrieval strategy repeatedly underperforms, the agent adjusts it.

- If tool latency exceeds thresholds, the policy layer modifies the invocation strategy.

- If users repeatedly override decisions, the agent refines its decision rules.

However, adaptive systems introduce governance challenges. Uncontrolled self-modification risks drift from enterprise policy.

Future systems will likely balance:

- Adaptive learning

- Controlled update pipelines

- Performance guardrails

- Reset or rollback mechanisms

Ethical and accountability considerations

Agentic stateful systems raise deeper ethical questions:

- How do we prevent learned bias from accumulated state?

- How do we ensure fairness in personalized behaviors?

- How do we explain decisions influenced by historical memory?

- How do we enable users to delete their stored state?

Responsible deployment requires:

- Transparent data retention policies

- User consent and data control

- Explainability mechanisms

- Regular fairness audits

As systems gain autonomy, the requirements for governance, transparency, and accountability become correspondingly stronger.

The road ahead

The evolution from stateful agents to fully agentic systems represents a shift from memory engineering to execution governance.

The central challenges are no longer:

- Can the system remember?

They are now:

- Can the system execute safely?

- Can it reason reliably across tools?

- Can it scale without breaking?

- Can it remain compliant and auditable?

- Can it improve without drifting?

The future of agentic AI will depend on disciplined orchestration architectures, robust policy enforcement, scalable memory management, and trajectory-level evaluation frameworks.

Endnote

The distinction between stateless, stateful, and agentic systems is not merely architectural—it defines the ceiling of what AI can accomplish. Stateless agents respond. Stateful agents remember. Agentic systems execute.

The state alone does not create autonomy; it is the indispensable foundation on which autonomy is built. Without a persistent execution context, there can be no planning, no multi-step coordination, no controlled tool use, and no reliable long-running workflows. As AI systems evolve from reactive interfaces to goal-directed digital operators, state becomes the structural backbone that enables reasoning across time, tools, and tasks.

Modern enterprise applications increasingly demand this level of capability. Organizations are not seeking chat interfaces that generate isolated answers; they require systems that can decompose objectives, orchestrate across databases and APIs, validate intermediate results, incorporate human oversight, and resume execution without loss of continuity. These are not features of simple conversational AI—they are hallmarks of agentic architecture.

ZBrain Builder is positioned not merely as an agentic AI platform, but as an orchestration platform that operationalizes stateful autonomy. It integrates shared execution state, structured tool invocation, multi-agent coordination, policy controls, and observability into a unified enterprise-ready framework. Abstracting away the complexity of execution graphs, lifecycle management, and governance enforcement, it enables organizations to deploy agentic systems without building bespoke orchestration infrastructure from scratch.

For enterprise leaders, the implication is strategic. The value of AI will increasingly depend not on model size alone, but on architectural intelligence—the ability to coordinate memory, tools, policies, and workflows into reliable, auditable systems. Agents that can plan, act, evaluate, and adapt over time will deliver exponentially more operational leverage than systems limited to single-turn responses.

For AI practitioners, the mandate is equally clear. Building the next generation of AI systems requires fluency not only in models, but in state management, orchestration frameworks, evaluation pipelines, and governance controls. The future of AI belongs to agentic systems that combine contextual continuity with disciplined execution.

The industry is moving toward AI that behaves less like a reactive interface and more like a capable digital workforce. Stateful architecture makes this transition possible. Agentic orchestration makes it practical. And platforms designed with these principles at their core are accelerating the shift from conversational AI to autonomous, enterprise-grade intelligence.

The future of AI is not defined by smarter models alone, but by smarter systems. Stateful architecture is the bridge that enables reliable autonomy. Agentic AI is the outcome.

Discover how ZBrain Builder can help you build stateful, context-aware AI agents—start transforming your enterprise workflows today!

Listen to the article

Author’s Bio

An early adopter of emerging technologies, Akash leads innovation in AI, driving transformative solutions that enhance business operations. With his entrepreneurial spirit, technical acumen and passion for AI, Akash continues to explore new horizons, empowering businesses with solutions that enable seamless automation, intelligent decision-making, and next-generation digital experiences.

Table of content

- Stateful vs. stateless agents: Beyond memory retention

- From stateful agents to agentic AI

- Why stateful agents are foundational to agentic AI

- The architecture of stateful agents: Context as execution infrastructure

- ZBrain Builder in action: Orchestrating stateful agentic systems

- What agentic stateful systems enable

- Challenges and future directions in agentic stateful systems

Frequently Asked Questions

What is the difference between stateless, stateful, and agentic AI systems?

Stateless systems process each request independently, with no awareness of prior interactions. They are suitable for simple, one-step tasks such as calculations, fact lookups, or isolated Q&A.

Stateful systems introduce memory. They retain conversation history, task progress, and contextual data across interactions. This enables personalization, continuity, and multi-turn workflows.

Agentic systems go further. They combine persistent state with structured planning, tool invocation, execution loops, and evaluation mechanisms. Instead of merely remembering, they:

- Track objectives

- Decompose goals into sub-tasks

- Invoke external tools deterministically

- Validate intermediate results

- Resume execution across interruptions

Why is state necessary but not sufficient for agentic AI?

Memory alone does not create autonomy.

A stateful chatbot can remember your name or the context of prior conversations. However, without structured planning, tool coordination, and evaluation loops, it remains reactive.

Agentic AI requires:

- Persistent execution state

- Planning–acting–observing cycles

- Structured tool invocation

- Policy-driven control

- Outcome validation

The state is the substrate. Planning, tools, and governance complete the transformation into an autonomous system capable of achieving objectives.

How do agentic systems use planning–acting loops?

Agentic systems operate through iterative execution cycles rather than single-response generation. A typical loop includes:

- Planning the next step based on objectives

- Acting by invoking a tool or retrieving data

- Observing the result

- Updating execution state

- Deciding whether to continue, branch, or terminate

This reasoning–action loop enables multi-step workflows such as compliance validation, financial analysis, research synthesis, and cross-system reconciliation.

The quality of an agentic system is evaluated not only by its final output but by the integrity of its execution path.

How do stateful agents manage memory across tasks and sessions?

Agentic stateful systems typically use multiple memory layers:

- Working memory (context window): Holds immediate reasoning context.

- Episodic memory (session state): Tracks task progress and intermediate outputs.

- Long-term memory (persistent stores): Includes vector databases for semantic recall and structured databases or knowledge graphs for relational reasoning.

Advanced orchestration frameworks manage how state flows across execution nodes, ensuring consistency, persistence, and controlled updates.

What enterprise use cases require agentic stateful systems?

Agentic stateful systems are essential for:

- Multi-step enterprise workflows (Example: procurement, HR onboarding, financial reporting)

- Compliance validation pipelines across policies and regulations

- Automated research and adaptive retrieval systems

- Cross-system data reconciliation between ERP, CRM, and operational tools

- Multi-agent collaboration on complex objectives

These tasks require continuity, tool coordination, and goal tracking—capabilities impossible in stateless systems.

How does ZBrain Builder enable agentic stateful orchestration?

ZBrain Builder operationalizes agentic architecture through:

- Graph-based workflow orchestration

- Shared execution state across nodes and agents

- Structured tool invocation and API integrations

- Memory scopes (No Memory, Crew Memory, Tenant Memory)

- Long-running workflow checkpointing

- Deterministic execution replay

- Built-in policy controls and guardrails

Rather than treating memory as an add-on, ZBrain Builder integrates state as an execution backbone within enterprise workflows.

How does ZBrain’s Agent Crew framework support multi-agent coordination?

Agent Crew enables structured multi-agent orchestration where:

- A supervisor agent delegates tasks

- Specialized sub-agents perform domain-specific actions

- All agents share a centralized execution state

- Intermediate outputs are propagated across nodes

This architecture supports distributed reasoning while maintaining coordinated execution.

Agents collaborate on a shared state rather than operating in isolation.

How does ZBrain Builder integrate tools into agentic workflows?

In ZBrain, tools are modeled as structured execution primitives within the orchestration graph—not as external plugins.

Agents can:

- Invoke APIs

- Query databases

- Retrieve knowledge base entries

- Perform code execution

- Interact with enterprise systems

Each tool call updates the shared execution state, enabling deterministic coordination between generative reasoning and structured computation.

This separation improves reliability, auditability, and enterprise safety.

How does ZBrain Builder ensure governance and compliance in stateful systems?

ZBrain incorporates governance at the orchestration layer through:

- Role-based access control

- Tenant-scoped memory isolation

- Retry and cost limits

- Human-in-the-loop checkpoints

- Execution trace logging

- Thought tracing and decision audit chains

Observability is essential for governing autonomous systems. ZBrain provides trajectory-level inspection, allowing operators to review reasoning paths, tool calls, and state transitions.

This ensures autonomy remains controlled and auditable.

What is the future direction of agentic stateful AI?

The future of agentic stateful AI lies in:

- Smarter memory compression and retrieval

- Self-evaluating agents with reflection loops

- Stronger policy enforcement frameworks

- Formal verification for tool safety

- Scalable trajectory-based evaluation metrics

AI systems are moving from reactive interfaces to governed digital operators.

How do we get started with ZBrain for AI development?

To begin your AI journey with ZBrain:

- Contact us at hello@zbrain.ai

- Or fill out the inquiry form on zbrain.ai

Our dedicated team will work with you to evaluate your current AI development environment, identify key opportunities for AI integration, and design a customized pilot plan tailored to your organization’s goals.

Insights

The agentic enterprise: Why AI success requires an operating model redesign

Organizations that redesign their operating models around agentic AI are beginning to outperform those that apply AI only incrementally.

Enterprise AI pilot-to-production gap: Root causes & how to address them

The underlying cause is structural. In many enterprises, AI pilots are developed on infrastructure that was not designed to support production deployment.

Solution architecture best practices: A guide for enterprise teams

The architecture design process culminates in a set of documented artifacts that communicate the solution to development, operations, and business teams.

Common solution architecture design challenges — and how to overcome them

Solution architecture must evolve from fragmented documentation practices to a structured, collaborative, and continuously validated design capability.

Why structured architecture design is the foundation of scalable enterprise systems

Structured architecture design guides enterprises from requirements to build-ready blueprints. Learn key principles, scalability gains, and TechBrain’s approach.

A guide to intranet search engine

Effective intranet search is a cornerstone of the modern digital workplace, enabling employees to find trusted information quickly and work with greater confidence.

Enterprise knowledge management guide

Enterprise knowledge management enables organizations to capture, organize, and activate knowledge across systems, teams, and workflows—ensuring the right information reaches the right people at the right time.

Company knowledge base: Why it matters and how it is evolving

A centralized company knowledge base is no longer a “nice-to-have” – it’s essential infrastructure. A knowledge base serves as a single source of truth: a unified repository where documentation, FAQs, manuals, project notes, institutional knowledge, and expert insights can reside and be easily accessed.

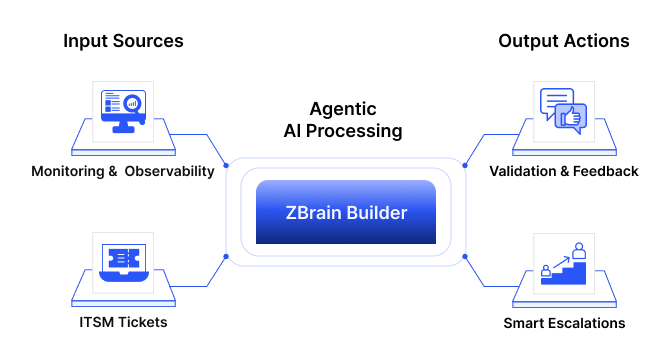

How agentic AI and intelligent ITSM are redefining IT operations management

Agentic AI marks the next major evolution in enterprise automation, moving beyond systems that merely respond to commands toward AI that can perceive, reason, act and improve autonomously.