The AI trust gap: Why governance architecture determines enterprise value

Trust in autonomous AI agents is eroding, even as the technology behind them advances at a pace few enterprise capabilities have ever matched. This decline is not due to AI itself regressing; if anything, model and agent capabilities are advancing faster than enterprises can absorb them. Nor is it happening because the business case has weakened; by most measures, the economic potential of agentic AI continues to strengthen.

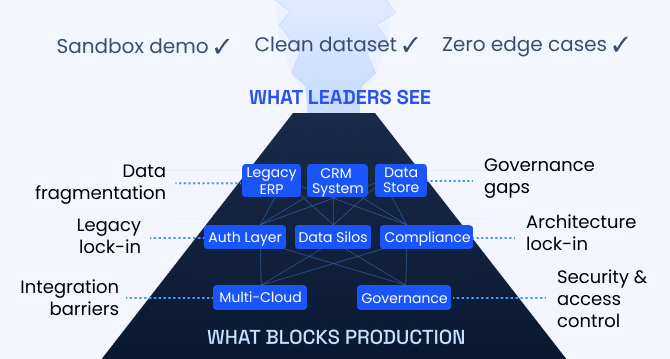

Trust eroded for a different reason. As AI systems became increasingly capable of making consequential decisions without human intervention, many executives responsible for deploying them discovered that they lacked visibility into how those systems were operating. What had previously been treated as a manageable technical limitation—limited transparency into AI decision processes—began to reveal itself as a strategic risk.

This challenge is best understood as a trust gap. It is not primarily a communication problem or a matter of public perception. Rather, it reflects a structural weakness in how many enterprise AI systems are designed and governed.

In many organizations, AI deployments lack the architectural mechanisms needed to monitor how decisions are made, explain those decisions to regulators or customers, intervene when outcomes deviate from expectations, and demonstrate accountability when required. Without these capabilities, organizations struggle to scale autonomous systems responsibly.

A growing divide is emerging as a result. Companies that are generating sustained value from AI have invested in architectures and governance structures that make AI systems observable, auditable, and controllable. Many others have not.

Understanding why this gap exists, what it costs organizations, and what it takes to close it has become a central challenge for executives seeking to scale AI responsibly. For many enterprises, the opportunity to address the problem proactively—before regulatory scrutiny or operational failures force the issue—may be narrower than it appears.

Table of contents

- Adoption without scale

- The constraint hiding in plain sight

- When opacity becomes a board-level risk

- The governance premium

- Four layers that make AI governable

- Engineering for accountability

- The operating model that governance requires

- Sectors where AI transparency standards are becoming more rigid

- The regulatory wave that has already arrived

- What the leaders actually do

Adoption without scale

Recent statistics on enterprise AI adoption are striking. McKinsey’s 2025 State of AI survey[1], which gathered responses from nearly 2,000 executives across 105 countries, reports that 88% of organizations now use artificial intelligence in at least one business function. This figure has risen rapidly, from 78% just one year earlier and from roughly half of organizations only three years ago. By this measure, AI has moved from experimental technology to operational infrastructure at a pace rarely seen in enterprise software adoption.

Yet the headline adoption figures conceal a more complex reality. While AI use is widespread, the organizational trust and governance structures required to scale it remain far less developed.

IBM’s 2024 CEO Study[2], based on interviews with 3,000 chief executives across more than 30 countries, highlights the magnitude of this gap. The study finds that 75% of CEOs believe trusted AI is impossible without effective governance, yet only 39% report having strong governance mechanisms in place for generative AI today. The resulting 36-point gap between executive expectations and organizational capability represents a critical barrier to scaling AI across the enterprise.

Other research points to the consequences of this gap. A 2025 Capgemini Research Institute survey[3] of 1,500 senior executives across 14 countries found that trust in fully autonomous AI agents fell from 43% to 27% in a single year. As AI systems became more capable and autonomous, executive confidence in them declined rather than increased. The issue is not the maturity of the technology itself; it is the absence of governance structures capable of making those systems understandable, controllable, and accountable.

Evidence suggests that this governance deficit remains widespread. IBM’s research[4] on AI ethics and governance indicates that only 49% of organizations report fully transparent AI decision-making processes. In other words, more than half of enterprises deploying AI today cannot clearly explain—using verifiable evidence rather than general assurances—how their systems arrive at consequential decisions.

When such transparency is absent, organizations lack the mechanisms needed to investigate failures or assign responsibility. Without decision logs, traceable reasoning pathways, or clearly defined ownership, problematic outcomes can be difficult to diagnose and correct.

The broader implications are becoming visible. According to Stanford HAI’s 2025 AI Index Report[5], the number of AI-related incidents recorded in the AI Incident Database reached 233 cases in 2024, representing a 56.4% increase from the previous year and the highest level on record. These incidents include credit decisions influenced by biased training data, hiring algorithms that produced discriminatory outcomes, clinical recommendations issued without adequate oversight, and customer-facing decisions generated without auditable justification.

In this context, the central strategic question facing organizations is no longer whether they are adopting AI. Most already have. The more consequential issue is whether they have established the architectural, governance, and accountability infrastructure necessary to manage and scale it responsibly. That distinction increasingly separates the organizations generating sustained value from AI from those that struggle to translate adoption into meaningful business impact.

The constraint hiding in plain sight

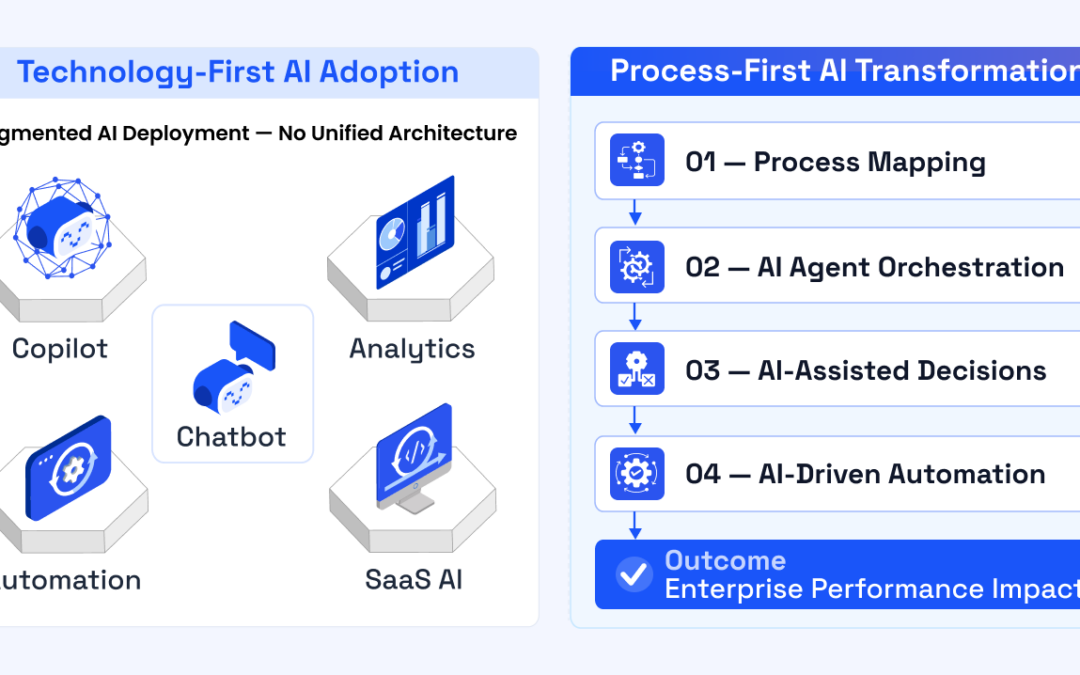

To understand why enterprise AI is not scaling as quickly as expected, it is important to identify the true constraint. The limitation is not model capability. Advances in large language models and other AI systems have accelerated at a pace that would have seemed improbable only a few years ago. Nor is the primary barrier data infrastructure. Although integrating enterprise data remains a significant challenge, organizations are steadily investing to address it.

The more fundamental constraint is organizational. Most enterprises lack the governance structures required to operate AI at consequential scale without exposing themselves to legal, reputational, or financial risk. In other words, the central challenge is not capability but governability.

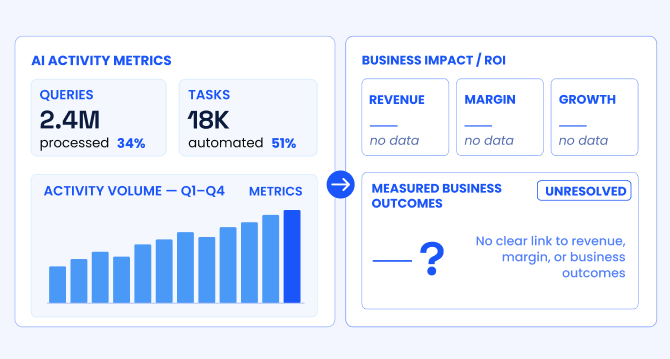

Performance data increasingly supports this conclusion. McKinsey’s 2025 research[6] on AI adoption finds that only 6% of organizations qualify as AI “high performers,” defined as companies generating at least 5% of EBIT from AI initiatives. A 2025 study by Boston Consulting Group[7] examining 1,250 global companies reached a similar conclusion: only 5% of organizations report meaningful financial returns from AI, while approximately 60% report little or no measurable benefit despite significant investment. Earlier BCG research in 2024[8] reported that just 4% of companies were creating substantial value from AI. Across different methodologies and datasets, the findings are consistent. AI adoption is widespread, but enterprise-scale value creation remains rare.

What distinguishes the small group of organizations generating sustained value is not the sophistication of the models they deploy. Instead, it is the presence of governance structures that allow AI systems to operate reliably and accountably. McKinsey finds[9] that AI high performers are three times more likely than their peers to implement formal human-in-the-loop validation checkpoints. Approximately 65% of high-performing organizations maintain such oversight mechanisms, compared with about 20% of others. They are also significantly more likely to redesign workflows to incorporate structured AI supervision and to have senior executives visibly accountable for AI outcomes across the organization. These differences reflect governance practices rather than purely technological investments.

The importance of governance becomes even more pronounced as AI evolves from analytical and generative applications toward autonomous agency. Deloitte’s 2026 State of AI in the Enterprise report[10], based on responses from 3,235 senior leaders across 24 countries, finds that the use of agentic AI systems is expected to rise from 23% of organizations today to approximately 74% within two years. At the same time, only 21% of organizations report having a mature governance framework capable of managing autonomous agents.

This gap is significant. Within a relatively short timeframe, many enterprises plan to deploy AI systems capable of making consequential decisions—approving credit, prioritizing medical cases, screening job candidates, or executing financial transactions—without having established the governance structures necessary to oversee those actions effectively.

The result is a widening governance implementation gap. During this period, organizations may deploy increasingly autonomous AI systems without the institutional mechanisms needed to monitor, explain, and control their decisions. For enterprises operating at scale, this gap represents more than an operational challenge—it represents a growing risk exposure as AI becomes embedded in critical business processes.


When opacity becomes a board-level risk

Discussions about AI governance are often framed primarily in ethical terms—focusing on values such as fairness, non-discrimination, and explainability. These considerations are important, but framing governance solely as an ethical issue understates the magnitude of the risk organizations now face. Increasingly, AI opacity represents not just an ethical concern but a material enterprise risk exposure.

Recent research quantifies this shift. Gartner’s 2025 Market Guide[11] for AI Trust, Risk, and Security Management reports that through 2026, at least 80% of unauthorized AI-related incidents are expected to originate from internal violations rather than external attacks. These incidents include information oversharing, inappropriate system use, or autonomous AI behavior that diverges from its intended purpose. In other words, the primary source of risk lies not in hostile external actors but in the unguarded internal deployment of AI systems. Gartner further estimates[12] that AI-related regulatory violations could contribute to a 30% increase in legal disputes for technology companies by 2028.

Regulatory developments have already begun to translate this risk into direct financial exposure. The European Union’s AI Act, which entered into force in August 2024, sets its highest penalty tier at up to €35 million or 7% of global annual turnover[13], whichever is higher. That tier applies to prohibited AI practices; non-compliance with the obligations for high-risk AI systems carries fines of up to €15 million or 3% of global annual turnover, whichever is higher. For a multinational organization generating €10 billion in annual revenue, these tiers correspond to exposures of up to €700 million and €300 million respectively. Importantly, triggering a penalty does not require a sophisticated cyberattack or system breach. It may simply involve deploying AI systems in regulated domains (such as credit decisions, hiring processes, health care applications, or law enforcement) without the logging, transparency, and human oversight mechanisms required under the regulation.
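
The "whichever is higher" rule can be checked with a few lines of arithmetic. The following is a minimal sketch that assumes only the €35 million and 7% thresholds quoted above; the function and constant names are illustrative and do not come from the regulation.

```python
# Thresholds as quoted in the text above (illustrative constant names).
FIXED_AMOUNT_EUR = 35_000_000    # EUR 35 million
TURNOVER_SHARE_PERCENT = 7       # 7% of global annual turnover


def max_penalty_eur(global_annual_turnover_eur: int) -> int:
    """Return the upper bound of the fine: the higher of the two thresholds."""
    # Integer arithmetic keeps the result exact for whole-euro inputs.
    turnover_based = global_annual_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_AMOUNT_EUR, turnover_based)


# EUR 10 billion turnover: the 7% branch dominates.
print(max_penalty_eur(10_000_000_000))  # 700000000
# EUR 100 million turnover: 7% is EUR 7 million, so the fixed amount applies.
print(max_penalty_eur(100_000_000))     # 35000000
```

The percentage branch overtakes the fixed amount once turnover exceeds €500 million, which is why the exposure scales with company size for large enterprises.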

Governance frameworks increasingly emphasize the structural nature of this risk. The NIST AI Risk Management Framework, widely used as a reference standard for AI governance in the United States, identifies system opacity and insufficient documentation of AI decision processes as primary obstacles to effective risk management. Without transparency into how decisions are produced, organizations cannot reliably monitor system behavior, investigate failures, or demonstrate accountability.

Industry data suggests that many enterprises remain unprepared for this challenge. In Gartner’s 2025 survey[14] of 360 organizations, more than 70% of IT leaders identified regulatory compliance as one of the three most significant barriers to deploying generative AI, yet only 23% expressed strong confidence in their organization’s ability to manage the associated security and governance requirements. Awareness of the issue is widespread, but the underlying governance architecture often remains incomplete.

For boards and executive teams, the implications are increasingly concrete. If an AI system within the organization were to make a consequential decision—such as denying a loan application, rejecting a job candidate, or recommending a clinical intervention—could the organization produce a verifiable record of how that decision was made? This would include the decision log, the model version deployed, the input data used, the reasoning pathway, and the accountable individual responsible for oversight.

If any of those elements are missing, the exposure is not hypothetical. It represents a governance gap that can translate directly into regulatory, legal, and reputational risk.
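
The board-level question above amounts to a completeness check over five elements. A minimal sketch of such a check follows; the class `DecisionEvidence`, its field names, and the sample values are hypothetical and chosen only to mirror the list in the text.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class DecisionEvidence:
    """The five elements named in the text; field names are hypothetical."""
    decision_log: Optional[str] = None
    model_version: Optional[str] = None
    input_data: Optional[str] = None
    reasoning_pathway: Optional[str] = None
    accountable_owner: Optional[str] = None


def missing_elements(evidence: DecisionEvidence) -> List[str]:
    """List every element that is absent or empty, in declaration order."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


# Example: three of the five elements can be produced, two cannot.
evidence = DecisionEvidence(
    decision_log="log-entry-001",
    model_version="credit-model-1.4",
    input_data="application-123",
)
print(missing_elements(evidence))  # ['reasoning_pathway', 'accountable_owner']
```

An empty result means the record is complete in this sketch's sense; any non-empty result identifies the specific gap.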

The governance premium

One of the most important—and often counterintuitive—findings emerging from recent research on enterprise AI is that strong governance practices do not hinder performance. On the contrary, organizations that invest seriously in AI governance, transparency, and responsible AI frameworks consistently outperform their peers across multiple performance indicators.

PwC’s quantitative analysis[15] of responsible AI investment provides one of the clearest demonstrations of this effect. The firm compared organizations that allocate an additional 10% of their AI budgets to comprehensive governance programs with those that spend only enough to meet minimal compliance requirements. The results show that companies investing more heavily in governance achieve valuations up to 4% higher and revenue up to 3.5% higher than their peers. PwC attributes this effect to what its researchers describe as a “trust halo”—the cumulative commercial value created when AI systems are explainable, auditable, and defensible. Over time, this transparency strengthens confidence among customers, employees, investors, and regulators, producing measurable economic benefits.

Research[16] conducted by the Notre Dame–IBM Technology Ethics Lab, based on a survey of 915 executives across 19 countries, identifies an even more pronounced performance advantage at higher investment levels. Organizations allocating more than 10% of their AI budgets to ethics and governance initiatives generate 30% higher operating profit from AI investments than companies allocating 5% or less. These organizations also report 22% higher customer satisfaction and retention, 20% fewer operational incidents, and 19% higher internal AI adoption rates. In other words, governance does not improve just one dimension of performance; it strengthens several simultaneously.

Capgemini’s research[17] highlights another dimension of the governance advantage: speed of value realization. Organizations that establish governance frameworks before scaling their AI initiatives achieve return on investment approximately 45% faster than organizations that attempt to retrofit governance after deployment. In practice, governance-first strategies reduce the operational disruptions associated with incident remediation, regulatory intervention, and reputational recovery that frequently follow poorly governed AI deployments.

This finding challenges a common assumption in executive discussions about AI. Governance is often perceived as a constraint on innovation or speed. In reality, poorly governed systems tend to generate hidden costs that slow progress over time. Systems that appear to move quickly during early deployment often encounter delays later as organizations address unexpected failures, compliance issues, or reputational risks.

The potential downside of insufficient governance further reinforces this point. PwC’s modelling[18] of significant AI-related incidents shows that public trust in an affected company can decline by at least 20% immediately following a major AI failure, with recovery occurring only gradually even among organizations with established governance frameworks. In severe scenarios, the resulting reputational and financial impact can lead to company valuation losses of up to 50% within the first two weeks following an incident.

In this context, the perceived short-term efficiency gains from deploying AI systems without sufficient governance represent a fragile advantage. As Stanford HAI’s data[19] illustrates—with AI-related incidents increasing by 56% in a single year—the risks associated with opaque or poorly governed systems are growing rapidly. Organizations that treat governance as a strategic capability rather than a compliance obligation are therefore better positioned not only to manage risk but also to capture the full performance potential of AI.

Four layers that make AI governable

If transparency is the objective of AI governance, architecture is the mechanism that enables it. Emerging governance frameworks—including the European Union’s AI Act, the NIST AI Risk Management Framework, and Gartner’s AI Trust, Risk, and Security Management (TRiSM) model—converge on a common architectural principle: effective governance depends on a set of operational capabilities embedded directly into AI systems.

These capabilities can be understood as four interdependent layers. Each layer contributes a necessary element of governance, and the absence of any one of them weakens the effectiveness of the others.

Decision visibility

The first layer is decision visibility. Organizations must be able to record and examine how AI systems arrive at consequential decisions. This requires automated, real-time logging at the point of execution rather than post hoc reconstruction. Key elements include the input data used by the model, the model version deployed, the output generated, and the thresholds or parameters guiding the decision.

Regulatory and governance frameworks increasingly treat this capability as foundational. Article 12 of the EU AI Act requires logging mechanisms for high-risk AI systems. Similarly, the NIST AI Risk Management Framework embeds traceability within its “Govern” and “Measure” functions. Gartner’s TRiSM research[20] highlights the operational implication: without continuous monitoring and automated guardrails, governance policies have limited impact in production environments. In practice, the effectiveness of governance depends not on policy documentation but on system-level decision logs.
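
Logging at the point of execution, rather than reconstructing decisions afterwards, can be sketched as a thin wrapper around the model call. The example below is illustrative only: `logged_decision`, the record layout, and the toy scoring function are assumptions, not an interface prescribed by any of the frameworks cited above. Inputs are assumed to be JSON-serializable.

```python
import json
from datetime import datetime, timezone
from typing import Any, Callable, Dict, List


def logged_decision(
    model: Callable[[Dict[str, Any]], float],
    model_version: str,
    threshold: float,
    sink: List[str],
) -> Callable[[Dict[str, Any]], bool]:
    """Wrap a scoring function so every decision is logged when it is made."""

    def decide(inputs: Dict[str, Any]) -> bool:
        score = model(inputs)
        approved = score >= threshold
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "inputs": inputs,
            "score": score,
            "threshold": threshold,
            "output": approved,
        }
        # Append before returning, so no decision leaves without a log entry.
        sink.append(json.dumps(record, sort_keys=True))
        return approved

    return decide


def toy_model(inputs: Dict[str, Any]) -> float:
    """Hypothetical stand-in for a real model."""
    return 0.8 if inputs["income"] > 50_000 else 0.3


audit_log: List[str] = []
decide = logged_decision(toy_model, "toy-1.0", threshold=0.5, sink=audit_log)
decide({"income": 60_000})
print(len(audit_log))  # 1
```

The design choice worth noting is that the caller never invokes the model directly; the only path to a decision passes through the code that writes the log entry.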

Controllability

The second layer is controllability. AI systems operating within consequential workflows must incorporate mechanisms that allow humans to review, approve, or override outputs before actions are executed. These control points must be embedded directly into system design rather than existing only as theoretical safeguards.

This principle is reflected in Article 14 of the EU AI Act, which mandates built-in human oversight mechanisms for high-risk AI deployments. Research on organizational AI performance also underscores the value of such controls. McKinsey’s studies[21] of AI high performers show that approximately 65% of leading organizations implement formal human-in-the-loop checkpoints, compared with roughly 23% of average organizations.
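
A control point that is embedded in system design, as opposed to a theoretical safeguard, means the action cannot run without a human verdict. The sketch below illustrates that idea under stated assumptions: the names `Proposal`, `Review`, and `execute_with_checkpoint` are hypothetical, and the reviewer is modeled as a plain callback.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Review(Enum):
    APPROVE = "approve"
    OVERRIDE = "override"
    REJECT = "reject"


@dataclass
class Proposal:
    action: str       # what the AI system wants to do
    rationale: str    # explanation shown to the reviewer


def execute_with_checkpoint(
    proposal: Proposal,
    reviewer: Callable[[Proposal], Review],
    execute: Callable[[str], None],
    override_action: Optional[str] = None,
) -> str:
    """Run an action only after a human verdict; return what happened."""
    verdict = reviewer(proposal)
    if verdict is Review.APPROVE:
        execute(proposal.action)
        return "executed"
    if verdict is Review.OVERRIDE and override_action is not None:
        execute(override_action)
        return "overridden"
    # REJECT, or OVERRIDE without a replacement action: nothing is executed.
    return "blocked"


executed_actions = []
proposal = Proposal(action="approve_loan", rationale="score 0.91 above cutoff")
outcome = execute_with_checkpoint(
    proposal, lambda p: Review.REJECT, executed_actions.append
)
print(outcome, executed_actions)  # blocked []
```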

Accountability

The third layer is accountability. For every AI system responsible for consequential decisions, a clearly identified individual must be accountable for its deployment, operation, and outcomes. Effective governance requires more than shared oversight or committee structures; it requires explicit ownership.

In many organizations, this is where governance frameworks encounter practical difficulties. While policies often articulate high-level principles of responsible AI, responsibility for individual systems may remain diffuse or undefined. Without clearly assigned accountability, organizations struggle to respond effectively when failures occur or regulatory scrutiny arises.

Defensibility

The fourth layer is defensibility—the ability to demonstrate, under external scrutiny, that an AI system operates within defined parameters and governance controls. Defensibility emerges when the preceding layers function together: decisions are traceable, oversight mechanisms exist, accountability is assigned, and comprehensive records are available for review.

This capability is increasingly important as regulators, courts, and investors demand greater transparency around automated decision-making. Organizations must be able to demonstrate that AI systems operate with appropriate safeguards, including detailed logging, structured human oversight, and clearly documented ownership.

Taken together, these four layers transform governance from a conceptual commitment into an operational capability. When embedded into system architecture, they enable organizations to deploy AI at scale while maintaining the transparency, accountability, and control necessary to manage risk and sustain trust.


Engineering for accountability

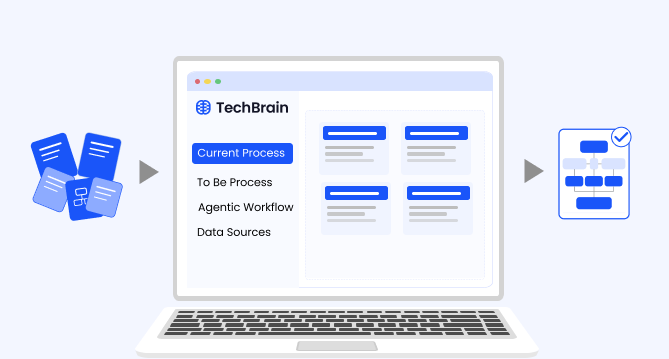

The governance frameworks discussed earlier establish what responsible AI requires. The next question is how organizations translate those requirements into operational systems. Increasingly, this challenge is addressed through what can be described as a “glass-box” architecture—an approach in which AI systems are designed for observability and accountability from the outset.

The central principle of this approach is straightforward: AI systems must be built to be observable during operation rather than retrofitted with transparency after problems arise. The difference between these two approaches is substantial. Systems designed with built-in observability can support governance in real time, whereas systems retrofitted after deployment often struggle to provide the information necessary for monitoring, auditing, or regulatory review.

Evidence suggests that many organizations have yet to establish this architectural foundation. Deloitte’s 2026 State of AI research[22] finds that only 21% of organizations report having a mature governance model capable of managing autonomous agents, even though approximately 80% plan significant agentic AI deployments within the next two years. Capgemini’s research[23] produces similar findings, reporting that fewer than one in five organizations consider their data and technology infrastructure sufficiently mature to support agentic AI at scale. Gartner’s 2025 survey[24] of 360 organizations further indicates that companies deploying dedicated AI governance platforms are 3.4 times more likely to achieve high governance effectiveness than those attempting to manage AI risk using general-purpose tools. These results reinforce an important conclusion: effective governance is primarily an architectural capability rather than a policy objective.

Organizations that successfully operationalize AI governance typically adopt several architectural disciplines.

Logging as infrastructure

Decision logs, model version histories, input–output records, and confidence scores must be treated as core enterprise data assets. These records should be stored, retained, and made queryable with the same rigor applied to financial or operational data. Regulatory frameworks increasingly expect such capabilities. The EU AI Act, for example, mandates logging and record retention for high-risk AI systems, while the NIST AI Risk Management Framework, although voluntary, calls for documentation throughout the AI lifecycle. These expectations can only be met if systems generate the necessary records automatically and continuously during operation.
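
Treating decision records as queryable data can be illustrated with an ordinary relational table. The following sketch uses Python's standard `sqlite3` module with an in-memory database; the table name, column names, and helper functions are assumptions made for the example, not a schema defined by any regulation or vendor.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS decision_log (
    id            INTEGER PRIMARY KEY AUTOINCREMENT,
    decided_at    TEXT NOT NULL,   -- ISO 8601 timestamp
    system_name   TEXT NOT NULL,
    model_version TEXT NOT NULL,
    input_json    TEXT NOT NULL,
    output_json   TEXT NOT NULL,
    confidence    REAL
)
"""


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the decision store and make sure the table exists."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def record_decision(conn, decided_at, system_name, model_version,
                    input_json, output_json, confidence):
    """Insert one decision record using bound parameters."""
    conn.execute(
        "INSERT INTO decision_log "
        "(decided_at, system_name, model_version, input_json, output_json, confidence) "
        "VALUES (?, ?, ?, ?, ?, ?)",
        (decided_at, system_name, model_version, input_json, output_json, confidence),
    )
    conn.commit()


def decisions_by_version(conn, system_name, model_version):
    """Return (decided_at, output_json, confidence) rows, oldest first."""
    cur = conn.execute(
        "SELECT decided_at, output_json, confidence FROM decision_log "
        "WHERE system_name = ? AND model_version = ? ORDER BY decided_at",
        (system_name, model_version),
    )
    return cur.fetchall()


conn = open_store()
record_decision(conn, "2025-01-01T10:00:00Z", "credit", "v1",
                '{"income": 60000}', '{"approved": true}', 0.91)
print(len(decisions_by_version(conn, "credit", "v1")))  # 1
```

A query such as "every decision made by version v1 of the credit system" is the kind of request an auditor is likely to make, which is why model version is stored as a first-class column here.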

Workflow design with explicit decision checkpoints

AI-enabled workflows should incorporate clearly defined points at which human reviewers can evaluate, approve, or override system outputs before consequential actions are executed. Embedding these checkpoints into process design ensures that human oversight remains integrated into decision-making processes rather than applied only after errors occur.

Model lifecycle governance

AI systems require ongoing monitoring and maintenance. Models may drift over time, training data may become outdated, and regulatory expectations may evolve. Effective governance therefore requires structured processes for model evaluation, retraining, validation, and retirement as conditions change.
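
Model drift can be made measurable by comparing the distribution of a model input or score at deployment time with its current distribution. The text does not name a specific metric; the sketch below uses the population stability index (PSI) as one common choice, and the bin edges, sample data, and function names are assumptions for illustration. Both samples are assumed to be non-empty.

```python
import math
from typing import List, Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values in each bin defined by ascending inner edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        idx = sum(1 for e in edges if v >= e)   # number of edges at or below v
        counts[idx] += 1
    total = len(values)
    return [c / total for c in counts]


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    edges: Sequence[float],
    floor: float = 1e-6,
) -> float:
    """PSI = sum over bins of (cur - base) * ln(cur / base)."""
    base = bin_shares(baseline, edges)
    cur = bin_shares(current, edges)
    psi = 0.0
    for b, c in zip(base, cur):
        b, c = max(b, floor), max(c, floor)     # avoid log(0) on empty bins
        psi += (c - b) * math.log(c / b)
    return psi


baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
current_scores = [0.6, 0.7, 0.8, 0.9, 0.6, 0.7, 0.8, 0.1]
# Identical distributions give exactly zero; a shift gives a positive value.
print(population_stability_index(baseline_scores, baseline_scores, [0.5]))  # 0.0
print(population_stability_index(baseline_scores, current_scores, [0.5]) > 0)  # True
```

What PSI level should trigger revalidation or retraining is a policy decision for the accountable owner; the sketch only produces the number.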

Stakeholder transparency architecture

Different stakeholders require different forms of visibility into AI systems. Regulators may require detailed audit trails. Affected individuals may require clear explanations of how decisions were made. Boards and executives require risk dashboards that summarize system behavior. Engineers require performance metrics that enable operational monitoring and improvement. These transparency outputs must be designed into the system architecture from the outset rather than generated ad hoc in response to inquiries.

The importance of this architectural approach extends beyond governance alone. Gartner predicts[25] that by 2028, approximately 25% of enterprise security breaches will be linked to the misuse or abuse of AI agents by internal or external actors. Systems lacking observability therefore create simultaneous vulnerabilities for both governance and cybersecurity functions. In this context, glass-box architecture serves not only as a governance mechanism but also as a critical component of enterprise risk management.

The operating model that governance requires

Technology architecture alone cannot deliver effective AI governance. Even systems designed with strong observability and control mechanisms require an organizational operating model that translates those technical capabilities into clear accountability. Governance becomes meaningful only when supported by defined roles, decision rights, review processes, and enforcement mechanisms.

For most organizations, the governance journey begins at the board level. Deloitte’s 2025 Global Boardroom Survey[26], based on responses from 695 board members and executives across 56 countries, reports that 31% of boards still do not include AI as a regular agenda item. Although this represents an improvement from 45% in the previous year, the finding remains notable given that key provisions of the EU AI Act governing high-risk AI systems will take full effect in August 2026. The same survey indicates that nearly one-third of respondents believe their organizations are not yet prepared to deploy AI at scale, even as many of those organizations continue expanding AI initiatives. This disconnect between readiness and deployment suggests that AI-related risk may be accumulating faster than governance structures are evolving to manage it.

Research[27] by MIT Sloan Management Review and Boston Consulting Group, drawing on responses from more than 1,200 executives, highlights a related governance gap. While 82% of executives agree that responsible AI should be a top management priority, only 55% report that it currently receives that level of attention within their organizations. The resulting 27-point gap between stated importance and operational focus reflects less a lack of awareness than the absence of governance structures that embed oversight into regular organizational processes. At the same time, IBM’s CEO research[28] indicates that 61% of chief executives are pushing AI adoption faster than some parts of their organizations are comfortable with, creating pressure that can widen governance gaps during rapid deployment.

Organizations seeking to govern AI effectively typically establish three structural elements within their operating models.

Clear separation of governance responsibilities

Effective oversight requires clearly defined ownership across multiple functions. Risk management teams define risk appetite and establish consequences for policy violations. Legal teams oversee regulatory compliance and manage litigation exposure. Technology organizations maintain the technical architecture required for logging, monitoring, override mechanisms, and model lifecycle governance. Business units remain accountable for the outcomes of AI-enabled processes, including the use cases deployed and the human review checkpoints embedded in those workflows. When these responsibilities are not clearly defined, governance tends to become diffuse and difficult to enforce.

Structured governance cadence

Oversight mechanisms must operate on a recurring schedule rather than only in response to incidents. Many organizations are beginning to institutionalize AI governance through regular review cycles: quarterly reporting to boards, monthly executive oversight meetings, and continuous operational monitoring through automated system dashboards. Gartner predicts[29] that by 2029 approximately 10% of global boards will use AI-generated analysis to challenge executive decisions that materially affect business performance, suggesting that board-level AI oversight is likely to evolve into a more active governance function.

Audit-ready system design

Organizations increasingly recognize the importance of designing AI systems with regulatory review in mind from the outset. Systems should be documented and monitored as though they will be subject to external audit, because in many industries they will be. Deloitte’s guidance[30] on leading AI governance emphasizes that companies should proactively track regulatory developments and build systems capable of demonstrating safety, fairness, and compliance through structural evidence rather than retrospective explanation. Adopting this audit-ready posture helps ensure that organizations can provide regulators, courts, and stakeholders with the documentation required to demonstrate responsible system operation.

Taken together, these structural elements transform governance from a policy commitment into an operational capability. When combined with transparent system architecture and disciplined oversight practices, they enable organizations to scale AI deployment while maintaining the accountability and risk controls necessary for sustained enterprise adoption.

Sectors where AI transparency standards are becoming more rigid

For organizations operating in regulated industries, transparency in AI systems is not merely a strategic objective—it is increasingly a legal requirement. In several critical sectors, regulatory expectations regarding explainability, documentation, and oversight predate the current wave of AI governance debates. What is changing is that these existing regulatory obligations are now being explicitly applied to AI systems, creating compliance requirements that many organizations are only beginning to assess.

Financial services

In financial services, the Federal Reserve’s SR 11-7 model risk management guidance[31], reinforced through subsequent guidance from the Office of the Comptroller of the Currency (OCC) and the Consumer Financial Protection Bureau (CFPB), requires financial institutions to maintain documented model development processes, independent validation, and continuous performance monitoring for models used in consequential decision-making. These requirements apply regardless of whether a system relies on traditional statistical techniques or advanced machine learning.

As a result, any AI system used for credit scoring, trading, underwriting, or risk assessment must be able to produce documentation explaining how decisions are generated, demonstrate that the model has undergone independent validation, and provide evidence of ongoing monitoring. Systems that cannot support these requirements risk noncompliance with established model risk management standards even in the absence of new AI-specific legislation.

Healthcare

In healthcare, regulatory expectations are defined in part by the U.S. Food and Drug Administration’s Action Plan for Artificial Intelligence and Machine Learning–Based Software as a Medical Device (SaMD)[32]. The FDA requires developers to demonstrate algorithmic transparency, evidence generation, bias assessment, and clear protocols for model updates when AI systems are used to support clinical decision-making.

AI applications that influence diagnosis, treatment recommendations, or patient management must therefore provide sufficient transparency for clinicians and regulators to understand how the system functions. Failure to meet these requirements can lead to delayed regulatory approval, expanded post-market surveillance obligations, or enforcement actions. In practice, transparency is treated as a prerequisite for market access rather than a feature to be addressed after deployment.

Employment and workforce management

Regulatory scrutiny is also increasing in employment-related uses of AI. In 2023, the U.S. Equal Employment Opportunity Commission (EEOC)[33] issued technical guidance clarifying that Title VII of the Civil Rights Act applies to AI-driven hiring, screening, and promotion tools. Employers deploying automated decision systems must therefore ensure that these systems do not produce discriminatory outcomes or disparate impacts across protected groups.

When organizations rely on opaque AI screening tools, they may struggle to demonstrate compliance if challenged. In such cases, the absence of explainability may itself be interpreted as evidence that adequate oversight mechanisms were not in place. For companies using AI in hiring or workforce evaluation at scale, explainability has therefore become an immediate compliance consideration rather than a future regulatory possibility.
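One way to make the disparate-impact question concrete is the selection-rate comparison often called the four-fifths rule, a long-standing rule of thumb in U.S. employee-selection guidance. The Python sketch below computes each group's selection rate relative to the highest group's rate and flags ratios below 0.8. The class and function names, the sample counts, and the 0.8 default are illustrative assumptions rather than anything prescribed by the EEOC guidance cited above, and a ratio below the threshold is a prompt for closer review, not a legal finding.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class GroupOutcome:
    """Screening outcomes for one applicant group (illustrative data only)."""
    group: str
    applicants: int
    selected: int

    @property
    def selection_rate(self) -> float:
        return self.selected / self.applicants


def impact_ratios(outcomes: List[GroupOutcome]) -> Dict[str, float]:
    """Return each group's selection rate divided by the highest group's rate."""
    if not outcomes:
        raise ValueError("at least one group is required")
    if any(o.applicants <= 0 for o in outcomes):
        raise ValueError("every group needs at least one applicant")
    top_rate = max(o.selection_rate for o in outcomes)
    if top_rate == 0:
        raise ValueError("no group has any selections; ratios are undefined")
    return {o.group: o.selection_rate / top_rate for o in outcomes}


def flag_for_review(outcomes: List[GroupOutcome], threshold: float = 0.8) -> List[str]:
    """List groups whose impact ratio falls below the (assumed) threshold."""
    return [g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold]


# Hypothetical screening results from an automated hiring tool.
sample = [
    GroupOutcome("group_a", applicants=200, selected=60),  # selection rate 0.30
    GroupOutcome("group_b", applicants=150, selected=30),  # selection rate 0.20
]
print(impact_ratios(sample))
print(flag_for_review(sample))
```

Running a check like this on a schedule, and keeping the results, gives an employer a record to point to if the tool's outcomes are later challenged.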

Insurance

In the insurance sector, the National Association of Insurance Commissioners (NAIC)[34] adopted an AI Model Bulletin in December 2023, which state regulators have since been adopting, setting the expectation that insurers can explain AI-driven underwriting and pricing decisions to regulators and policyholders. Insurers must be able to demonstrate the basis on which algorithmic pricing or risk assessments are generated and confirm that these systems comply with consumer protection and anti-discrimination standards.

As regulatory scrutiny increases across U.S. jurisdictions—and with the EU AI Act classifying insurance access decisions as high-risk AI applications—organizations using opaque pricing models face growing regulatory and legal exposure.

Public sector and critical infrastructure

For public sector institutions and organizations operating critical infrastructure, transparency obligations are expanding even further. Annex III of the EU AI Act[35] identifies several categories of AI deployment as high-risk, including systems used in:

- critical infrastructure management

- employment and worker management

- access to education

- access to essential services such as credit

- law enforcement and border control

- judicial and democratic processes

AI systems operating within these domains must meet stringent requirements for auditability, automated logging, human oversight, and transparency documentation. Beginning in August 2026, these requirements will become mandatory conditions for lawful deployment within the European Union.

Across these sectors, the direction of regulatory policy is clear. Transparency is no longer treated solely as a desirable characteristic of responsible AI systems; it is increasingly embedded in the existing legal and supervisory frameworks that govern consequential decisions. Organizations deploying AI in regulated environments must therefore ensure that their systems are capable of meeting these requirements as a matter of compliance, not just strategy.

The regulatory wave that has already arrived

In executive discussions, AI regulation is often treated as a future development, something organizations should monitor while continuing to scale deployments. Recent evidence suggests that this assumption no longer reflects reality. According to the Stanford Human-Centered Artificial Intelligence (HAI) 2025 AI Index Report[36], regulatory activity around AI has already accelerated significantly.

In 2024, U.S. federal agencies introduced 59 AI-related regulatory actions, more than double the 25 recorded in 2023. These actions were issued by 42 different federal agencies, compared with 21 agencies the previous year. The increase reflects not only growing regulatory attention but also a broadening distribution of oversight responsibilities across government institutions. AI governance is no longer confined to a small number of specialized regulators; it is emerging across the broader administrative landscape.

Legislative activity at the state level has also expanded rapidly. In 2024, U.S. state legislatures proposed 629 AI-related bills and enacted 131 into law[37]. Among the most significant developments is Colorado’s AI Act, widely considered one of the most comprehensive state-level frameworks in the United States. The law establishes requirements related to transparency, bias impact assessments, and accountability mechanisms for AI systems involved in consequential decision-making affecting individuals.

The trend is not limited to the United States. Globally, references to artificial intelligence in legislative proceedings across 75 monitored countries increased by approximately 21% in a single year[38], continuing a ninefold rise in legislative attention since 2016. The global regulatory infrastructure for AI governance is therefore not emerging gradually; it has already entered an active phase of implementation.

A critical milestone in this process is August 2026, when the high-risk provisions of the European Union’s AI Act become fully enforceable. The regulation applies broadly to organizations operating within the European Union as well as companies outside the EU whose AI systems affect EU residents. The requirements include automated decision logging, interpretable system outputs, embedded human oversight, minimum log-retention periods, and clearly defined deployer accountability.

Importantly, these obligations require substantial preparation. Organizations must establish the necessary governance architecture—including monitoring infrastructure, documentation processes, and oversight mechanisms—before enforcement begins. Attempting to implement these capabilities in response to regulatory action would leave little room for effective compliance.

Taken together, these developments indicate that regulatory convergence around AI governance is no longer a distant prospect. It has become a current operating condition for organizations deploying AI at scale. Companies that treat regulatory milestones such as the EU AI Act’s 2026 enforcement date as the starting point for governance preparation may discover that the time required to build compliant systems is significantly longer than anticipated.

What the leaders actually do

Research across multiple studies points to a consistent pattern separating organizations that generate sustained value from AI from those that accumulate risk without commensurate returns. Analyses by McKinsey, Boston Consulting Group, PwC, and Capgemini—using different methodologies and datasets—converge on a similar set of organizational practices. These practices are less a sequential implementation roadmap than a set of governance disciplines that shape how AI systems are deployed and managed.

In practice, they can be understood as diagnostic questions that boards and senior leadership teams should be able to answer with evidence rather than general assurances.

Define AI risk appetite by decision type

The first discipline is establishing a clear risk appetite framework for AI-driven decisions before systems are deployed. Organizations should categorize AI applications into decision tiers—for example, decisions that can be fully automated, those requiring mandatory human review, and those that remain advisory in nature. Each category must carry explicit governance requirements.

McKinsey’s research[39] finds that high-performing organizations are approximately three times more likely to formalize these categories. Once defined, these decision tiers influence model deployment choices, oversight mechanisms, and accountability structures throughout the enterprise. Importantly, this classification represents a business governance decision, not simply a technical design choice, and therefore belongs at the board or executive leadership level.
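The decision tiers described above can be written down as data so that systems consult them before acting. The following Python sketch is one possible shape, not a reference implementation: the tier names mirror the three categories in the text, while the decision types, the policy fields, and the `may_execute` function are hypothetical examples chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class DecisionTier(Enum):
    FULLY_AUTOMATED = "fully_automated"
    HUMAN_REVIEW_REQUIRED = "human_review_required"
    ADVISORY_ONLY = "advisory_only"


@dataclass(frozen=True)
class TierPolicy:
    """Governance requirements attached to a tier (assumed fields)."""
    requires_named_owner: bool
    requires_human_signoff: bool
    ai_may_execute: bool


# Hypothetical requirements; in practice these are set by leadership.
POLICIES = {
    DecisionTier.FULLY_AUTOMATED: TierPolicy(True, False, True),
    DecisionTier.HUMAN_REVIEW_REQUIRED: TierPolicy(True, True, True),
    DecisionTier.ADVISORY_ONLY: TierPolicy(True, False, False),
}

# Hypothetical mapping of decision types to tiers.
DECISION_TIERS = {
    "invoice_matching": DecisionTier.FULLY_AUTOMATED,
    "credit_limit_increase": DecisionTier.HUMAN_REVIEW_REQUIRED,
    "hiring_shortlist": DecisionTier.ADVISORY_ONLY,
}


def may_execute(decision_type: str, human_approved: bool = False) -> bool:
    """Return True if the AI system may act on this decision type.

    Decision types that have not been classified fall back to the most
    restrictive tier, so an unlisted use case can never run unattended.
    """
    tier = DECISION_TIERS.get(decision_type, DecisionTier.ADVISORY_ONLY)
    policy = POLICIES[tier]
    if not policy.ai_may_execute:
        return False
    if policy.requires_human_signoff and not human_approved:
        return False
    return True


print(may_execute("invoice_matching"))
print(may_execute("credit_limit_increase"))
print(may_execute("credit_limit_increase", human_approved=True))
```

Defaulting unknown decision types to the advisory tier is a deliberate design choice in this sketch: classification has to happen before automation, not after.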

Build governance infrastructure before scaling

The second discipline involves establishing the governance architecture—often described as the trust stack—before scaling AI systems across the enterprise. This includes the four foundational layers discussed earlier: decision visibility, controllability, accountability, and defensibility.

Organizations that validate these capabilities during early deployments tend to scale more effectively. Capgemini’s research[40] shows that companies adopting a governance-first approach achieve return on investment approximately 45% faster than those that attempt to introduce governance after systems have already been widely deployed. In practice, early governance investment reduces the operational disruptions associated with incident remediation, regulatory response, and reputational repair.

Assign clear accountability for AI systems

The third discipline is the assignment of explicit business ownership for each AI system operating in a consequential domain. Governance frameworks often fail when responsibility for AI oversight is distributed across committees or shared between technical and business teams without clear individual accountability.

IBM’s research[41] indicates that only about 41% of organizations report having established mechanisms to integrate AI governance into strategic decision-making, and even fewer assign accountability at the individual system level. Organizations that successfully scale AI typically designate a specific owner responsible for the system’s operational performance, compliance posture, and overall outcomes.

Establish a recurring governance cadence

The fourth discipline involves embedding governance into regular operational rhythms. Effective oversight requires structured review cycles at multiple organizational levels. These often include quarterly board-level reviews of AI risk, monthly executive-level reviews of model performance and governance metrics, and continuous operational monitoring through automated system dashboards.

The gap documented by MIT Sloan Management Review and BCG[42], between the 82% of executives who say responsible AI should be a leadership priority and the 55% who report that it currently is one, reflects the absence of such institutionalized oversight mechanisms. Governance cadence transforms responsible AI from a conceptual commitment into a recurring management practice. IBM’s CEO research[43] shows that 61% of CEOs are pushing AI adoption faster than some parts of their organizations are comfortable with, which makes structured oversight essential for managing the associated risks.

Design for audit readiness

The final discipline is adopting an audit-ready posture for AI systems. Rather than focusing solely on compliance with existing regulations, leading organizations build systems that can demonstrate transparency and accountability under external scrutiny.

This includes the ability to produce decision logs, model documentation, validation records, oversight evidence, and clear records of ownership for each consequential AI deployment. BCG’s research on organizations generating significant returns from AI finds that these companies are more likely to have developed robust governance practices and to engage their workforce more actively when redesigning workflows during AI deployment.
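A simple way to test audit readiness is to check, per system, whether each of the evidence types listed above can actually be located. The Python sketch below assumes a hypothetical `AuditFile` record that maps evidence types to storage locations; the field names, the example system, and the paths are placeholders, and a real check would also verify that the referenced material exists and is current.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Evidence types named in the text above.
REQUIRED_EVIDENCE = (
    "decision_logs",
    "model_documentation",
    "validation_records",
    "oversight_evidence",
    "ownership_record",
)


@dataclass
class AuditFile:
    """Where the audit evidence for one AI system lives (hypothetical schema)."""
    system_name: str
    evidence: Dict[str, str] = field(default_factory=dict)  # type -> location

    def missing(self) -> List[str]:
        """Evidence types with no recorded location."""
        return [kind for kind in REQUIRED_EVIDENCE if not self.evidence.get(kind)]

    def is_audit_ready(self) -> bool:
        return not self.missing()


# Placeholder entry for an imaginary credit-scoring deployment.
credit_model = AuditFile(
    system_name="credit_scoring_example",
    evidence={
        "decision_logs": "logs/credit_scoring/",
        "model_documentation": "docs/credit_scoring/model_card.md",
        "ownership_record": "registry/owners.csv",
    },
)
print(credit_model.is_audit_ready())
print(credit_model.missing())
```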

Taken together, these disciplines reflect a shift in how organizations approach AI governance. Rather than treating oversight as a compliance obligation applied after deployment, leading organizations embed governance directly into their operating models. This approach not only reduces risk exposure but also supports more durable value creation as AI systems scale across the enterprise.

Endnote

The trust gap surrounding enterprise AI is fundamentally an architectural challenge, and its solution is increasingly well understood. Organizations that are generating sustained, scalable value from AI—roughly the 5% to 6% of companies consistently identified as high performers by BCG and McKinsey—have addressed this challenge by building governance capabilities directly into their operating infrastructure.

These organizations treat governance not as a compliance exercise but as a structural capability. Their systems incorporate decision logging as enterprise data infrastructure, clearly assigned accountability for individual AI deployments, structured human oversight at defined checkpoints, and continuous model lifecycle governance. At the organizational level, they also establish board-level risk appetite frameworks that guide decisions about where and how AI systems can be deployed.

The commercial case for this approach is increasingly supported by empirical evidence. PwC reports[44] that organizations investing meaningfully in AI governance achieve valuations approximately 4% higher and revenue approximately 3.5% higher than peers that limit governance investment to basic compliance. IBM’s research[45] finds that companies allocating substantial resources to responsible AI programs generate about 30% higher operating profit from AI initiatives. Capgemini’s studies[46] show that organizations adopting governance-first strategies achieve return on investment approximately 45% faster than those attempting to retrofit governance after scaling deployments. Across independent research programs, the performance benefits associated with governance investment appear consistent and cumulative.

The regulatory case for governance is also becoming clearer. The European Union’s AI Act, whose high-risk provisions become fully enforceable in August 2026, introduces penalties of up to 7% of global annual turnover for the most serious violations, those involving prohibited AI practices, and up to 3% for breaches of most other obligations, including those covering high-risk systems. At the same time, Gartner projects a 30% increase in AI-related regulatory disputes by the end of the decade, indicating that legal scrutiny around automated decision-making is likely to intensify.

Reputational considerations reinforce this trajectory. Research suggests that companies that suffer significant AI-related incidents can see immediate trust declines of at least 20%, with reputational recovery often taking years.

Looking forward, the organizations most likely to deploy autonomous AI systems at scale—with the confidence of regulators, investors, and boards—are unlikely to be those that pursued rapid deployment without governance foundations. Instead, they are likely to be the organizations that embedded governance capabilities early, treated transparency as a design requirement, established clear accountability structures, defined risk appetite at the leadership level, and implemented audit-ready oversight processes before external scrutiny required them to do so.

For executive teams, the central question is therefore no longer whether governance infrastructure will be necessary. Regulatory developments, market expectations, and operational experience already point in that direction. The more relevant question is whether governance capabilities will be established proactively—before systems scale—or reactively, after incidents, regulatory intervention, or operational failures make the need unavoidable.

Organizations that treat AI governance as infrastructure—not compliance—will be the ones that scale autonomous systems with confidence. The question for leadership is simple: Is your enterprise building AI systems it can trust, explain, and defend?

Author’s Bio

An early adopter of emerging technologies, Akash leads innovation in AI, driving transformative solutions that enhance business operations. With his entrepreneurial spirit, technical acumen and passion for AI, Akash continues to explore new horizons, empowering businesses with solutions that enable seamless automation, intelligent decision-making, and next-generation digital experiences.

Frequently Asked Questions

Why does enterprise AI adoption remain high while measurable business value remains limited?

High adoption rates often reflect experimentation rather than transformation. Many organizations deploy AI within existing workflows—automating tasks, generating insights, or improving efficiency—without redesigning how decisions and processes actually operate. As a result, AI improves isolated activities but does not fundamentally change how value is created. Organizations that capture meaningful returns tend to integrate AI into end-to-end workflows and redesign decision structures around it. This requires governance, data integration, and operating model changes. Without these structural adjustments, AI remains a productivity tool rather than a driver of enterprise-level performance.

Why is the AI trust gap increasingly viewed as an architectural issue rather than a cultural one?

Trust challenges in enterprise AI are rarely caused by skepticism about the technology itself. They arise when organizations cannot explain how AI systems make consequential decisions, cannot intervene when systems behave unexpectedly, or cannot demonstrate accountability to regulators and stakeholders. These capabilities depend on system architecture—logging infrastructure, oversight checkpoints, model lifecycle management, and auditability. Without these technical foundations, even well-intentioned governance policies remain ineffective. As AI systems become more autonomous and integrated into operational workflows, the ability to observe and control their behavior becomes essential to scaling AI responsibly.

How does the “trust stack” help organizations operationalize AI governance?

The trust stack translates governance principles into operational capabilities. It typically includes four layers: decision visibility, controllability, accountability, and defensibility. Decision visibility ensures that organizations can observe how AI systems operate through automated logging and monitoring. Controllability introduces structured human oversight at defined checkpoints. Accountability assigns ownership for each AI deployment and its outcomes. Defensibility allows organizations to demonstrate compliance and transparency during regulatory or legal review. Together, these layers enable AI systems to operate at scale while remaining observable, governable, and auditable—qualities increasingly required for enterprise adoption.

What distinguishes organizations that generate sustained value from AI from those that do not?

Research consistently shows that high-performing AI organizations differ less in technology choice and more in governance and operating discipline. These companies establish clear risk frameworks, assign accountable business owners for AI systems, and embed oversight into workflows before scaling deployments. They also treat governance infrastructure—logging systems, monitoring platforms, and model lifecycle management—as core enterprise capabilities. By contrast, organizations that struggle to capture value often scale AI experimentation without these structures in place. The result is fragmented deployments, governance gaps, and limited ability to integrate AI into core business processes.

Why is AI governance increasingly a board-level responsibility?

As AI systems begin influencing decisions related to credit, hiring, healthcare, and financial transactions, the associated risks extend beyond operational management into legal, reputational, and strategic domains. Boards are ultimately responsible for overseeing enterprise risk exposure, including risks introduced by automated decision-making. Governance frameworks such as the EU AI Act and the voluntary NIST AI Risk Management Framework reinforce this expectation by calling for documented oversight, accountability, and monitoring. For boards, the challenge is not evaluating individual algorithms but ensuring that the organization has the infrastructure and governance processes necessary to manage AI responsibly at scale.

How should organizations prepare for the regulatory convergence surrounding AI governance?

Preparation begins with recognizing that many regulatory expectations already exist within sector-specific frameworks such as financial model risk management standards, employment law, and healthcare regulation. New regulations like the EU AI Act extend these expectations into explicit AI governance requirements. Organizations should therefore focus on building capabilities that support explainability, monitoring, auditability, and human oversight. Rather than treating each regulation independently, many leading organizations adopt unified governance frameworks that address multiple requirements simultaneously. This approach reduces compliance complexity while enabling AI systems to operate within evolving regulatory environments.

What operational changes are required as organizations move from AI assistance to autonomous agents?

Agentic AI systems introduce new governance challenges because they can initiate actions rather than merely generate recommendations. This shift requires organizations to redesign workflows around oversight checkpoints, escalation mechanisms, and continuous monitoring. Decision thresholds must be clearly defined so that certain actions remain automated while others trigger human review. In addition, organizations must monitor agent performance over time to detect drift, unintended behavior, or operational anomalies. As autonomy increases, governance mechanisms must evolve from periodic review processes into continuous operational oversight embedded directly into the workflow.
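As an illustration of continuous oversight, the Python sketch below tracks how often humans override an agent's actions over a rolling window and signals drift when that rate rises well above an agreed baseline. The override rate is only one possible signal, and the class name, window size, baseline, and tolerance are all assumptions made for the example.

```python
from collections import deque
from typing import Optional


class OverrideRateMonitor:
    """Rolling check of how often reviewers override an agent (illustrative)."""

    def __init__(self, baseline_rate: float, tolerance: float, window: int = 100):
        self.baseline_rate = baseline_rate  # override rate seen during validation
        self.tolerance = tolerance          # allowed increase before escalating
        self.recent = deque(maxlen=window)  # most recent outcomes only

    def record(self, overridden: bool) -> None:
        """Log one agent action and whether a human overrode it."""
        self.recent.append(overridden)

    def current_rate(self) -> Optional[float]:
        """Override rate over the window, or None until the window is full."""
        if len(self.recent) < self.recent.maxlen:
            return None
        return sum(self.recent) / len(self.recent)

    def drift_detected(self) -> bool:
        rate = self.current_rate()
        return rate is not None and rate - self.baseline_rate > self.tolerance


monitor = OverrideRateMonitor(baseline_rate=0.05, tolerance=0.05, window=10)
for _ in range(10):
    monitor.record(False)       # ten actions accepted without override
print(monitor.drift_detected())
for _ in range(3):
    monitor.record(True)        # three overrides push older entries out
print(monitor.current_rate(), monitor.drift_detected())
```

A positive signal from a monitor like this would typically trigger the escalation path defined for the workflow, such as pausing automation for that decision type until the owner has reviewed it.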

How should executives evaluate whether their organization is ready to scale AI responsibly?

Executives should assess readiness across several dimensions: governance infrastructure, data integration, model lifecycle management, and organizational accountability. Key questions include whether AI decisions are fully logged, whether oversight checkpoints exist in critical workflows, whether each AI deployment has a clearly identified owner, and whether model performance is continuously monitored. Organizations should also evaluate whether boards and executive teams receive regular reporting on AI risk and performance. Readiness for scaling AI is therefore less about technical experimentation and more about whether the organization has built the governance and operational structures required to manage AI at enterprise scale.

What role does transparency play in maintaining trust as AI systems become more autonomous?

Transparency enables organizations to demonstrate that AI systems operate within defined parameters and governance frameworks. As automation increases, stakeholders—including regulators, employees, customers, and investors—expect visibility into how decisions are made and how risks are managed. Transparency mechanisms such as decision logs, explainability reports, and audit trails provide this visibility. They also allow organizations to detect problems early, correct system behavior, and respond effectively to regulatory or legal scrutiny. Without transparency, organizations may find it difficult to sustain trust in AI systems even if the underlying technology performs effectively.
