The AI ROI illusion: Why enterprises struggle to measure AI impact

Enterprise leaders are converging on a critical realization about artificial intelligence: durable competitive advantage will not come from models alone, but from how organizations harness data and embed intelligence into the way work is executed.

Artificial intelligence has rapidly emerged as one of the largest strategic investment priorities for enterprises. As organizations accelerate deployment across operations, products, and decision systems, boards are increasingly asking a fundamental question:

What is the return on investment from AI?

The expectation of measurable value is widespread. According to the IBM Institute for Business Value CEO Study (2025), 85% of CEOs expect their organizations to achieve positive returns from scaled AI initiatives by 2027. AI is no longer viewed as an experimental capability; it is now treated as a strategic asset expected to drive enterprise performance.

Yet current evidence points to a growing disconnect between expectation and realized impact.

The McKinsey State of AI Report (2025) finds that only 39% of organizations report enterprise-level EBIT (earnings before interest and taxes) impact from AI deployments. At the same time, Deloitte research suggests that most organizations require two to four years to realize returns from a typical AI use case—significantly longer than the payback periods associated with traditional technology investments. Many executives describe this pattern as the AI J-curve: early investments in data, infrastructure, and model development generate an initial cost burden before measurable value begins to materialize and scale.

Taken together, these findings highlight a structural disconnect. AI adoption is accelerating across industries, yet the ability to evaluate its economic contribution remains underdeveloped.

Part of the challenge lies in the way AI creates value. Unlike traditional technologies that automate discrete, well-defined tasks, AI reshapes decision-making, workflow coordination, and operational dynamics across functions. Its impact is inherently distributed. This creates what is often described as the attribution problem: AI does not operate as a standalone unit generating isolated output. Instead, it augments human judgment and system interactions across the enterprise, diffusing value across teams, processes, and technologies. For CFOs attempting to assess financial returns, this makes attribution—and therefore measurement—significantly more complex.

The result is a widening AI measurement gap: a growing divergence between the scale of enterprise AI investment and the ability to quantify its economic value.

For boards and executive leadership teams, the implication is clear. The question of AI ROI is both necessary and justified. The challenge lies not in asking the question, but in measuring the answer.

This article examines why traditional ROI models break down in the context of AI, where current measurement approaches fall short, and how organizations can adopt more structured frameworks to evaluate AI-driven value creation.

Table of contents

- The right approach to AI performance measurement

- Why traditional ROI models break down for AI

- Why current AI metrics fail

- Addressing the performance gap

- The human dimension: why AI measurement is an organizational challenge

- Building a structured framework for measuring AI value

- Enabling measurement across all three tiers

- Applying the three-tier framework across enterprise use cases

- Why AI ROI measurement requires strong governance

The right approach to AI performance measurement

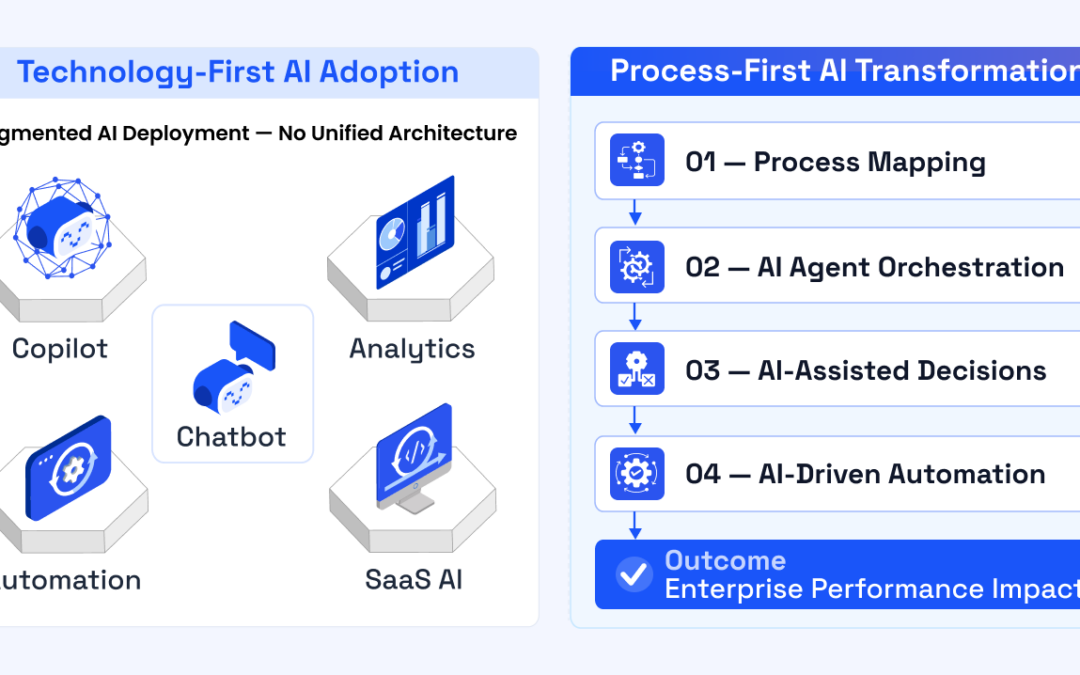

As organizations seek to address AI ROI, two distinct measurement philosophies are emerging.

Activity measurement: Operational utilization

The first centers on activity measurement.

Organizations operating within this model track operational indicators associated with AI deployment, including the number of AI queries processed, hours of labor saved, tasks automated, and internal adoption rates of AI tools.

These metrics provide visibility into system utilization and operational efficiency. They indicate that AI technologies are being deployed and integrated into workflows. However, measures such as “hours of labor saved” often represent trapped value—time that has been freed within a workflow but not redeployed into revenue-generating or cost-reducing activities.

As a result, activity metrics rarely establish a clear connection between AI deployment and enterprise financial performance.

Outcome measurement: Business impact and value realization

The alternative approach focuses on outcome measurement.

Organizations adopting this model evaluate AI based on its contribution to business outcomes, such as revenue growth attributable to AI-enabled insights, improved forecasting accuracy, reduced operational risk, and increased customer lifetime value.

This perspective shifts the emphasis from utilization to impact—from what AI systems are doing to how they are improving organizational performance.

The distinction between these approaches carries important governance implications.

Activity measurement produces operational dashboards.

Outcome measurement establishes investment accountability.

Organizations that rely primarily on activity metrics often struggle to demonstrate AI’s business value at the board level. By contrast, those that link AI performance to financial and strategic outcomes are better positioned to justify continued investment and scale AI initiatives with confidence.

In practical terms, the difference is straightforward: activity measurement tracks what AI systems do; outcome measurement assesses what they achieve.

For CFOs and executive leadership teams responsible for capital allocation, this distinction is central to the AI ROI discussion.

Why traditional ROI models break down for AI

Part of the difficulty in measuring AI value stems from a deeper structural issue: the frameworks organizations use to evaluate technology investments were designed for an earlier generation of enterprise systems.

Historically, technology ROI was relatively straightforward to assess because systems supported clearly defined operational functions. Traditional IT investments were evaluated using efficiency-oriented metrics such as cost per transaction, system uptime, ticket resolution time, and infrastructure utilization. These measures were effective because enterprise software primarily served as operational infrastructure, improving the efficiency of existing processes without fundamentally altering decision-making.

During the digital transformation wave, organizations adopted a second generation of performance metrics focused on customer and market outcomes, including customer acquisition cost, conversion rates, churn reduction, and digital engagement. These metrics reflected technologies that reshaped how organizations reached customers and delivered services, but they still operated largely within established business models and decision structures.

Artificial intelligence introduces a fundamentally different dynamic.

Rather than automating predefined tasks or digitizing existing workflows, AI functions increasingly as a decision system embedded within enterprise operations. AI models forecast demand, assess operational risk, prioritize opportunities, and recommend actions in real time. In many organizations, these systems now operate as collaborative decision partners alongside human teams, influencing outcomes across multiple functions.

This shift changes the nature of value creation.

According to the World Economic Forum (2025), the economic impact of AI is increasingly tied not to improvements in task execution speed, but to improvements in decision quality. AI enables organizations to make faster, more informed, and more consistent decisions across complex and rapidly changing environments—capabilities that traditional software platforms were never designed to deliver.

The challenge is that decision quality has historically been one of the most difficult variables to measure.

Conventional ROI frameworks were built to evaluate investments in labor productivity, capital utilization, and operational cost reduction. AI, by contrast, often creates value through less tangible but more consequential mechanisms, including improved forecasting accuracy, faster organizational learning cycles, and the emergence of entirely new capabilities.

The nature of the asset itself is also different.

Unlike traditional software systems that begin to depreciate upon deployment, well-governed AI systems can increase in value over time. As models learn from enterprise-specific data and incorporate feedback from human experts, their predictive accuracy and decision relevance continue to improve. In this sense, AI behaves less like a static software tool and more like a learning asset whose value compounds with use.

AI investments also generate strategic option value—the ability to pursue new capabilities as opportunities emerge. By establishing the underlying data infrastructure, models, and decision systems, organizations gain the flexibility to launch AI-enabled products, reconfigure operations, and respond more rapidly to market shifts. These forms of strategic flexibility are difficult to capture using traditional financial models that rely on short-term cost savings or direct revenue attribution.

The result is a structural mismatch between how AI creates value and how organizations attempt to measure it.

Enterprises are effectively evaluating a new class of strategic assets using financial frameworks designed for a previous generation of technology. Until measurement approaches evolve to reflect the decision-centric and learning-driven nature of AI systems, organizations will continue to struggle to translate AI activity into clearly attributable financial outcomes—even when the underlying value is already being created.

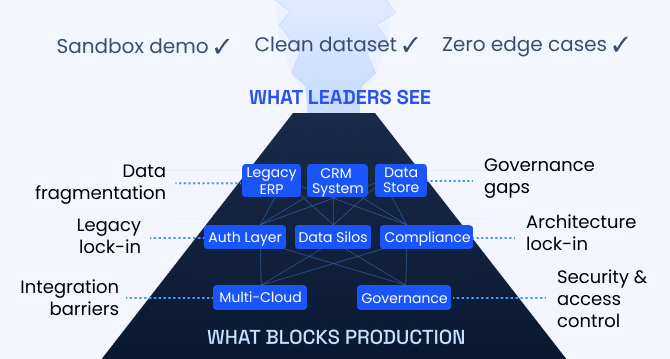

Why current AI metrics fail

If the difficulty in measuring AI value stems from outdated frameworks, its consequences are already visible in how organizations report AI performance today. Across industries, AI initiatives are frequently evaluated using metrics that appear compelling at the operational level but fail to clearly link to enterprise value.

In practice, four recurring measurement failures explain why many AI programs struggle to demonstrate ROI—even when they are delivering real benefits.

1. Proxy metrics instead of business value

Many organizations rely on proxy indicators to demonstrate the impact of AI. These metrics capture operational activity rather than economic outcomes. Common examples include hours of labor saved, tasks automated, queries processed, and internal adoption rates of AI tools.

While such indicators provide visibility into efficiency gains, they do not inherently translate into financial value. Time saved within a workflow does not yield an economic return unless that capacity is redeployed to higher-value activities.

As a result, organizations often report productivity improvements without corresponding gains in revenue or profitability. Finance leaders frequently describe this dynamic as trapped value—efficiencies within the system that have not been translated into measurable business outcomes.

At a fundamental level, efficiency measures how quickly tasks are executed; value reflects whether the organization is making better decisions. Most AI dashboards today track the former, while boards ultimately care about the latter.
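The trapped-value idea can be made concrete with simple arithmetic. The sketch below is illustrative only: the function name, the loaded hourly cost, and the redeployment fraction are assumptions chosen for the example, not figures from any of the studies cited here.

```python
def realized_value(hours_saved: float, hourly_cost: float, redeployed_fraction: float):
    """Split 'hours saved' into realized and trapped value.

    redeployed_fraction is the (assumed) share of freed time that is actually
    moved into revenue-generating or cost-reducing work.
    """
    if not 0.0 <= redeployed_fraction <= 1.0:
        raise ValueError("redeployed_fraction must be between 0 and 1")
    gross = hours_saved * hourly_cost        # what an activity dashboard reports
    realized = gross * redeployed_fraction   # what can reach the P&L
    trapped = gross - realized               # capacity freed but not redeployed
    return gross, realized, trapped


# Hypothetical inputs: 10,000 hours saved at a loaded cost of 60 per hour,
# with only a quarter of that time redeployed.
gross, realized, trapped = realized_value(10_000, 60.0, 0.25)
print(f"gross={gross:,.0f} realized={realized:,.0f} trapped={trapped:,.0f}")
```

Under these assumed inputs, three quarters of the reported saving never becomes financial value, which is the gap between the activity view and the outcome view.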

2. The attribution problem

AI systems rarely operate in isolation. Instead, they influence outcomes across multiple functions simultaneously.

A demand forecasting model, for example, can shape supply chain planning, pricing decisions, production scheduling, and inventory allocation. When performance improves—through higher margins or fewer stockouts—it becomes difficult to determine how much of that improvement can be directly attributed to the AI system versus other operational changes.

This creates a fundamental challenge for financial reporting. CFOs must be able to trace value creation to specific investments, yet AI often generates distributed benefits across functions and workflows. Without clear attribution mechanisms, the economic contribution of AI remains difficult to quantify—even when the value is real.

The challenge is further compounded by limited decision traceability in many AI deployments. If organizations cannot track how AI-generated recommendations influence downstream decisions, linking those decisions to financial outcomes becomes nearly impossible. Without traceability, defensible ROI measurement becomes significantly more difficult.
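One way to picture decision traceability is as a record that links each AI recommendation to the human action taken and, later, to the observed outcome. The following is a minimal sketch under that assumption; the `DecisionTrace` fields and the `summarize_traces` helper are hypothetical and do not describe any particular product's schema.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DecisionTrace:
    """Hypothetical record linking a recommendation to a decision and its outcome."""
    recommendation_id: str
    model: str
    recommended_action: str
    human_action: str
    outcome_value: Optional[float] = None  # None until the outcome is known

    @property
    def followed(self) -> bool:
        return self.recommended_action == self.human_action


def summarize_traces(traces: List[DecisionTrace]) -> dict:
    """Aggregate outcomes by whether the recommendation was followed."""
    closed = [t for t in traces if t.outcome_value is not None]
    followed = [t for t in closed if t.followed]
    overridden = [t for t in closed if not t.followed]
    return {
        "open_traces": len(traces) - len(closed),
        "follow_rate": len(followed) / len(closed) if closed else 0.0,
        "value_when_followed": sum(t.outcome_value for t in followed),
        "value_when_overridden": sum(t.outcome_value for t in overridden),
    }


# Toy data, invented for illustration.
traces = [
    DecisionTrace("r1", "demand_forecast", "reorder", "reorder", 1200.0),
    DecisionTrace("r2", "demand_forecast", "reorder", "hold", -300.0),
    DecisionTrace("r3", "demand_forecast", "hold", "hold"),
]
print(summarize_traces(traces))
```

A log like this does not by itself establish causation, since followed and overridden decisions may differ in other ways. It does supply the raw link between recommendation, decision, and outcome that any defensible attribution analysis needs.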

3. Denominator blindness in AI ROI calculations

Another common issue arises from incomplete cost accounting in AI business cases.

Return on investment depends not only on the value generated, but also on the full cost of deploying and operating the system. In many cases, however, organizations underestimate the denominator of the ROI equation. Beyond initial model development, AI systems require ongoing investment in cloud infrastructure, data preparation and integration, model monitoring and retraining, governance, and human-in-the-loop validation.

In addition, AI introduces a cost dynamic that differs from traditional enterprise software. Unlike systems where marginal usage costs are near zero, AI often incurs variable costs with each use. Every prediction, recommendation, or generative output requires compute resources. At scale, these inference costs can materially affect the economics of AI deployment.

When these factors are not fully incorporated into ROI calculations, organizations risk overstating the financial performance of their AI initiatives.
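The effect of an incomplete denominator can be shown with a short calculation. This is a sketch under stated assumptions: the cost categories mirror the list above, while the class name, the annual figures, and the per-inference cost are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AICostModel:
    """Hypothetical annual cost categories for one AI use case."""
    model_development: float
    cloud_infrastructure: float
    data_preparation: float
    monitoring_and_retraining: float
    governance_and_validation: float
    cost_per_inference: float
    inferences_per_year: int

    def fixed_costs(self) -> float:
        return (self.model_development + self.cloud_infrastructure
                + self.data_preparation + self.monitoring_and_retraining
                + self.governance_and_validation)

    def inference_costs(self) -> float:
        # Variable cost: grows with every prediction or generated output.
        return self.cost_per_inference * self.inferences_per_year

    def total_costs(self) -> float:
        return self.fixed_costs() + self.inference_costs()


def roi(value: float, cost: float) -> float:
    """Standard ROI: (value - cost) / cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (value - cost) / cost


# Assumed figures, for illustration only.
costs = AICostModel(400_000, 150_000, 120_000, 80_000, 50_000,
                    cost_per_inference=0.02, inferences_per_year=10_000_000)
annual_value = 1_500_000

naive_roi = roi(annual_value, costs.model_development)  # development cost only
full_roi = roi(annual_value, costs.total_costs())       # full denominator
print(f"naive ROI: {naive_roi:.0%}, full-cost ROI: {full_roi:.0%}")
```

With these assumed numbers, counting only development cost gives an ROI of 275%, while the full denominator brings it down to 50%. The initiative is still positive, but the business case looks very different.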

4. The wrong time horizon

Finally, many organizations evaluate AI investments using time horizons that are too short.

Boards and executive teams often expect new technology initiatives to demonstrate measurable financial impact within a single reporting cycle. AI systems, however, tend to generate value cumulatively over time as models improve with additional data, workflows adapt to AI-driven insights, and organizations learn how to integrate model outputs into decision processes.

In practice, meaningful impact emerges gradually through integration and adoption—not immediately at deployment.
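The time-horizon problem can be illustrated with a basic payback calculation on cumulative cash flow. The sketch below assumes a hypothetical series of yearly net cash flows, invented for the example; the `payback_period` helper is not taken from any cited source.

```python
from itertools import accumulate
from typing import Optional, Sequence


def payback_period(net_cash_flows: Sequence[float]) -> Optional[int]:
    """Return the first period index at which cumulative cash flow is
    non-negative, or None if break-even is not reached in the window."""
    for period, cumulative in enumerate(accumulate(net_cash_flows)):
        if cumulative >= 0:
            return period
    return None


# Hypothetical yearly net cash flows: year 0 is the up-front investment.
flows = [-600_000, 100_000, 300_000, 500_000]

print(list(accumulate(flows)))    # cumulative position at the end of each year
print(payback_period(flows[:2]))  # evaluated after one year: no break-even yet
print(payback_period(flows))      # evaluated over the full window
```

Judged after a single year, this assumed investment has not paid back and would look like a failure. Over the full window it breaks even in year 3, which shows why the evaluation horizon shapes the verdict.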

At the same time, AI introduces a new dimension of risk-adjusted performance. Models must be continuously monitored to ensure reliability, regulatory compliance, and protection against biased or inaccurate outputs that could erode value or create reputational risk. These governance requirements further reinforce that AI ROI cannot be assessed solely through short-term operational metrics.

Taken together, these measurement failures point to a broader governance challenge. Research from MIT Sloan Management Review highlights that many organizations struggle to translate AI experimentation into enterprise value due to organizational constraints, including leadership alignment, data readiness, workforce capabilities, and governance structures.

Organizations may have the technical capability to deploy AI systems, but often lack the frameworks required to measure and manage their economic impact effectively.

Until these measurement approaches evolve, enterprises risk producing increasingly sophisticated AI dashboards while leaving the central question unresolved: What business value has actually been created?

Addressing the performance gap

Despite the challenges of measuring AI, emerging evidence suggests that organizations with strong measurement capabilities are already outperforming their peers.

PwC research indicates that organizations embedding governance, accountability, and performance measurement into their AI strategies are significantly more likely to generate measurable business value. Notably, 58% of executives report that responsible AI practices improve efficiency and profitability—underscoring the role of structured governance and measurement in translating AI deployment into tangible outcomes.

The difference is not merely analytical; it reflects a shift in how AI is governed and managed within the enterprise.

Organizations with stronger measurement discipline are better positioned to link AI deployments to measurable business outcomes, prioritize high-impact use cases across the enterprise, allocate capital more effectively, and continuously refine models using real-world performance feedback.

In practice, effective measurement enables organizations to manage AI as a portfolio of investments rather than a collection of isolated experiments.

The importance of this capability becomes clearer when examining how organizations convert AI deployment into financial results. Research from the MIT Sloan Management Review–BCG Artificial Intelligence and Business Strategy study finds that companies redesigning their KPIs to reflect AI-enabled decision processes are three times more likely to achieve superior financial outcomes than those relying on traditional performance metrics.

This points to a deeper dynamic.

Organizations that measure AI effectively gain visibility into how models perform in real-world decision environments. They can identify where value is being created, refine or redeploy underperforming systems, and scale successful use cases across business units.

By contrast, organizations that lack structured measurement frameworks often remain trapped in cycles of pilot-stage experimentation—deploying AI capabilities without a clear basis for prioritization or scale.

Over time, the ability to measure AI performance compounds. Organizations that measure effectively learn faster from their deployments and scale successful systems more rapidly.

In the emerging AI economy, competitive advantage may depend less on who deploys AI first and more on how quickly organizations learn from deployment and translate those insights into measurable business outcomes.

The human dimension: why AI measurement is an organizational challenge

The challenge of measuring AI value is not purely technical. In many organizations, it is fundamentally organizational.

Activity-based metrics, such as the number of automated workflows or hours saved, can be reported relatively easily because they do not disrupt existing performance structures. These indicators signal operational improvement without requiring meaningful changes to how teams are evaluated, incentivized, or held accountable.

Outcome-based metrics introduce a different dynamic. When organizations begin measuring AI in terms of business outcomes—such as decision quality, revenue contribution, or risk reduction—they surface fundamental questions about how value is created and who is accountable for it across functions.

This often exposes an accountability gap. While AI systems generate insights and recommendations, responsibility for translating those insights into measurable business impact is frequently unclear or distributed across multiple stakeholders.

In many organizations, the teams responsible for developing AI systems are also responsible for reporting their performance. As a result, measurement frameworks tend to emphasize activity indicators—such as adoption rates or deployment volumes—rather than outcomes tied to financial performance or strategic impact.

Empirical evidence reinforces this pattern. Research from Boston Consulting Group shows that although many organizations are experimenting with AI, only 22% have progressed beyond proof-of-concept deployments, and just 4% are generating substantial value at scale. Similarly, research from MIT Sloan Management Review and BCG finds that while 60% of leaders believe their organizations need better KPIs to support decision-making, only about one-third are using AI to redesign or improve their performance metrics.

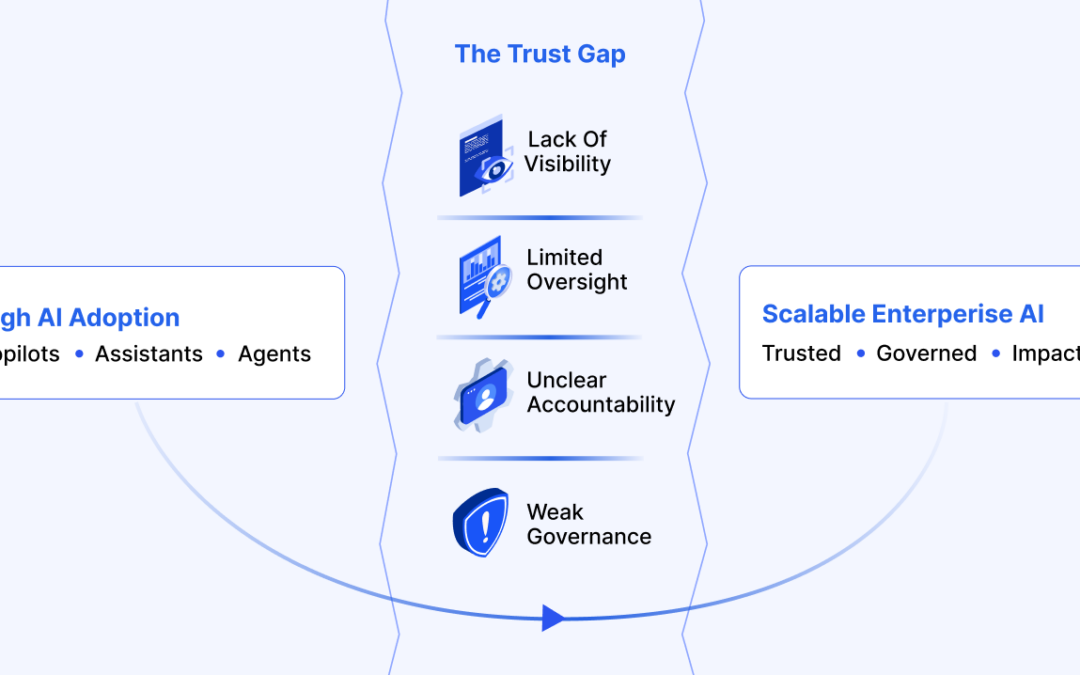

Taken together, these findings point to a broader organizational challenge: enterprises are scaling AI capabilities faster than they are developing the governance and measurement frameworks required to translate those capabilities into sustained business value.

The rise of AI value governance

Addressing this gap requires tighter integration between AI initiatives and enterprise governance.

For senior leadership—particularly CFOs and boards—AI measurement cannot remain confined to data science or technology teams. It must be embedded within the organization’s broader financial and performance governance framework.

As organizations mature, new roles are emerging to bridge this divide. Positions such as Chief AI Officers (CAIOs) or AI value leaders are focused less on the mechanics of model development and more on the alignment between technical deployment and enterprise value. Their mandate centers on a critical question: How does an AI-driven insight translate into measurable enterprise impact?

Answering this question requires a shift in perspective. Organizations must move beyond isolated efficiency gains and toward measurement frameworks that treat AI as a strategic capability—one whose impact is tracked, attributed, and governed with the same rigor applied to any other major capital investment.

Building a structured framework for measuring AI value

Traditional ROI models struggle to capture the full value of artificial intelligence because they focus primarily on cost reduction and efficiency gains. While these outcomes remain important, they represent only a narrow portion of the value that AI systems can generate.

A more complete approach is to evaluate AI through a multi-layered measurement framework—one that reflects the different ways in which value is created and realized over time.

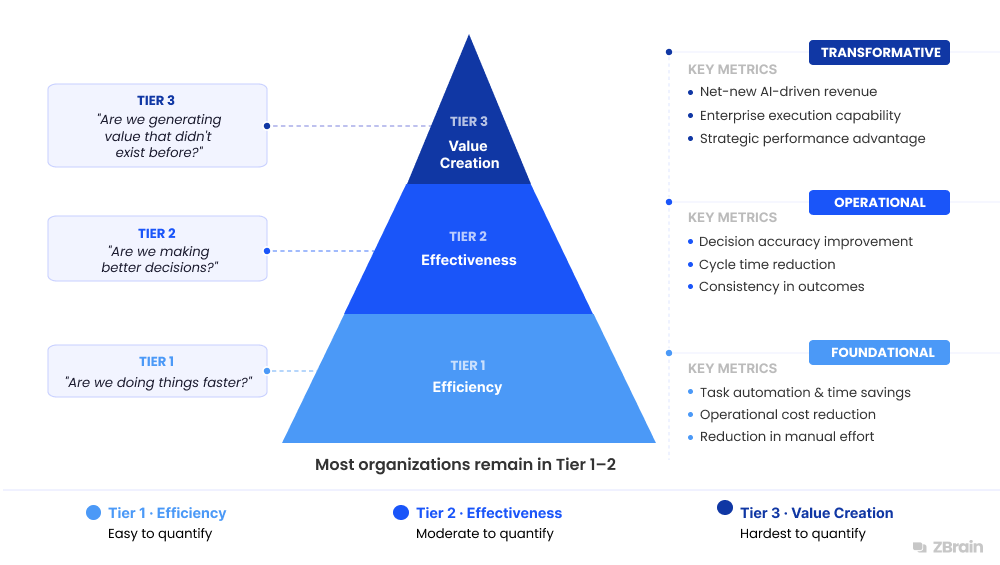

In practice, organizations tend to progress through three distinct tiers of measurement, moving from operational improvements to broader strategic impact.

This progression mirrors how AI evolves within the enterprise: from a tool that enhances efficiency, to a system that augments decision-making, to a capability that enables entirely new forms of value creation.

A three-tier framework for measuring AI value

Tier 1: Efficiency metrics

The first layer of AI measurement focuses on operational efficiency—how AI systems improve the speed, cost, and reliability of existing processes.

Most organizations begin their measurement journey at this level because the indicators are familiar, well-understood, and relatively easy to quantify.

Common efficiency metrics include reductions in operational costs, time saved through task automation, decreases in manual workload, lower error rates in routine processes, and increased throughput of operational workflows.

These measures help demonstrate early AI value by highlighting productivity gains and process optimization. However, they capture only a limited portion of AI’s potential impact, focusing primarily on improving tasks that organizations were already performing.

Tier 2: Effectiveness metrics

The second layer shifts the focus from efficiency to effectiveness—how AI improves decision-making and process outcomes.

Rather than asking whether tasks are completed faster, this layer evaluates whether they are performed better. Effectiveness metrics assess the quality, consistency, and reliability of outcomes enabled by AI systems.

Examples include improvements in decision accuracy within analytical workflows, reductions in process cycle times, agent-to-human handoff rates in automated systems, enhancements in response quality and consistency, and reductions in operational risk or compliance errors.

While these metrics provide deeper insight into AI’s operational contribution, they remain largely focused on optimizing existing processes. As a result, many organizations that measure AI at this level gain better visibility into performance—but still fall short of capturing its full strategic value.

Tier 3: Value creation metrics

The third tier represents the most strategic dimension of AI measurement: value creation.

At this level, the focus shifts from optimizing existing operations to understanding how AI enables new forms of enterprise value. AI systems move beyond task automation to augment decision-making, unlock new capabilities, and create opportunities that did not previously exist.

Examples include net-new revenue generated through AI-driven insights, measurable improvements in enterprise decision quality, the creation of new products or services, expansion of automation into previously complex or judgment-intensive workflows, and sustained strategic advantages driven by AI-enabled intelligence.

These metrics are inherently more difficult to quantify, but they reflect the true transformative potential of AI.

Why measuring value creation is difficult

Despite its importance, relatively few organizations can effectively measure value creation.

The challenge lies in the nature of Tier 3 metrics. Unlike efficiency or effectiveness, value creation requires visibility into how AI systems interact with enterprise workflows, decision processes, and data over time.

To operate at this level, organizations must be able to continuously monitor system performance, evaluate reasoning quality and response reliability, trace how AI-generated outputs influence downstream decisions, and attribute value across complex workflows involving both human and AI actors.

Traditional analytics tools are not designed to provide this level of insight. As AI deployments become more sophisticated—particularly with the rise of agentic systems and multi-step workflows—measurement increasingly requires specialized platforms that support governance, monitoring, and evaluation at scale.

Enabling measurement across all three tiers

The three-tier measurement framework can be operationalized through platforms such as ZBrain Builder—an enterprise agentic AI orchestration platform designed to support observability, evaluation, and governance at scale.

ZBrain Builder provides integrated monitoring and evaluation capabilities that enable organizations to track how AI agents and applications perform in production environments. Through structured dashboards and evaluation mechanisms, organizations gain visibility into operational efficiency, response quality, and knowledge utilization across AI deployments.

These signals allow teams to understand not only how AI systems behave within enterprise workflows, but also how they contribute to broader business processes and outcomes.

Tier 1: Measuring efficiency

At the efficiency layer, organizations evaluate how AI systems operate within workflows and the resources required to generate outputs.

These metrics provide visibility into the operational workload handled by AI agents, system responsiveness, and the cost dynamics of AI execution. They help organizations assess how effectively AI systems are handling tasks, improving throughput, and optimizing resource utilization within existing processes.

ZBrain Builder provides monitoring across agent interactions, application usage, and prompt execution, enabling teams to observe AI activity across production environments with clarity and consistency.

| Category | Metrics | Description |
|---|---|---|
| Agent-specific metrics | Utilized time, session time, average session time, session start date, session end date, accuracy, tasks completed, satisfaction score, tokens used, cost | Provide visibility into agent execution behavior and session-level activity. These metrics indicate session duration, when interactions occur, tasks completed, response quality, and associated token and cost consumption. |
| Application-specific metrics | Number of sessions, average response time, satisfaction score | Measure application-level usage and performance. These metrics capture how frequently AI applications are accessed, how quickly they respond, and how the quality of responses is evaluated through feedback or scoring mechanisms. |
| Prompt-related metrics | Total runs, credits used, total cost, last updated, total logs, total tokens, duration, average time, median time, run over time | Track prompt and workflow execution across the system. These metrics capture execution frequency, runtime performance, token usage, cost, and logs generated during prompt runs. |

Together, these efficiency indicators provide visibility into the operational footprint of AI systems, including workload volume, execution dynamics, and usage patterns.

Tier 2: Measuring effectiveness

The second tier evaluates whether AI systems generate outputs that are accurate, relevant, and grounded in enterprise knowledge.

While Tier 1 focuses on operational execution, Tier 2 shifts attention to the quality and reliability of AI-generated responses.

ZBrain Builder supports this layer through structured evaluation workflows that enable organizations to assess response correctness, similarity to expected outputs, and alignment with underlying knowledge sources.

System validation metric

- Health Check: This metric determines whether an AI entity is operational and capable of generating valid responses. If a health check fails, subsequent evaluation metrics are not executed for that instance.

Response correctness metrics

- Accuracy

- Exact match

- F1 score

These metrics evaluate the correctness of generated responses by comparing them against reference answers or expected outputs.
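For readers unfamiliar with these measures, the sketch below shows one common way exact match and token-level F1 are computed against a reference answer. It is an illustration of the general technique, using simple lowercase whitespace tokenization as an assumption; the source does not specify how ZBrain Builder calculates its scores, and the function names are hypothetical.

```python
from collections import Counter
from typing import List


def normalize(text: str) -> List[str]:
    """Assumed normalization: lowercase and split on whitespace."""
    return text.lower().split()


def exact_match(prediction: str, reference: str) -> bool:
    """True when the normalized token sequences are identical."""
    return normalize(prediction) == normalize(reference)


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall against the reference."""
    pred, ref = normalize(prediction), normalize(reference)
    if not pred or not ref:
        return float(pred == ref)
    # Multiset intersection counts each shared token at most as often
    # as it appears in both texts.
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


print(exact_match("Revenue rose 5%", "revenue rose 5%"))
print(round(token_f1("net revenue rose 5%", "revenue rose 5%"), 3))
```

Exact match is strict and suits structured outputs, while token-level F1 gives partial credit when a response contains the right content plus extra or missing words.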

Text similarity and summarization quality metrics

- Levenshtein similarity

- Rouge-L score

These indicators measure how closely generated responses align with the reference text, making them particularly relevant for summarization, content generation, and structured output validation.
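As a rough illustration of what these two measures capture, the sketch below implements a character-level Levenshtein similarity and a word-level ROUGE-L F-measure from their textbook definitions. The normalization choices (dividing edit distance by the longer string's length, lowercase whitespace tokenization, equal weighting of precision and recall) are assumptions; the platform's own implementation may differ.

```python
from typing import Sequence


def levenshtein_distance(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        curr = [i]
        for j, cb in enumerate(b, 1):
            curr.append(min(prev[j] + 1,                # deletion
                            curr[j - 1] + 1,            # insertion
                            prev[j - 1] + (ca != cb)))  # substitution
        prev = curr
    return prev[-1]


def levenshtein_similarity(a: str, b: str) -> float:
    """1.0 for identical strings, 0.0 for completely different ones."""
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1.0 - levenshtein_distance(a, b) / longest


def lcs_length(a: Sequence[str], b: Sequence[str]) -> int:
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, 1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(candidate: str, reference: str) -> float:
    """ROUGE-L F-measure with equal weight on precision and recall."""
    cand, ref = candidate.lower().split(), reference.lower().split()
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(cand), lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


print(levenshtein_distance("kitten", "sitting"))
print(round(rouge_l_f1("the cat sat on the mat", "the cat is on the mat"), 3))
```

Levenshtein similarity is sensitive to character-level edits, which suits structured output validation. ROUGE-L rewards word order preserved across the whole text, which is why it is commonly used for summaries.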

Knowledge grounding metrics

- Response relevancy

- Faithfulness

These metrics assess whether generated responses are both relevant to the input query and grounded in the provided knowledge sources.

Human feedback signal

- Satisfaction score

This metric reports the average satisfaction score across evaluations of a given entity.

Tier 3: Measuring value creation

The third tier focuses on value creation—how AI systems support business workflows, leverage enterprise knowledge, and enable new capabilities across applications.

At this stage, measurement moves beyond operational performance and response accuracy to evaluate how AI systems assist users, enhance knowledge discovery, and integrate enterprise information into decision processes. The emphasis shifts from how AI performs to how AI contributes to enterprise outcomes.

Response usefulness indicators

- Creativity

- Helpfulness

- Clarity

These indicators assess the qualitative dimensions of AI-generated outputs—whether responses are understandable, actionable, and appropriately structured for end users. They provide insight into how effectively AI systems augment human work rather than simply generate content.

Knowledge utilization indicators

- Documents: Number of documents ingested into the knowledge base (PDFs, files, datasets, etc.)

- Words: Total number of words processed from all ingested documents

- Linked apps: Number of connected applications

These indicators reflect the breadth and depth of enterprise knowledge accessible to AI systems. They signal the system’s capacity to draw from diverse information sources and support more contextually informed outputs.

Knowledge retrieval evaluation

ZBrain Builder supports two retrieval approaches for knowledge-grounded AI responses:

- Basic retrieval

- Agentic retrieval

Basic retrieval follows a traditional retrieval-augmented generation approach, fetching relevant documents from the knowledge base to support response generation.

Agentic retrieval, enabled through ZBrain Builder’s agentic RAG framework, introduces a more advanced, decision-driven model of retrieval.

In this approach, the language model operates as a reasoning agent—determining when retrieval is required, orchestrating query refinement, evaluating relevance, and validating retrieved information before generating a response.

This iterative process enables AI systems to retrieve and apply knowledge more intelligently, improving both response quality and contextual accuracy in complex enterprise scenarios.
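The contrast between the two approaches can be illustrated with a toy example. This is a sketch under stated assumptions, not ZBrain Builder's implementation: word overlap stands in for relevance evaluation, and the `needs_retrieval` and `refine` callables are hypothetical stand-ins for decisions that a language model would make in a real agentic RAG system.

```python
def tokenize(text):
    return set(text.lower().split())


def basic_retrieve(query, knowledge_base, top_k=2):
    """Basic retrieval: rank documents by word overlap with the query."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc)), doc) for doc in knowledge_base]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(score, doc) for score, doc in scored[:top_k] if score > 0]


def agentic_retrieve(query, knowledge_base, needs_retrieval, refine,
                     min_overlap=2, max_rounds=3):
    """Agentic retrieval: decide, retrieve, check relevance, refine, repeat."""
    if not needs_retrieval(query):
        return []  # the agent judges that no retrieval is required
    current = query
    for _ in range(max_rounds):
        hits = basic_retrieve(current, knowledge_base)
        # Keep only results that pass the (toy) relevance check.
        relevant = [doc for score, doc in hits if score >= min_overlap]
        if relevant:
            return relevant
        current = refine(current)  # rewrite the query and try again
    return []


# Hypothetical knowledge base and stand-in decision functions.
kb = [
    "the refund policy allows returns within 30 days",
    "quarterly revenue grew in the retail segment",
]
needs = lambda q: not q.lower().startswith("hello")
refine = lambda q: q + " refund policy returns"

print(basic_retrieve("money back rules", kb))
print(agentic_retrieve("money back rules", kb, needs, refine))
```

In this example the basic retriever finds nothing for "money back rules" because no words overlap, while the agentic loop refines the query once and then returns the refund policy document.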

Measurement maturity across tiers

Early deployments tend to emphasize operational telemetry—including session activity, response time, and token consumption—providing visibility into system usage and execution dynamics. As systems scale, organizations introduce response evaluation metrics such as accuracy, faithfulness, and relevance to assess the quality and reliability of AI outputs.

At higher levels of maturity, measurement expands further to capture user impact and knowledge utilization, incorporating indicators such as helpfulness, clarity, and creativity. These metrics reflect how effectively AI systems support decision-making and augment human work.

By integrating operational monitoring, response evaluation, and knowledge utilization, organizations can move beyond tracking AI activity and develop a more comprehensive understanding of how AI systems contribute to enterprise value.

Applying the three-tier framework across enterprise use cases

The practical value of a structured AI measurement framework becomes clearer when applied to real enterprise use cases. AI agents deployed across business functions can be evaluated consistently using a three-tier lens—efficiency, effectiveness, and value creation.

This approach enables organizations to move beyond measuring isolated AI activity and instead understand how AI systems contribute to operational performance and strategic outcomes.

Financial planning and analysis

AI agents supporting financial planning and analysis can automate data aggregation, generate financial summaries, and assist with financial workflows.

- Tier 1 (Efficiency): Hours saved in financial reporting, time required to generate financial summaries, and data processing speed

- Tier 2 (Effectiveness): Forecast accuracy, variance analysis precision, and relevance of decision-support insights

- Tier 3 (Value creation): Revenue protected through early risk detection and improved capital allocation decisions

In this context, measurement enables finance leaders to evaluate not only productivity improvements but also whether AI-driven insights enhance the quality of financial decisions.

Talent acquisition and workforce support

Human resources teams increasingly deploy AI agents to assist with candidate screening, job matching, and recruitment workflows.

- Tier 1 (Efficiency): Time-to-hire reduction, resume screening speed, and recruiter workload reduction

- Tier 2 (Effectiveness): Candidate–job match accuracy, offer acceptance rate, and hiring decision quality

- Tier 3 (Value creation): Improved employee retention and stronger workforce productivity outcomes

By measuring across these tiers, organizations can determine whether AI improves hiring decisions rather than simply accelerating recruitment processes.

Procurement and operations monitoring

Supply chain environments generate large volumes of operational data across procurement, logistics, and supplier networks. AI agents can analyze these signals to detect disruptions and support operational decision-making.

- Tier 1 (Efficiency): Purchase order processing speed, automated workflow throughput, and operational cycle time reduction

- Tier 2 (Effectiveness): Exception resolution accuracy, demand forecast alignment, and supplier risk detection accuracy

- Tier 3 (Value creation): Revenue protected through early supply disruption detection and improved supply chain resilience

Across these examples, a consistent principle emerges: every AI deployment should ultimately be linked to a tier 3 business outcome. Without this connection, organizations risk measuring activity rather than value—capturing what AI systems do, but not what they achieve.
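One way to enforce this principle is to record each deployment's metrics by tier and flag any tier left empty. The sketch below is illustrative only. The `Scorecard` class and tier identifiers are hypothetical names, and the metric labels are taken from the examples above.

```python
from dataclasses import dataclass, field

TIERS = ("efficiency", "effectiveness", "value_creation")


@dataclass
class Scorecard:
    use_case: str
    metrics: dict = field(default_factory=dict)  # tier name -> list of metric names

    def add(self, tier, metric):
        if tier not in TIERS:
            raise ValueError(f"unknown tier: {tier}")
        self.metrics.setdefault(tier, []).append(metric)

    def missing_tiers(self):
        """Return the tiers that have no metric defined yet."""
        return [tier for tier in TIERS if not self.metrics.get(tier)]


fpna = Scorecard("Financial planning and analysis")
fpna.add("efficiency", "Hours saved in financial reporting")
fpna.add("effectiveness", "Forecast accuracy")
fpna.add("value_creation", "Revenue protected through early risk detection")

procurement = Scorecard("Procurement and operations monitoring")
procurement.add("efficiency", "Purchase order processing speed")

print(fpna.missing_tiers())
print(procurement.missing_tiers())
```

A deployment whose scorecard reports `value_creation` as missing is being measured on activity alone, which is the gap the framework is meant to close.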

Why AI ROI measurement requires strong governance

Measurement frameworks alone are insufficient without the governance structures required to ensure that AI systems operate transparently, reliably, and accountably.

In the absence of governance, organizations may track AI performance metrics but remain unable to explain how automated systems influence business outcomes. This lack of visibility introduces both operational and regulatory risk.

Effective AI governance requires more than monitoring system activity—it requires the ability to evaluate output quality and trace how AI-generated insights propagate through enterprise decision processes.

Core governance capabilities typically include:

- Continuous monitoring of AI system performance

- Structured evaluation of outputs against defined quality metrics

- Alerting mechanisms when outputs fall below acceptable thresholds

- Comprehensive logging of system activity and evaluation results for auditability

Together, these capabilities provide visibility into how AI systems behave within enterprise workflows, enabling organizations to investigate anomalies, diagnose performance degradation, and maintain control over system behavior.
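The capabilities listed above can be sketched as a single review step that evaluates a response against thresholds, raises an alert on a breach, and appends an audit record. The threshold values, the metric scores, and the `review_output` function are assumptions for illustration. A production system would route alerts and logs to its own monitoring stack.

```python
import json
import logging

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("ai_governance")

# Illustrative thresholds; real values depend on the use case.
THRESHOLDS = {"faithfulness": 0.8, "relevance": 0.7}


def review_output(response_id, scores, thresholds=None, audit_log=None):
    """Evaluate scores against thresholds, alert on breaches, and log the result."""
    thresholds = THRESHOLDS if thresholds is None else thresholds
    breaches = sorted(
        metric for metric, score in scores.items()
        if metric in thresholds and score < thresholds[metric]
    )
    record = {"response_id": response_id, "scores": scores, "breaches": breaches}
    if audit_log is not None:
        audit_log.append(json.dumps(record))  # serialized entry for later audit
    if breaches:
        logger.warning("response %s below threshold on: %s", response_id, breaches)
    else:
        logger.info("response %s passed all checks", response_id)
    return record


audit_log = []
review_output("r-001", {"faithfulness": 0.92, "relevance": 0.85}, audit_log=audit_log)
flagged = review_output("r-002", {"faithfulness": 0.91, "relevance": 0.55}, audit_log=audit_log)
print(flagged["breaches"])
```

Writing every record to the audit log, not only the failures, is a deliberate choice here: auditability depends on being able to show what passed as well as what was flagged.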

Platforms such as ZBrain Builder operationalize these governance requirements by embedding monitoring, evaluation, and control mechanisms directly into AI workflows. Through execution logs, evaluation metrics, and monitoring dashboards, organizations can observe system performance, validate response quality, and trace how AI-generated outputs influence downstream processes.

This level of observability enables organizations to detect failures, enforce policy constraints, and sustain reliable AI operations at scale.

As regulatory expectations around AI continue to evolve, governance is becoming a central requirement of enterprise AI deployment. Organizations must be able to demonstrate that automated systems are not only deployed, but also measured, monitored, and controlled.

In this context, measurement frameworks serve a dual purpose. They function not only as management tools for performance evaluation but also as the foundation for responsible, auditable AI governance.

Measuring what matters

As AI adoption accelerates, the central challenge facing enterprises is not whether AI can create value—but whether that value can be measured, understood, and scaled.

Most organizations today measure what is easiest: system activity, automation levels, and usage patterns. These indicators provide operational visibility, but they fall short of answering the question that matters most to leadership—how AI contributes to business performance.

Addressing this requires a shift toward measuring what matters. Organizations must link AI performance to decision quality, process outcomes, and financial impact. This requires not only new metrics but also stronger governance, clearer accountability, and deeper integration between AI systems and enterprise workflows.

Measurement, in this context, becomes a capability—not a reporting exercise. It enables organizations to learn from deployments, refine systems, and scale value across the enterprise.

Over time, this capability compounds. Organizations that measure effectively improve faster, allocate capital more precisely, and build stronger alignment between AI investments and business outcomes.

As AI becomes a core component of enterprise operations, the ability to measure its impact will separate experimentation from execution—and activity from value.

Looking to move from AI experimentation to measurable business value? ZBrain Builder enables organizations to operationalize AI across enterprise workflows with built-in monitoring, evaluation, and governance capabilities. Book a demo to see how it works.

Author’s Bio

An early adopter of emerging technologies, Akash leads innovation in AI, driving transformative solutions that enhance business operations. With his entrepreneurial spirit, technical acumen and passion for AI, Akash continues to explore new horizons, empowering businesses with solutions that enable seamless automation, intelligent decision-making, and next-generation digital experiences.


Frequently Asked Questions

Why is measuring the return on investment (ROI) from AI so challenging?

Measuring AI ROI is difficult because the value generated by AI systems is often distributed across multiple workflows, decisions, and business processes rather than appearing as a single measurable output.

Several factors contribute to this challenge:

- The attribution problem: AI systems rarely operate in isolation. Their outputs influence multiple functions simultaneously, making it difficult to determine how much performance improvement can be attributed to the AI system itself.

- Activity metrics instead of outcome metrics: Many organizations track indicators such as queries processed or tasks automated, which reflect system usage rather than business impact.

- Incomplete cost accounting: AI deployments incur ongoing costs, such as infrastructure, inference, monitoring, and governance, that are often not fully captured in ROI calculations.

- Longer value realization timelines: AI systems typically generate value gradually as models improve with data and organizations adapt workflows around AI-driven insights.

Among these challenges, the attribution problem is particularly significant. Because AI often augments human decision-making across multiple processes—such as forecasting, pricing, inventory management, and production planning—improvements in business performance may result from a combination of factors. This diffusion of impact makes it difficult to isolate the specific financial contribution of AI systems.

What is the difference between activity metrics and outcome metrics in AI measurement?

Organizations typically measure AI performance in two ways: activity metrics and outcome metrics.

Activity metrics track how AI systems are used within workflows. These indicators often include:

- Number of AI queries processed

- Tasks automated

- Internal adoption rates

- Hours of labor saved

While these signals demonstrate system utilization, they do not necessarily indicate whether the organization is generating economic value.

Outcome metrics, by contrast, focus on business impact, such as:

- Improvements in forecasting accuracy

- Revenue growth linked to AI insights

- Reductions in operational risk

- Increased customer lifetime value

In simple terms, activity metrics measure what AI systems are doing, while outcome metrics assess what they are actually achieving. Boards and finance leaders expect organizations to demonstrate the latter.

Why do traditional ROI frameworks fail when applied to AI?

Traditional ROI models were designed to measure improvements in labor productivity and operational efficiency. AI, however, often creates value through improved decision quality, faster organizational learning, and new capabilities that emerge over time. These effects are harder to capture using conventional financial measurement frameworks.

What are the most common mistakes organizations make when measuring AI performance?

Many organizations rely on proxy indicators that appear meaningful but fail to capture real economic value.

Common examples include:

- Hours of labor saved

- Number of automated tasks

- Number of AI models deployed

- Internal adoption rates

These indicators often represent operational activity rather than business impact.

A frequently cited issue is “trapped value.” Time saved through automation does not automatically produce a financial return unless the freed capacity is redirected toward higher-value activities such as revenue generation or strategic decision-making.

As a result, companies may report productivity improvements while seeing little measurable improvement in profitability.
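A short worked example makes the gap concrete. The figures and the `realized_value` function below are hypothetical. The sketch assumes that saved hours produce financial value only in proportion to the share that is redeployed to higher-value work.

```python
def realized_value(hours_saved, hourly_cost, redeployed_share):
    """Split the nominal value of saved hours into realized and trapped parts."""
    if not 0 <= redeployed_share <= 1:
        raise ValueError("redeployed_share must be between 0 and 1")
    nominal = hours_saved * hourly_cost
    realized = nominal * redeployed_share
    return {"nominal": nominal, "realized": realized, "trapped": nominal - realized}


# Hypothetical figures: 1,000 hours saved at a cost of 50 per hour,
# with a quarter of the freed capacity redirected to higher-value work.
print(realized_value(hours_saved=1000, hourly_cost=50, redeployed_share=0.25))
```

Under these assumptions, a reported productivity gain of 50,000 translates into 12,500 of realized value, with the remaining 37,500 trapped until the freed capacity is put to use.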

What is the three-tier framework for measuring AI value?

A more comprehensive way to measure AI impact is through a three-tier framework, which evaluates AI systems across progressively deeper levels of value creation.

Tier 1 – Efficiency

Focuses on operational improvements such as:

- Automation of manual tasks

- Reduced processing time

- Lower operational costs

- Faster workflow execution

Tier 2 – Effectiveness

Evaluates whether AI improves decision-making or process outcomes, including:

- Improved forecasting accuracy

- Reduced process errors

- Better response quality

- Improved consistency in automated outputs

Tier 3 – Value creation

Examines how AI contributes to strategic business outcomes, such as:

- Net-new revenue opportunities

- Improved enterprise decision quality

- New products or services enabled by AI

- Expansion of automation into complex workflows

Organizations typically mature through these tiers as AI adoption expands across the enterprise.

What is ZBrain Builder, and how does it support enterprise AI adoption?

ZBrain Builder is an enterprise agentic AI orchestration platform designed to help organizations build, deploy, and manage AI agents and applications using proprietary enterprise data. The platform provides a low-code environment for orchestrating workflows, integrating enterprise systems, and deploying AI solutions securely across business operations.

By combining AI development, workflow orchestration, knowledge integration, and monitoring capabilities in a single platform, ZBrain Builder helps enterprises move from isolated AI experiments to scalable production deployments.

How does ZBrain Builder support AI monitoring, evaluation, and observability?

Enterprise AI systems require continuous monitoring to ensure reliability and alignment with business objectives. ZBrain Builder provides observability capabilities that allow organizations to track how AI agents, applications, and prompts behave in production environments.

These capabilities typically include:

- Monitoring dashboards that track system usage and performance

- Evaluation frameworks that assess response quality and correctness

- Execution logs that trace workflow activity

- Performance metrics for agents, applications, and prompts

Together, these signals provide visibility into operational performance, response quality, and system utilization across AI deployments.

Why is governance becoming an important component of AI measurement?

As AI systems increasingly influence operational and strategic decisions, organizations must be able to demonstrate that those systems operate transparently, reliably, and responsibly.

Effective AI governance typically requires the ability to:

- Monitor AI system performance continuously

- Evaluate response quality using structured metrics

- Detect anomalies or performance degradation

- Maintain logs and audit trails for regulatory compliance

Governance ensures that organizations not only deploy AI effectively but also manage the associated risks as AI systems scale across critical workflows.

How can organizations get started with ZBrain Builder?

To begin your AI journey with ZBrain, you can contact the team at hello@zbrain.ai or submit an inquiry through the website at zbrain.ai. The team will work with you to assess your current AI environment, identify key integration opportunities, and design a customized pilot plan aligned with your organization’s goals.
